The truth is, AI in healthcare often seems more impressive on paper than in practice. The articles, research papers, and press releases proclaim the arrival of a “new paradigm,” a “revolution,” a “tsunami” that’s “overwhelming” doctors and patients alike. But when you ask someone what it’s like to actually work in a hospital or clinic today, you get a different story.

It’s not all talk and no action, of course. There are some very real changes underway, and more to come. But if you’re expecting AI to upend medicine anytime soon, you’ll likely be disappointed. The real story of AI in healthcare is a more nuanced one, of marginal gains, surprising applications, reluctant converts, and, yes, some remarkable advances.

This article isn’t here to sell you on a dream. It’s here to explore what’s actually happening in the field: the promising areas, the areas of pushback, the leaders and the laggards, and what it all means for care providers and patients in a health care system that’s already under considerable strain.

1. The reality of AI in health care today

Step into a hospital today, and you won’t find robots making rounds like you might have imagined a decade ago. Look a bit harder, though, and you’ll find AI embedded in the routine: the computer screen that brings up your X-rays, the program that books your appointments, even the chatbot that won’t leave you alone on the hospital website.

That’s all AI. Yet here’s the surprise: for all the attention they’re getting, the technologies aren’t as widely used as you might think. While 60% to 70% of health-care providers are exploring AI’s potential, according to a recent report by the consulting firm McKinsey, only a small fraction have broadly adopted AI applications. It’s a little like everyone has bought a gym membership, but relatively few are actually going.

Where AI is making inroads

At its core, AI works best when there are lots of data and lots of patterns to detect. So where is AI being used effectively in health care?

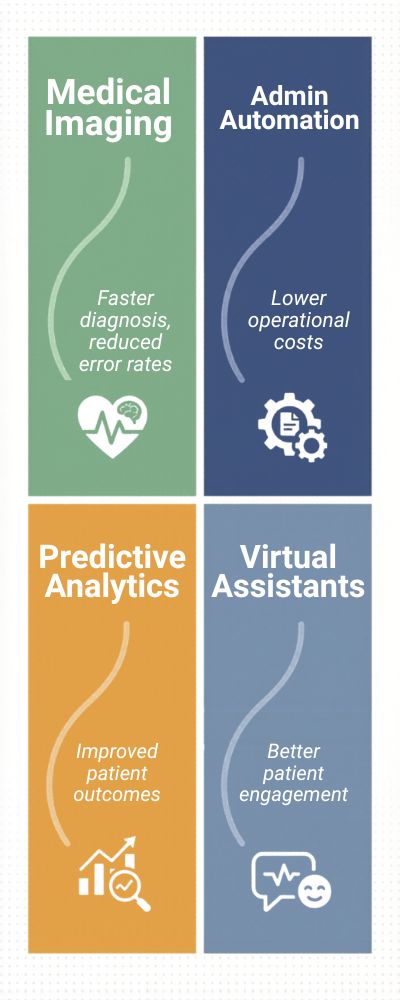

- On medical images like X-rays and MRIs, to help identify abnormalities.

- On administrative tasks, like billing and scheduling and filling out patients’ charts.

- To analyze data and predict which patients are most likely to have problems.

A study published in Nature in 2019 found that AI systems could analyze images as accurately as a human doctor, or even more accurately. But that was under idealized conditions. The real world can be different.

| Area of Use | Adoption Rate (%) | Reported Impact |
|---|---|---|
| Medical Imaging | 75% | Faster diagnosis, reduced error rates |
| Admin Automation | 85% | Lower operational costs |
| Predictive Analytics | 60% | Improved patient outcomes |
| Virtual Assistants | 50% | Better patient engagement |

The results are impressive on paper. Ask doctors, however, and they might shrug and say, “It works, but it’s no miracle cure.” That sort of thing.

The Inconvenient Truth We Don’t Like to Discuss

Now, let’s get a little real. AI isn’t something you simply download to your healthcare smartphone app. It collides with data quality issues, patient-privacy protocols, and, let’s just say it, a certain level of distrust.

That’s not to say physicians are Luddites. They’re merely careful. When an algorithm recommends something counterintuitive, someone still has to be accountable for that decision. And that someone almost always has a medical license to lose.

A JAMA study on physician attitudes toward AI found that, although doctors believe AI can be useful, more than 40% expressed concerns about the technology’s reliability and accountability. That’s not pushback, that’s prudence.

So … Is AI Actually Working or Not?

The short answer is yes … sort of.

AI performs wonderfully in limited contexts and inconsistently in others. It excels when it enhances the work of healthcare professionals, not replaces them. Perhaps that’s the nuance we’ve been overlooking all along.

In a weird way, that’s comforting, too. Healthcare is an inherently human endeavor, messy, emotional, unpredictable. AI certainly has a role to play. But it doesn’t offer a reassuring hand on the shoulder or deliver difficult news with empathy. At least, not yet.

If anything, I think the real headline here isn’t that AI is revolutionizing healthcare; it’s that AI is gradually, clumsily finding its way into healthcare, much like the new employee who’s really smart, occasionally awkward, but figuring things out a little more each day.

2. What’s actually happening in AI health care today

Looks nice in a chart, doesn’t it? Except if you ask someone in practice, they’re probably going to say, “Yeah, it’s useful… when it works.”

The Human Element (Which Doesn’t Fit on a Dashboard)

One thing you don’t see in a report? People are still trying to decide how much they can trust these things. You don’t just hit “accept” on an AI recommendation like it’s a software update. There’s a pause. A sense of accountability. A hint of “if this thing screws up and I have to explain it to the family…”

A JAMA Network study on trust in AI found that many clinicians are still careful with AI use, particularly when it comes to critical care decisions. Not pushback, just… a cautious attitude. And fair enough. Healthcare isn’t exactly a field where you can trust blindly in a black box.

So, Where Does That Leave Us?

Somewhere in between. AI isn’t curing cancer (yet), but it’s not all hot air either. It’s doing valuable, sometimes mundane, sometimes clever work. And maybe that’s the real news story here: AI in healthcare isn’t sexy, it’s functional. It’s improving people’s lives in the background, saving time, catching issues early, making systems a little less dysfunctional. It’s not a revolution. More like a software patch you only appreciate when it’s gone.

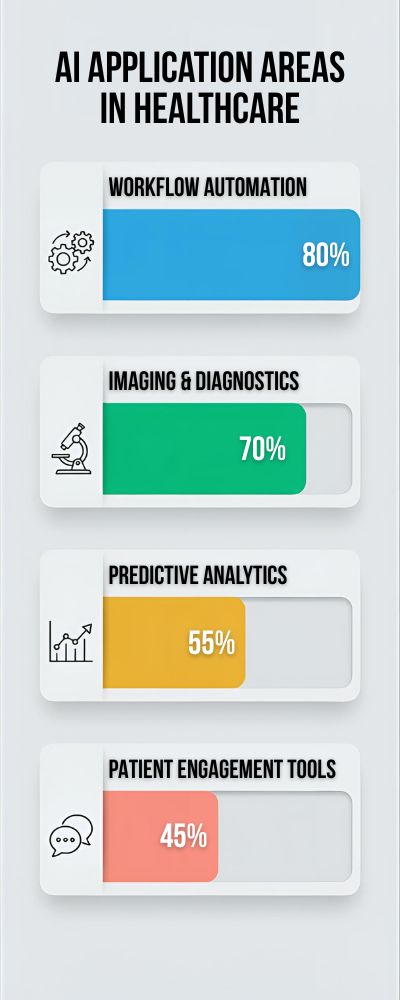

| AI Application Area | % of Organizations Using It | What It Actually Improves |
|---|---|---|
| Imaging & Diagnostics | 70% | Faster detection, triage |
| Workflow Automation | 80% | Reduced admin burden |
| Predictive Analytics | 55% | Early risk identification |
| Patient Engagement Tools | 45% | Communication & follow-ups |

The Human Element (Missing From the Dashboard)

The one thing not reflected in the reports, however, is that people are still learning to trust these systems. A physician isn’t pressing “okay” on an AI-generated recommendation as they might with an operating system update. There’s a hesitation. A responsibility. A touch of “this could go wrong and I have to tell the family.”

A recent study published by JAMA Network on trust in AI found that many clinicians remain fairly conservative, especially when it comes to life-or-death decisions. Not resistant, mind you, just … careful. And I don’t blame them. Medicine probably isn’t the ideal setting for blind faith in a black box.

So, Where Are We?

Somewhere in between. AI isn’t saving the world (not yet), but it’s not all hype either. It’s doing actual work, sometimes boring, sometimes brilliant. And perhaps that is the real headline: AI in medicine is not exciting; it’s practical. It’s helping people behind the scenes, saving a bit of time, picking a few things up a bit earlier, making things a bit less clunky. Not transformative. More like an operating system update you only notice when you lose it.

3. Surprising use cases in AI for health care

You say “AI in healthcare” and most people imagine robots performing operations or computers interpreting X-rays. That makes sense. Those are the headline acts. However, some of the most intriguing applications are far more subtle, almost bizarrely niche. They’re things you might not imagine applying AI to until you see them in action.

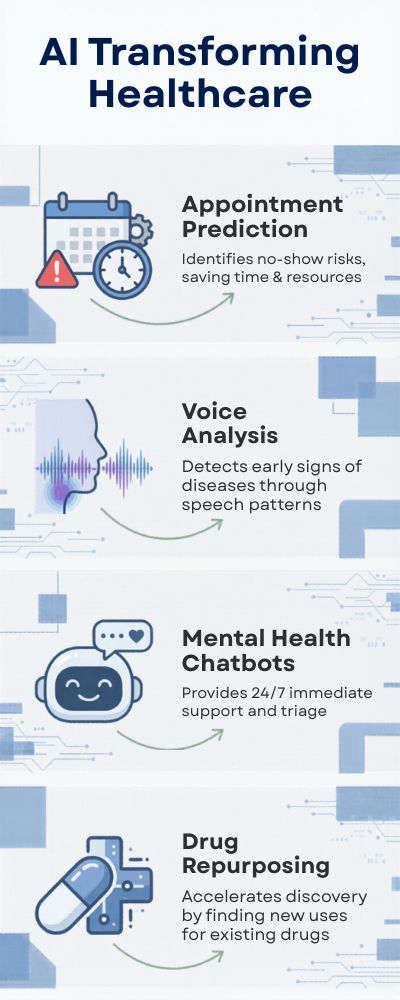

For instance, consider hospital scheduling. Not very exciting, is it? Except failed appointments cost healthcare providers billions of dollars. Research published by the NCBI on predictive scheduling suggests AI can reduce no-show rates by as much as 30%. That may not be sexy but it saves time, money, and quite frankly, a lot of headaches.
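To make that 30% figure a little more concrete, here is a back-of-the-envelope sketch in Python. Everything in it is an assumption for illustration: the function name `no_show_savings`, the clinic size, the baseline no-show rate, and the cost per missed slot are all invented, and the 30% is treated as a relative reduction in no-shows.

```python
def no_show_savings(appointments, baseline_rate, relative_reduction, cost_per_no_show):
    """Estimate no-shows avoided and money saved (all inputs are illustrative)."""
    baseline_no_shows = appointments * baseline_rate
    avoided = baseline_no_shows * relative_reduction
    return avoided, avoided * cost_per_no_show


# Hypothetical clinic: 10,000 appointments a year, a 20% no-show rate,
# a 30% relative reduction, and an assumed $200 lost per missed slot.
avoided, saved = no_show_savings(10_000, 0.20, 0.30, 200)
print(f"No-shows avoided: {avoided:.0f}, estimated savings: ${saved:,.0f}")
```

With these made-up inputs, that works out to about 600 fewer empty slots a year. The real number depends entirely on the clinic, which is rather the point.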

Listening AI (Literally)

This is something that feels slightly science-fiction, yet is already a reality. AI software analyzing patients’ speech patterns for early signs of neurological diseases, such as Alzheimer’s or Parkinson’s.

Research published by Nature Digital Medicine has demonstrated that tiny changes in speech patterns — in the way you pause, the tone you use, or even your choice of words — can be a sign of early cognitive decline, often years ahead of a formal diagnosis.

It’s a bit strange when you think about it. The way you chat to someone becoming a diagnostic tool.

Mental Health Support That Never Sleeps

This is one that’s a little more contentious. AI chatbots that offer mental health support. Many people dismiss the idea outright — understandably — yet they are more prevalent than you might think.

According to a WHO report on digital health interventions, AI-powered tools are increasingly being used to improve access to mental health services, particularly in areas with a shortage of trained therapists.

Are they a substitute for human interaction? Absolutely not. Not even close. But if someone needs to talk to something at 2 a.m., they are better than nothing. Sometimes, that’s more important than we like to admit.

| Use Case | What AI Is Doing | Why It Matters |
|---|---|---|
| Appointment Prediction | Identifies no-show risks | Saves time & resources |
| Voice Analysis | Detects early cognitive decline | Earlier intervention |
| Mental Health Chatbots | Provides 24/7 support | Expands accessibility |
| Drug Repurposing | Finds new uses for existing meds | Faster, cheaper treatments |

AI for Drug Repurposing: The Unsung Hero

This application doesn’t get the attention it deserves. AI can be used to repurpose existing drugs, taking years off the drug development process.

In the context of COVID-19, AI was used to identify potential drugs to fight the disease faster than the traditional approach could. This paper in Nature Machine Intelligence highlighted how AI accelerated drug discovery pipelines significantly.

It’s not dramatic, but when every day counts, saving a few months matters.

Why don’t we hear more about this?

Because it’s boring. No one is posting “AI optimizes physician scheduling slots!” or “AI analyzes speech patterns!”

But these are the use cases that quietly stack up. Small wins. Real impact. And maybe that’s the nature of AI in healthcare, as it’s a collection of small victories. Nothing to tell over dinner, but if you’ve ever waited weeks for an appointment, you might appreciate the small victory.

4. AI vs. physician

“Will AI replace doctors?” It’s the kind of question that’s exciting, drives traffic, and… doesn’t really hold up when you think about it. Replace them how? Diagnose? Treat? Communicate with patients? Have the tough conversations? That’s a lot of very different tasks lumped into a single job title.

And most of the evidence doesn’t even point to replacement. According to one analysis of AI in healthcare by McKinsey, AI could potentially automate 10-20% of the tasks a physician performs, which would mainly include administrative duties. Not the human part. Not even close.

Where AI Does Beat Humans (Yes, It Happens)

Alright, let’s be fair. There are certainly specific, niche tasks in which AI outdoes physicians, particularly in identifying patterns. For example, a study published in Nature found that AI was equal or superior to expert radiologists in detecting breast cancer on mammography images.

That’s big. That’s important. And it’s under ideal conditions: perfect data, a clear goal, no messy hospital environment, no missing information, and no time constraint. So yes, AI can perform better under the right circumstances. But life isn’t a controlled environment.

A Quick Side-by-Side

| Capability | AI Systems | Physicians |
|---|---|---|
| Pattern Recognition | Extremely high (data-driven) | Strong, experience-based |
| Speed | Instant | Fast, but limited by workload |
| Context Understanding | Limited | Deep, nuanced |
| Emotional Intelligence | None | Essential |
| Accountability | Indirect | Direct responsibility |

Looks great on paper. Reality is a bit trickier.

The Bit You Don’t See in the Benchmarks

One underappreciated point is that patients don’t just want the right answer. They want comfort. Understanding. A little reassurance when the situation is ambiguous or terrifying. Your AI can be as accurate as you like. If it delivers a diagnosis without explanation or humanity, that’s a failure.

A JAMA Network Open study found that patients trusted treatment recommendations more when they came from a human, even when AI was involved behind the scenes. That tells you something: trust isn’t just about accuracy. It’s also about who delivers the message.

Rivalry or Partnership?

Frankly, I think the “AI vs. physician” trope is a bit old-fashioned. This isn’t a zero-sum game. It’s more a partnership we haven’t figured out how to run yet. AI handles the busy work. Data. Pattern recognition. Repetition. Doctors handle the decisions. The interpretation. All the fuzzy grey stuff that drives algorithms nuts.

If anything, the real disruption isn’t replacement. It’s a rebalancing. Doctors filling out fewer forms and doing more actual doctoring. Maybe that’s what we should be aiming for. Not AI in the driver’s seat. But AI in the backseat, while we do what we’re really good at.

5. AI and medical imaging

AI in medical imaging is one of the few places where AI lives up to the hype. But it’s not in a cool, futuristic way. It’s more of a “this is actually kind of cool, but in a boring way” way.

After all, what is a radiologist, if not a pattern recognizer? They look at images, spot anomalies, and make diagnoses based on those anomalies. AI can do that, too, and it’s actually pretty good at it. Given enough images to look at, an AI algorithm can spot anomalies that might have been missed by an overworked radiologist.

In one Nature study, AI performed as well as or better than human radiologists at detecting breast cancer in mammography images. Not only that, but the AI produced fewer false positives and false negatives. That’s a clear win, right? Well, sort of. Yes, AI is really, really good at medical imaging. It’s fast, accurate, and it never gets tired.
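If “fewer false positives and false negatives” feels abstract, a small Python sketch shows how those numbers are usually summarized. The counts below are invented for illustration and do not come from the Nature study; `screening_metrics` is a hypothetical helper name.

```python
def screening_metrics(tp, fp, tn, fn):
    """Return sensitivity, specificity, and false-negative/positive rates."""
    sensitivity = tp / (tp + fn)  # share of real cancers that were caught
    specificity = tn / (tn + fp)  # share of healthy scans correctly cleared
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "false_negative_rate": 1 - sensitivity,
        "false_positive_rate": 1 - specificity,
    }


# Made-up counts for two readers on the same 1,000 scans (50 true cancers).
reader_a = screening_metrics(tp=40, fp=95, tn=855, fn=10)
reader_b = screening_metrics(tp=44, fp=76, tn=874, fn=6)
for name, metrics in (("Reader A", reader_a), ("Reader B", reader_b)):
    print(name, {key: round(value, 3) for key, value in metrics.items()})
```

In this toy comparison, Reader B misses fewer cancers and raises fewer false alarms at the same time. That combination is what makes a result like this notable, since pushing one error rate down often pushes the other up.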

In one Radiological Society of North America study, AI-assisted image interpretation was able to reduce image reading time by up to 30%. That’s huge, especially when you’re working in an overburdened ER. But there’s a catch: AI doesn’t really understand the context of what it’s looking at. It’s looking at an image of a tumor, not an actual tumor. And there’s a difference.

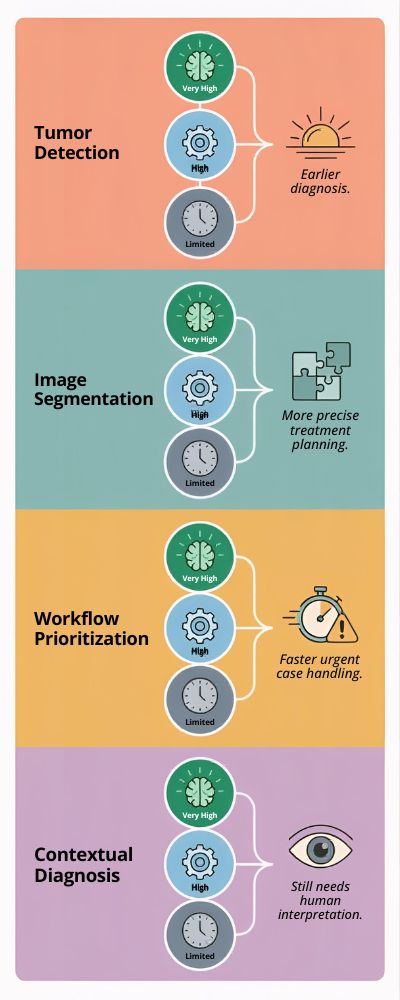

| Imaging Task | AI Strength Level | Real-World Impact |
|---|---|---|
| Tumor Detection | Very High | Earlier diagnosis |
| Image Segmentation | High | More precise treatment planning |
| Workflow Prioritization | High | Faster urgent case handling |
| Contextual Diagnosis | Limited | Still needs human interpretation |

Clean table, but reality? A bit messier.

The “Second Pair of Eyes” Effect

Radiologists often mention this when asked about AI. It’s a second pair of eyes. Not a decision maker. More like an intern who never sleeps but lacks the big picture.

And that’s where it helps. AI thinks it sees something, the human reviews, and hopefully, links it to history, symptoms, and all the complex human factors.
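One way to picture the “second pair of eyes” is as a reading queue: the AI reorders the worklist, and a human still reads every study. The Python sketch below is only an illustration under that assumption. The study IDs and suspicion scores are invented, and `prioritize` is a hypothetical helper, not any vendor’s API.

```python
import heapq


def prioritize(studies):
    """Yield (study_id, score) from most to least suspicious."""
    # heapq is a min-heap, so negate the score to pop the highest first.
    heap = [(-score, study_id) for study_id, score in studies]
    heapq.heapify(heap)
    while heap:
        neg_score, study_id = heapq.heappop(heap)
        yield study_id, -neg_score


# Invented worklist: (study ID, AI suspicion score between 0 and 1).
worklist = [("chest-001", 0.12), ("head-002", 0.91), ("chest-003", 0.47)]
for study_id, score in prioritize(worklist):
    # The score only sets reading order; a radiologist still makes the call.
    print(f"{study_id}: score {score:.2f} -> queued for human review")
```

Nothing in the sketch makes a diagnosis. It only decides what gets looked at first, which is roughly the role radiologists describe.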

I’ve read at least one study from JAMA on AI-assisted diagnosis showing that humans and AI together make better decisions than either alone. Which, to be honest, seems obvious.

So… Should We Trust It?

That’s the question, isn’t it?

Trust AI completely? No. Not trust it at all? Also no.

Somewhere in the middle seems right. Use it as a tool. Allow it to do its thing, but have a human overseeing who can say, “Wait, does this really apply here?” Because an image is only one data point. In healthcare, there’s never just one data point.

6. Invisible ways in which AI is improving health care

A lot of what AI does in healthcare isn’t sexy. It doesn’t make for front-page news, or even a “holy cow!” moment. It’s the kind of thing you don’t even notice until it’s gone. Like when a server crashes and you realize how much it was actually doing in the background.

For example: workflow. AI is working behind the scenes in hospitals all the time, tweaking schedules, shifting staff around, modeling patient movement. A report from McKinsey on healthcare operations found that AI-optimized scheduling can cut down patient wait times by 15 to 25%. Not super exciting, but you try sitting in the waiting room for hours and see how you feel about it.

Slashing Paperwork (at Last)

If you ask any given physician what their biggest time commitment is, you’ll probably get the same answer: paperwork. Tedious, mind-numbing, soul-sucking paperwork.

And that’s where AI comes in: programs that can do everything from transcribing doctor-patient conversations to filling out patient records to recommending billing codes. A report from Health Affairs found that docs spend roughly half their time on administrative tasks, and that AI can significantly cut down on the time spent on paperwork.

Not eliminate it, mind you. I’m not making any promises that it’s a paperwork panacea. But just cutting it down to size? That’s time that docs can spend with patients. Or hell, just time to grab a drink of water.

Here’s a quick rundown of the “unsung heroes” of AI in healthcare:

| Area | What AI Does Quietly | Why It Matters |
|---|---|---|
| Scheduling Optimization | Predicts patient flow | Shorter wait times |
| Clinical Documentation | Automates note-taking | Less burnout for doctors |
| Resource Allocation | Balances staff & equipment usage | Smoother operations |
| Early Risk Alerts | Flags subtle warning signs | Prevents complications |

It’s easy to understand. It’s much harder to live.

How AI Can Help

I don’t think this aspect of AI gets enough play. AI as the canary in the coal mine. AI as the first warning sign. AI as the guard against human error, against a bad day, against a distraction at the wrong moment. AI can help identify a patient who is circling the drain before any human even realizes there is a problem. And it can do it hours before there are overt signs that something is wrong.

As this study in Nature Digital Medicine found: “Predictive models can identify hospitalized patients who will deteriorate hours before clinicians recognize overt clinical signs.” That is what it’s all about. That is how you save lives. That is how you reduce the risk of adverse events. You catch them before they happen. You catch them before they’re a problem.

Sometimes AI isn’t about a step-change in outcomes. Sometimes AI is just about making things a little bit easier. Saving someone 10 minutes on a shift. Avoiding a miscommunication. Not having to wait for someone to get a drug. There’s no way to overstate the importance of these sorts of incremental gains.

It’s the little things that can kill you. It’s the little things that will eat away at you. It’s the little things that can make or break your day. AI can fix a lot of those little things. And that’s nothing to sneeze at. Sometimes you don’t need to make a leap. Sometimes you just need to clear the hurdles.

7. AI cost savings in health care

Healthcare is expensive. No surprises there. What’s more surprising is how much of the expense is plain inefficiency. Missed appointments. The need to run the same tests over and over. Administrative headaches. Slow diagnoses. This is where AI starts paying its own way, not immediately, not completely, but just enough to get noticed.

According to one report by Accenture, AI-enabled healthcare applications could save the U.S. healthcare system up to $150 billion annually by 2026. That’s a big number, but you almost have to squint at it and ask where, exactly, all those billions are coming from.

The Small Fixes That Add Up

It’s not usually a dramatic, headline-grabbing breakthrough. It’s just a hundred little things, done a little bit better.

- Fewer unnecessary tests

- More intelligent scheduling

- Faster diagnoses

- Automated paperwork

A study by Health Affairs discovered that administrative complexity alone accounts for around 25 to 30% of total healthcare spending. Which seems, when you really step back and think about it, a bit…insane. So every time AI carves even a little out of that, it’s not just a matter of saving time. It’s saving cold, hard cash.

Where the Savings Actually Come From

| Area of Impact | How AI Reduces Costs | Estimated Savings Impact |
|---|---|---|
| Administrative Tasks | Automation of paperwork | High |
| Hospital Operations | Optimized staffing & resources | Medium–High |
| Diagnostics | Early detection, fewer errors | High |
| Readmissions | Predictive risk monitoring | Medium |

These kinds of charts make it seem clean. Reality is a tad more complicated, as per usual.

The “Hidden” Savings Nobody Notices

The thing is, there’s another calculation that tends to get missed. Time.

When a physician has fewer notes to type, or less paperwork to deal with, that time doesn’t magically go away. It’s simply allocated elsewhere. More patients. Less burnout. Fewer errors due to fatigue.

Errors are costly. Not just in the literal sense, but in the figurative one, as well. A missed diagnosis or an avoidable complication can snowball rapidly.

A study by the NCBI on healthcare inefficiencies found that errors and inefficiencies alone cost billions of dollars per year. AI doesn’t wipe these out, but it moves in the right direction.

So… Is AI Actually Saving Money Yet?

The short answer is yes, but inconsistently.

Some hospitals are finding that it’s saving them money, in terms of cost reduction and workflow streamlining. Others are in the “it’s costing us more to implement than we’re saving” phase.
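The gap between those two camps is mostly a break-even question. Here is a minimal Python sketch of that arithmetic, with loudly hypothetical figures: the upfront cost, monthly savings, and running costs are invented, and `months_to_break_even` is an illustrative helper rather than a real budgeting method.

```python
import math


def months_to_break_even(upfront_cost, monthly_savings, monthly_running_cost):
    """Return months until net savings cover the upfront cost, or None."""
    net_monthly = monthly_savings - monthly_running_cost
    if net_monthly <= 0:
        return None  # the tool never pays for itself at these rates
    return math.ceil(upfront_cost / net_monthly)


# Hypothetical figures, not drawn from any real hospital.
print(months_to_break_even(500_000, 40_000, 15_000))  # 25k net per month
print(months_to_break_even(500_000, 10_000, 15_000))  # running costs exceed savings
```

The first case pays back in under two years. The second never does, which is the “costing us more than we’re saving” phase in a single line.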

I think that might be the uncomfortable truth that nobody’s really willing to admit. AI isn’t a cost-cutting button that you flip. It’s going to take time, money, and a bit of patience.

But it doesn’t really put on a show when it is working. It’s not something you’re going to hear announced in a big fanfare. It’s just… slightly less wasted resources, slightly better decisions, and a system that feels a tad less strained.

Not the sort of thing that’s going to make the front page. But if you’re balancing the books for a hospital, that’s the sort of thing that can start to add up… significantly.

8. Who’s innovating in AI health care and who’s lagging?

Not all healthcare AI is created equal. While some nations and organizations are already mid-marathon, others are still lacing up their shoes. Sometimes it’s not about being smart or trying hard enough. Sometimes it’s about having good infrastructure, favorable policies, or simply more money than others.

According to the Stanford AI Index Report, the US and China are leading the world in AI spending, including in healthcare, which is no surprise. What is surprising is that they are taking different approaches to getting there, one through private funding and the other through state sponsorship.

The Hare (What’s Really Taking Off)

So, where is AI in healthcare really taking off? There are a few places that you should keep your eye on:

- United States, plenty of startups and investment

- China, implementation at the speed of light and less red tape

- UK & parts of Europe, research galore but less deployment

Take the UK for instance. The National Health Service has been dipping its toes into AI-assisted diagnostics and screening for some time now. An NHS AI Lab report counted more than 30 pilot studies that were already underway. But pilots, as we all know, don’t always translate into full-blown projects. So, where does everyone stand?

| Region/Country | Strengths in AI Healthcare | Current Position |
|---|---|---|
| United States | Innovation, startups, funding | Leading |
| United Kingdom | Data integration (NHS), policy support | Strong |
| China | Scale, data volume, rapid deployment | Leading |
| Germany | Strong research, slower adoption | Catching up |
| India | Growing tech talent, limited infra | Emerging |

This chart is neat and clean, but the truth is a bit messier.

The Laggards (and their Excuses)

You could be tempted to label some of these countries as simply “late to the party.” I think that is a bit of an unfair assessment. There are some legitimate reasons why some of these countries are trailing.

- Disjointed healthcare systems

- Lack of access to quality data

- Uncertainty around regulations

- Budget constraints

Even in countries with robust budgets, the implementation of healthcare AI lags. For example, Europe has some of the most advanced research but the implementation tends to lag due to more stringent data protection rules. As this European Commission overview of eHealth highlights: “Data governance is at the same time an enabling factor and an obstacle for eHealth.” So, the devil really is in the details.

Companies Racing in the Background

While all this is going on at the national level, there is a land grab happening at the company level. The big boys are all playing in healthcare AI, including Google, Microsoft and Amazon. There are also countless startups tackling very specific problems that no one else is addressing.

The thing is, innovation is not always successful. Some pilots fizzle out and don’t go anywhere. A hospital may pilot a tool, love it, but be unable to adopt it. It is a bit like buying a shiny new toy but realizing it doesn’t fit in your life.

So, Who is Winning, Exactly?

Well, it depends on how you define winning. If it is a function of speed and size, then the United States and China are winning. If it is a function of measured progress, then the UK and other European countries are winning.

But I think the real distinction is not between countries, it is between those with health systems that can absorb AI and those that are still working on the fundamentals. And that is the uncomfortable truth of innovation, it is not just about building new and better things, it is about being prepared to absorb them.

9. Challenges in AI health care

In theory, AI in healthcare sounds amazing. Timely diagnoses, reduced expenses, improved care, the trifecta. Easy, right? Except it’s not. A McKinsey report on healthcare AI adoption states that although many healthcare organizations have developed some form of AI pilot program, fewer than 30% of them have successfully deployed and scaled AI across their organization. That difference, between piloting and deploying, is where the struggles sit.

Data Issues (It’s More Complicated Than Most Let On)

AI relies on data. Clean data, structured data, uniform data. Healthcare data? Not so much. Between different formats, missing information and the odd handwritten file, it’s a bit of a mess. And when the data you’re feeding in is problematic, the data you get back will be as well. Plain and simple.

An NCBI study on healthcare data quality states that the heterogeneity of medical data could lead to significant effects on AI algorithms’ performance. In other words, when we say, “The AI messed up,” in some cases, it could be that… the data was a mess to begin with.

Here’s a rundown of the key obstacles:

| Challenge | What It Looks Like in Practice | Impact Level |
|---|---|---|
| Data Quality Issues | Incomplete or inconsistent records | High |
| Integration Problems | Systems not talking to each other | High |
| Regulatory Complexity | Strict data/privacy laws | Medium–High |
| Trust & Adoption | Clinician skepticism | High |

You can’t see this on a graph, but it’s always in the mix. This one’s less about software, more about the human factor. And, frankly, it might be the toughest to address.

When an AI algorithm identifies something out of the ordinary, a doctor doesn’t simply accept it at face value. They pause. You can almost see it: They lean back in their chair, take another look, maybe call over a coworker and say, “Take a look at this. Does this seem right to you?”

That pause isn’t skepticism. That’s stewardship.

A JAMA study on clinician trust in AI found that many doctors approach AI with caution, particularly in high-stakes situations. Frankly, that sounds… comforting? You want the person making decisions about your healthcare to scrutinize information, not simply defer to the screen.

The Stakes Are High

Okay, here’s the part where things get serious.

When AI goes wrong in healthcare, it’s not like your iPhone freezing up or an app crashing in the middle of scrolling through it. The consequences can extend outward, to diagnoses, to treatments, to people’s lives. That’s a different level of accountability.

Unsurprisingly, things slow down. There’s more review. More oversight. More “let’s make sure this is right.” That can be maddening for those of us on the outside, wondering why this isn’t happening faster, but from the inside, it makes perfect sense.

And then there’s the uncomfortable question that nobody quite has an answer to: If something does go wrong, who’s liable? The AI developer who created it? The hospital that adopted it? The doctor who consulted it?

That’s not a straightforward question. The answer is complicated.

So… How Do We Move Forward?

Almost certainly not by pushing full speed ahead. That rarely goes well in healthcare.

It’s more of the behind-the-scenes fixes. Better data. Interoperable systems. AI that can explain its recommendations instead of acting like a black box. And maybe most important, finding ways to help clinicians feel like they’re collaborating with AI, not second-guessing it at every turn.

None of that is terribly sexy. There aren’t a lot of splashy headlines to be found there.

But maybe that’s the real story. The biggest challenge facing AI in healthcare isn’t building it to be smarter, it’s making sure it works within a system that’s already stressed, already human and, if we’re being truthful, already a little brittle in some places.

10. Is AI in health care impacting patients yet?

Few patients are leaving the hospital thinking, “That AI made a huge difference for me today.” And that’s really the whole idea. Much of what’s happening is behind the scenes, and the effects are sometimes almost imperceptible.

- Reduced wait times.

- Quicker lab results.

- Fewer missed communications.

As HealthIT.gov explains in this overview, AI-enabled tools are already being used to optimize clinical workflow and care coordination in a range of healthcare settings. While patients may not always notice, they’re feeling the benefits in minor ways. A bit less time waiting. A few fewer delays. A shade less aggravation.

When It Gets Personal (And You Actually Notice)

Yet there are instances in which the role of AI becomes a tad more apparent. Earlier diagnosis is a good example. It’s often the difference between life and death, or at least life and severe complications. AI-assisted diagnostics has been shown to enhance the timely detection of conditions like cancer and heart disease, according to research published in Nature. Improved detection means improved prognosis. That’s not abstract. That’s personal. That’s one patient getting news a little sooner than he or she otherwise would have.

A Quick Look at Patient-Level Impact

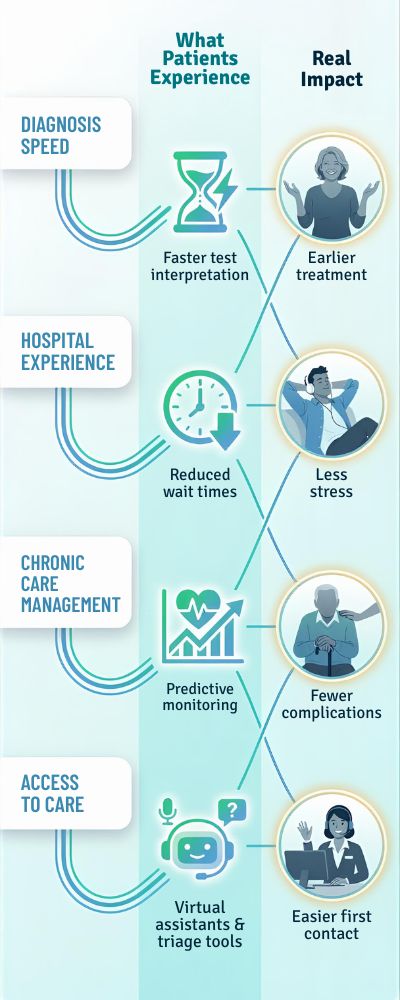

| Area of Care | What Patients Experience | Real Impact |
|---|---|---|
| Diagnosis Speed | Faster test interpretation | Earlier treatment |
| Hospital Experience | Reduced wait times | Less stress |
| Chronic Care Management | Predictive monitoring | Fewer complications |
| Access to Care | Virtual assistants & triage tools | Easier first contact |

Looks simple, doesn’t it? Reality is a tad messier.

But It’s Not Equal for Everyone

This is where it gets a little awkward. Not all patients are reaping the same benefits.

Some hospitals are already employing AI. Others are just starting to implement fundamental digital solutions. The result? Your care can greatly depend on where you are, who you’re seeing, and even what’s wrong with you.

This NCBI study on digital health disparities shows how access to AI-supported care differs greatly between regions and communities.

So yes, AI is helping, but not in a completely fair, uniform manner. At least, not yet.

The Trust Question (From the Patient Side)

I find it fascinating that patients often don’t know when AI is being used, and when they do, their responses vary.

Some are interested and even positive. Others, well, not so much. And rightfully so. If you’re being diagnosed and/or treated, you want to feel like there’s a human involved, not just a computer in the background.

Trust requires time. Probably more time than the actual technology.

So… Is It Making a Difference?

Yes, it is. Just not in the loud, clear way most expected.

You can’t point to one definitive moment and say, “See? Look how different it is!” All you can see is a handful of subtle improvements: a bit quicker here, a tad sooner there, a slightly more pleasant patient experience overall.

Perhaps this is the real answer: AI is not drastically changing the world of healthcare for patients overnight. But it is moving the needle.

Slowly. Inconsistently. Yet still, significantly.

11. The future of AI in health care

Most of the headlines about the future of AI in healthcare are extremes: AI either does everything, or it fails. The truth is likely somewhere in between. Not quite as glamorous, but still impactful.

A PwC report on AI’s economic benefits estimates that AI could add more than $15 trillion to the global economy by 2030, with a big chunk of that coming from healthcare. That’s a big number, but it doesn’t necessarily mean that hospitals are going to morph into Star Wars-esque medical wards.

In reality, we’re more likely to see incremental change. AI becoming part of more healthcare processes, gradually.

From Reactive to Predictive (And Maybe Preventive)

Today, much of healthcare is reactive: reacting to a condition once it appears. The next generation of care is going to be about predicting those conditions before they happen. And maybe even preventing them altogether.

AI algorithms are already being used to predict patient deterioration, the risk of disease, and hospital readmissions. In one Nature Digital Medicine study, predictive models detected patient deterioration hours ahead of traditional methods.

Take that idea out a few years, and you can start to see a trend: away from “treating disease,” and toward “preventing it in the first place.”

That’s the dream. It won’t be easy.

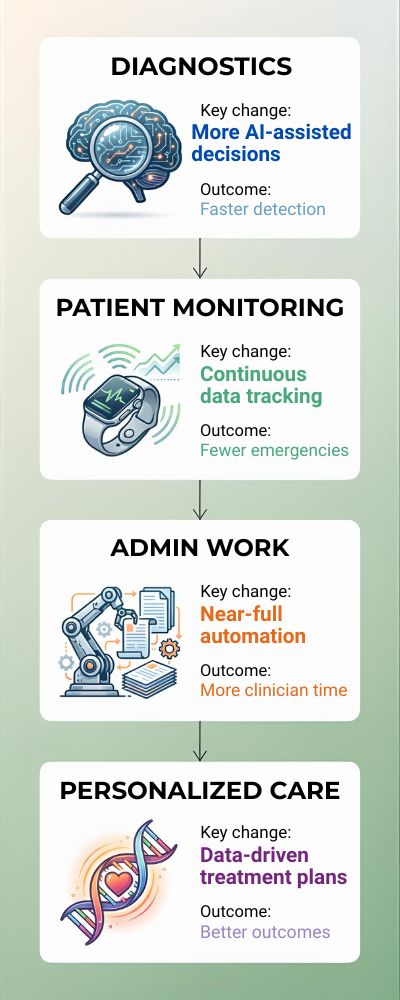

| Area | What’s Changing | Likely Outcome |
|---|---|---|
| Diagnostics | More AI-assisted decisions | Faster, earlier detection |
| Patient Monitoring | Continuous data tracking | Fewer emergency events |
| Admin Work | Near-full automation | More clinician time with patients |
| Personalized Care | Data-driven treatment plans | Better individual outcomes |

The Rise of “Background AI”

One thing that we don’t talk about enough (possibly because it’s boring) is the idea that AI in healthcare is going to become… invisible. Not more robots in the hallway. Not more screens. Just stuff that happens in the background. Paperwork that gets sorted, weird results that get flagged, connections between things that no one has time to connect on a busy day.

Kind of like electricity. You don’t walk into a room and think, “Wow. Electricity.” You just flip a switch and expect that it will work. And only if it doesn’t work do you realize how much you depend on it. I think that a lot of this is going to be like that. Less, “Look! AI!” and more, “Things seem to work better now.”

The Human Role Isn’t Going Anywhere

Then there’s the question that’s always floating in the air. What about the doctors? I don’t think they’re going away. In fact, I think their jobs are going to become more human, not less. More talking. More decision making. More navigating grey areas that aren’t clearly defined.

The WHO digital health report says that AI works best when it supports clinicians rather than replaces them. I think that’s right. Because no matter how good this stuff gets, healthcare isn’t just data. It’s humans. And humans are irrational and emotional and occasionally contradictory… you can’t reduce that to an algorithm. Not in any meaningful way, at any rate.

What Should We Expect?

Probably not a huge pivot. Probably just a drift. Some things will work great and stick. Other things will get a lot of attention (possibly some media coverage) and then quietly go away when they don’t work so great. That happens a lot more than people like to admit.

If I had to imagine it, it wouldn’t be a big Hollywood moment where the machines suddenly get smart and change everything. It would be little things adding up over time. Systems getting a little better. Decisions getting a little faster. Work getting a little easier. None of it worth writing a headline about on its own.

But adding up to something over time. It feels less like a wave that washes over us, and more like the tide coming in. You don’t notice it from one second to the next… but over time, everything changes.

12. Will AI replace physicians?

This is a popular question. I hear it at every medical conference I attend. I see it in the headlines. I get it from my friends at dinner parties. “So… are doctors going to get automated?”

I understand the question. AI has made tremendous progress. It can interpret imaging. It can make diagnoses. It can even pass some medical board exams. A recent paper published in JAMA found that AI performed at or above the passing level across a range of medical knowledge tasks. Pretty scary, right?

But acing a test is one thing. Taking care of a real-life patient is another.

The Limitations of AI

AI is great at many things. It can recognize patterns, process information, and find outliers. If you ask it to do something discrete and give it the data it needs, it will probably do better than most humans.

But medicine is not a discrete task. It’s a mess. Patients don’t follow a script. Their symptoms blur together. Their stories don’t always make sense. They feel anxious and scared.

According to a recent analysis from McKinsey, AI can handle about 10 to 20% of physician tasks. These are largely administrative and routine. This still leaves 80 to 90% of care that requires a human touch.

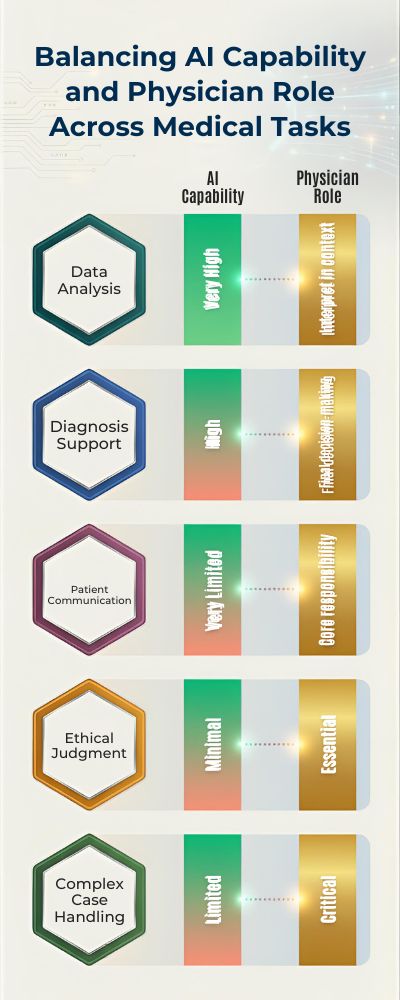

| Task Type | AI Capability | Physician Role |
|---|---|---|
| Data Analysis | Very High | Interpret in context |
| Diagnosis Support | High | Final decision-making |
| Patient Communication | Very Limited | Core responsibility |
| Ethical Judgment | Minimal | Essential |
| Complex Case Handling | Limited | Critical |

You see the distinction clearly in a table. In practice, it gets a bit fuzzy.

The Part AI Struggles With (And Will for the Foreseeable Future)

Now try explaining a complicated result to a patient. Or helping them decide on a course of treatment. Or… just being present with them when the diagnosis is bad news.

AI can’t really do that. At least not in a way that feels authentic.

A JAMA Network Open study found that patients tend to trust human physicians more when it comes to risky or uncertain decisions. That’s not just a matter of information; it’s a matter of empathy.

Healthcare remains an essentially human endeavor.

So… Replace or Reshape?

If I had to choose, I’d go with reshape.

AI is already shifting the work physicians do: less paperwork, better support with diagnoses, possibly even enhanced decision-making when things get tricky. But outright replacing them? I don’t see that in the near future.

It’s more like having a highly skilled helper. Someone who’s really fast. Sometimes frighteningly smart. Sometimes infuriating. But still someone who requires oversight.

Perhaps that’s the future we’re evolving toward. Not doctors or AI, but doctors and AI muddling through.

13. Wearable Devices Combined with AI Are Changing Preventive Care

Smartwatches and health trackers are doing more than counting steps. With AI, they can detect irregular heart rhythms and other warning signs. This brings healthcare into everyday life. Prevention is becoming more proactive.

14. AI Can Analyze Thousands of Medical Records in Seconds

What would take humans days or weeks can now be done in seconds. AI can scan patient histories, lab results, and notes quickly. This helps doctors make more informed decisions. It’s like having a super-fast research assistant.

15. Smaller Clinics Are Slower to Adopt AI Than Large Hospitals

Big hospitals tend to lead when it comes to new tech. Smaller clinics often face budget and resource challenges. This creates a gap in adoption. Over time, more affordable tools may close that gap.

16. AI Can Help Personalize Treatment Plans for Individual Patients

No two patients are exactly the same. AI can analyze genetic data, lifestyle, and medical history. This helps tailor treatments more precisely. Personalized medicine is becoming more realistic.

17. Many Doctors Are Still Cautious About Fully Trusting AI

Even with all the progress, trust is still an issue. Doctors want to understand how AI reaches its conclusions. Transparency matters in healthcare decisions. Adoption is growing, but cautiously.

18. AI Is Being Used to Manage Hospital Bed Availability More Efficiently

Hospitals often struggle with capacity planning. AI can predict patient flow and optimize bed usage. This improves overall efficiency. It’s another example of AI working behind the scenes.

19. AI Can Help Detect Mental Health Issues Through Speech and Behavior Patterns

Some tools analyze voice tone, word choice, and behavior data. They can identify early signs of depression or anxiety. This opens new possibilities for early intervention. Mental health care is becoming more data-driven.

20. AI Is Helping Reduce Waiting Times in Emergency Departments

By prioritizing patients based on urgency, AI improves triage systems. This ensures critical cases are handled faster. It also streamlines patient flow. Shorter waits improve overall patient experience.

21. Data Privacy Remains One of the Biggest Concerns in AI Healthcare

Healthcare data is highly sensitive. Many people worry about how their data is used. Regulations and safeguards are evolving to address this. Trust will be key for wider adoption.

22. AI Can Assist Surgeons During Procedures

Some systems provide real-time guidance during surgery. They analyze data and highlight critical areas. This supports precision and reduces risk. It’s like having a digital assistant in the operating room.

23. AI Adoption Is Growing Faster in Developed Countries

Regions with strong healthcare infrastructure are leading adoption. Investment, data availability, and policy support play a role. Developing regions are catching up slowly. Access remains uneven globally.

24. AI Can Help Identify Population Health Trends

Public health agencies use AI to track disease patterns. It can spot outbreaks or trends early. This helps guide policy and response. It’s especially useful during global health crises.

25. AI Tools Are Becoming More User-Friendly for Medical Staff

Early systems were complex and hard to use. Newer tools focus on simplicity and integration. This makes adoption easier for healthcare workers. Usability is improving quickly.

26. Patients Are Becoming More Open to AI in Their Care

At first, many people were skeptical. Now, acceptance is growing as benefits become clearer. Faster service and better outcomes build trust. Patients are gradually warming up to AI involvement.

Conclusion

At the end of the day, there’s no clear answer here, and that might be the answer. AI is no panacea for our health care woes, but neither is it pure fantasy. It’s something in between, something that’s going to quietly make things a bit better, then a bit better again, and a bit better once more.

Some of that will be exciting; some of that will be boring; some of that will be painful. But maybe the future of medicine isn’t about AI being spectacular. Maybe it’s about AI being ordinary, a subtle enabler that frees up care providers to get on with what they do best. Not flawless. Not extraordinary. Just… better.