The internet is no longer just a medium for information. It is increasingly a tool for creating reality. And deepfakes are a big part of this trend.

When we first encountered deepfakes, they seemed somewhat intriguing, albeit a bit creepy. But now, we’re seeing deepfakes in phishing attacks, political campaigns, social media, and even in daily interactions, and it is all happening extremely fast. This is not a scare piece, nor is it an attempt to lull you into complacency.

The statistics are undeniable, with skyrocketing rates of attacks, actual dollar losses, demographic shifts in victims, and an uncertain future. So if you have any interest in understanding the scope of the deepfake threat and its trajectory, you’re reading the right article. Let’s break it down.

1. Deepfake explosion: how fast is synthetic media growing?

The growth curve nobody saw coming

A few years ago, “deepfake” sounded like some niche Reddit experiment. Now? It’s basically the wild west of AI content. And the numbers back that up in a way that’s… honestly a bit unsettling.

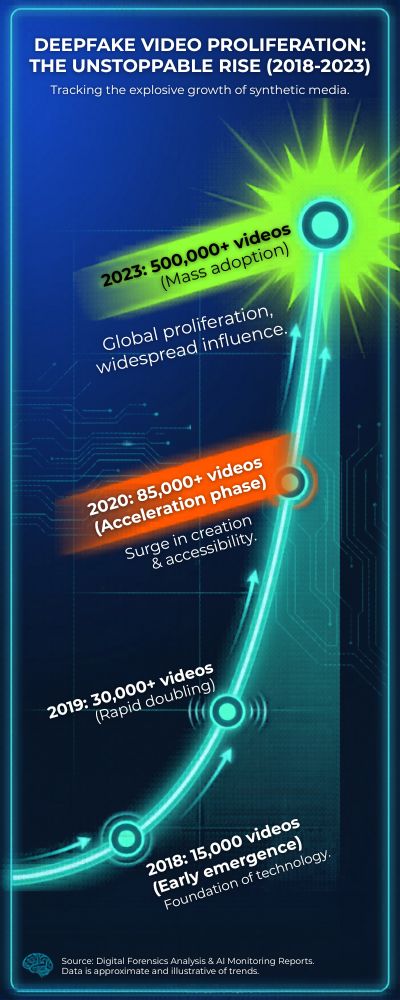

According to Sensity AI, the number of deepfake videos online doubled roughly every 6–12 months between 2019 and 2023, with more than 500,000 deepfake videos circulating by 2023. That’s not linear growth; it’s exponential, the kind that sneaks up on you and then suddenly dominates everything.

And here’s where it gets more intense. A report by Sumsub found that deepfake fraud increased by over 700% globally between 2022 and 2023. Seven hundred percent. That’s not growth; that’s a surge.

You kind of have to pause and ask: are we even tracking this properly anymore? Or are we already behind?

| Year | Estimated Deepfake Count | Growth Trend |
|---|---|---|
| 2018 | ~15,000 videos | Early emergence |
| 2019 | ~30,000+ videos | Rapid doubling |
| 2020 | ~85,000+ videos | Acceleration phase |
| 2023 | 500,000+ videos | Mass adoption |
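If you want to sanity-check the “doubling every 6–12 months” claim against the table above, here’s a minimal Python sketch. It assumes smooth exponential growth between the 2018 and 2023 rows, which is a simplification, and `doubling_time_years` is just a name made up for illustration.

```python
import math


def doubling_time_years(start_count, end_count, years_elapsed):
    """Years needed to double, assuming smooth exponential growth."""
    growth_factor = end_count / start_count
    return years_elapsed * math.log(2) / math.log(growth_factor)


# Approximate figures taken from the 2018 and 2023 rows of the table.
months = 12 * doubling_time_years(15_000, 500_000, 2023 - 2018)
print(f"Implied doubling time: about {months:.1f} months")
```

On those rough figures the implied doubling time comes out at just under 12 months, which sits at the slow end of the quoted range.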

Why is it expanding so rapidly? (The answer: not only because of the tech)

It’s easy to attribute it to AI algorithms, and yes, face-swapping and voice cloning tools have become surprisingly powerful. But the real driver? Democratization. You don’t need a doctorate anymore. You need a computer, some time, and a YouTube tutorial.

Marketplaces and open source tools have democratized it to a level where creating a sophisticated deepfake is not a unique skill of an elite cyber-villain anymore. A report cited by Europol also states that the prices of high-quality deepfake productions have decreased while the quality has increased. Classic technology adage: it’s getting cheaper, faster, better. Usually not a good combination when it all happens at once.

The inconvenient truth: most of it isn’t benign

This is the part nobody likes to discuss. A large majority of deepfakes aren’t being used to experiment with fun new ideas or for movie special effects. Historically, according to research by Deeptrace Labs, more than 90% of all deepfake content has been non-consensual pornography.

If this single statistic doesn’t explain why we shouldn’t view deepfakes as a ‘cool new technology’, I don’t know what will. But financial fraud is quickly catching up. Voice cloning and CEO fraud, concepts that used to sound like science fiction, are now happening in real-life companies.

So… where does that leave us? If I’m being completely honest, the rate of growth itself isn’t the most concerning part. Technology always advances rapidly. What’s concerning is how quietly it scaled before most of us even noticed.

We’re at this in-between phase where deepfakes are all around us, yet detection, legislation and consumer awareness haven’t kept pace. And judging by the last few years, the growth curve isn’t going to slow down anytime soon. It’s just heating up.

2. Statistics: how many deepfakes exist on the internet today?

So… how many are there, exactly? Well, this is the part where it gets a bit fuzzy. You’d expect there to be a very clear, very precise answer. There isn’t. Deepfakes aren’t exactly filling out a census form going, “Hi, I’m a deepfake.”

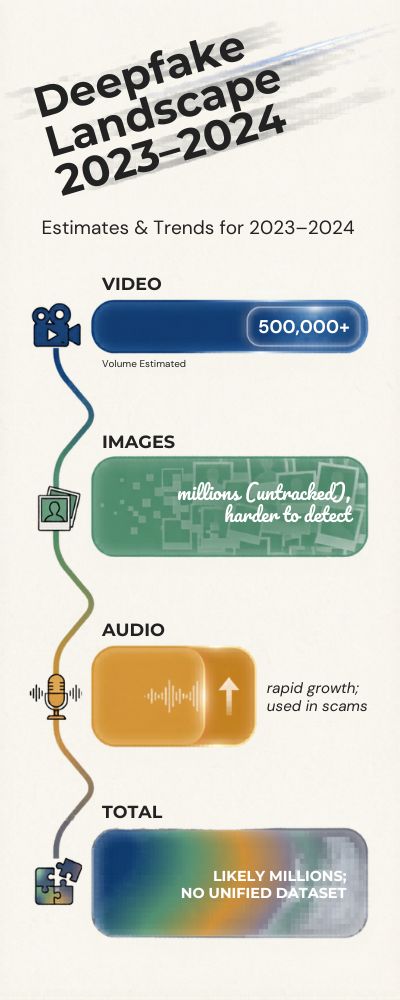

That said, there are some very good estimates that can give you a broad idea. As of 2023, according to Sensity AI, there were over 500,000 deepfake videos circulating around the web. Let me be clear: videos. Not deepfake images. Not deepfake audio. Just… videos. Also, this figure is probably wrong by now.

Estimated deepfake volume (by type)

| Type of Deepfake | Estimated Volume (2023–2024) | Notes |
|---|---|---|
| Video | 500,000+ | Most tracked category |
| Images | Millions (untracked) | Harder to detect/measure |
| Audio (voice) | Rapid growth | Used in scams |
| Total (all forms) | Likely in the millions | No unified dataset |

The secret multiplier effect

Now this is where things start to get a little crazy. The thing is, a deepfake doesn’t really count as “one piece of content.” It’s more like a piece of gossip: it gets out, it moves around, it mutates, it multiplies.

You’ve seen it with memes. Same meme, different captions, endless variations. Deepfakes are exactly the same… with higher stakes. Someone makes a video, someone else rerecords the audio, someone else subtitles it into a different language, someone else reuploads it with a different description, and then suddenly it’s everywhere, wearing different clothes.

What was originally one file somehow multiplies into tens, sometimes hundreds of variants in existence. It’s not even co-ordinated, most of the time. It just… happens.

The researchers quoted in the Deeptrace Labs report mention this multiplier effect, and to be honest, it makes the whole exercise of counting deepfakes feel a bit like trying to count the raindrops in a rainstorm. You can try, but you’re never going to get them all.

Which kind of inverts the question, in a way. It’s not so much “how many are there?” any more, but “how many are in circulation right now, across all the platforms, and all the forums, and all the group chats?” And, well… that’s a very different question. With a very different answer. That is to say: we don’t really know.

Are we underestimating this? Almost certainly. Probably more than we think

I don’t like being alarmist about these things, but I’m not sure there’s any other way to put this: we’re underestimating. And probably by a wide margin.

A lot of deepfakes never make it to public platforms. They circulate in private Facebook groups, or on encrypted chat apps, or just among small communities of interest. And then there are AI-generated still images, which are even harder to keep track of. So the numbers we can see are probably just the tip of the iceberg.

When people say “hundreds of thousands”, that sounds like a big number. But in reality? We’re probably already into the millions, when you count all this in.

And then there’s the mildly infuriating fact that the tools to make deepfakes just keep getting faster, cheaper, and easier… so even if someone managed to count everything today (which, let’s be clear, they won’t), that number would be out of date almost immediately.

So what does actually matter here?

At some point, the question of “how many” just stops mattering.

What matters is the trend. And right now? The trend is upwards. And fast.

This isn’t a problem that’s contained any more. It’s not something sitting in a dark corner of the internet, waiting to be tidied away. It’s out in the wild. It’s spreading. It’s evolving. It’s adapting. It’s kind of like a virus, except what’s spreading is information, which sounds a bit science fiction, but also feels kind of true?

3. The demographics of deepfake victims: by gender, industry, and region

Who’s being targeted?

You might imagine that deepfakes are used to target celebrities and politicians. While that was mostly true in the past, it no longer is.

The harsh truth is that the base of those targeted has expanded quickly. Employees, journalists, and even students are now part of the landscape. This trend is illustrated by the numbers.

According to a report by Deeptrace Labs, more than 90% of all the deepfake content ever created targeted women, primarily in non-consensual pornography. That single statistic tells us much about the nature of this technology’s use and misuse.

This is not just a technology problem. This is a people problem.

Deepfake victims by gender

| Gender | Estimated Share of Victims | Context |
|---|---|---|
| Women | ~90–96% | Mostly explicit deepfakes |
| Men | ~4–10% | Often political or fraud-related |
| Non-binary | Limited data | Likely underreported |

Industry matters more than you’d think

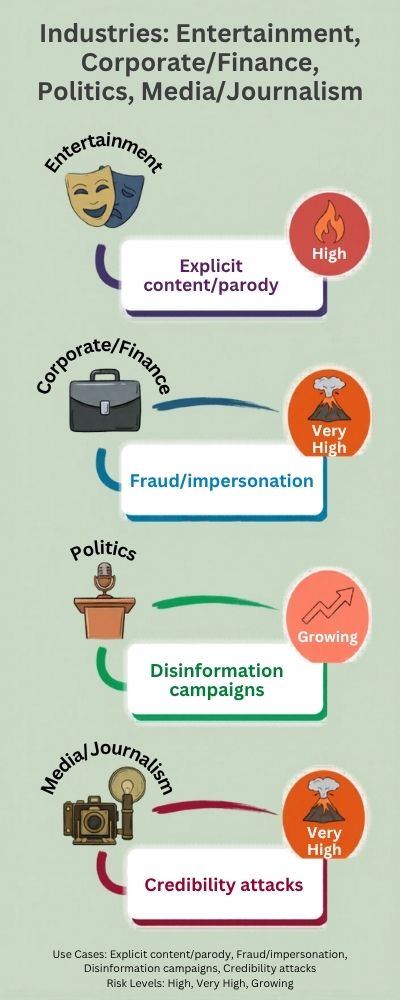

Turns out, it’s not just about who you are. Different industries are targeted for different reasons.

- Entertainment & influencers → visibility, large image databases

- Corporate executives → financial scams, impersonation

- Politics & journalism → misinformation, reputation attacks

Europol reports a significant increase in deepfake-enabled scams against businesses, often impersonating CEOs or senior staff using voice cloning. And that’s a different story altogether. Because it’s no longer about reputation. It’s about money. Jobs. Trust.

Deepfake targets by industry

| Industry | Common Use Case | Risk Level |
|---|---|---|
| Entertainment | Explicit content, parody | High |
| Corporate/Finance | Fraud, impersonation | Very High |
| Politics | Disinformation campaigns | High |
| Media/Journalism | Credibility attacks | Growing |

Location: where is this happening the most?

You would think that the distribution of deepfakes would be confined to a few areas, but really it’s quite global. However, there are a few hotspots.

According to reports by Sumsub, deepfake fraud cases have notably risen in places such as North America, Europe, and Asia, where digital banking and remote identity verification are more prominent.

This is logical: the more digital a system, the easier it is to manipulate identity.

| Region | Key Trend |
|---|---|
| North America | Rise in financial fraud cases |
| Europe | Regulatory response increasing |
| Asia-Pacific | Rapid growth in synthetic media |
| Global | Cross-border misuse expanding |

The part that sticks with you

The reason this subject is tough isn’t just the statistics, it’s what the statistics represent.

It’s one thing to see “90% of victims are women.” It’s another to stop and consider what that looks like in practice. A loss of agency. A loss of reputation. A loss of emotional well-being. Sometimes, all three.

And if there’s one thing to take away from this article, it’s this: deepfakes don’t discriminate. They prey on opportunity. On exposure. On weakness.

And if we’re being honest, that’s the real reason they’re so hard to combat.

4. Percentage of deepfakes used for fraud, scams and misinformation

Not all deepfakes are created equal

You might think of deepfakes as a tool for political control or viral fake speeches. But that’s only half the truth. In reality, if you take a step back, the most common application historically has been something very different.

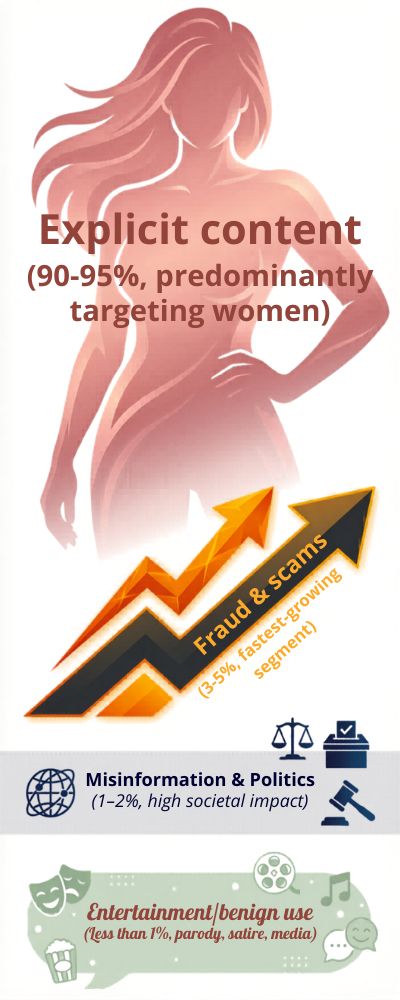

A report by Deeptrace Labs concluded that 90 to 95% of the deepfake content circulating online has been explicit and non-consensual in nature. This means there’s a smaller (albeit increasing) share left over for things like fraud, scams, and misinformation.

The thing is: even though that share is smaller, the damage tends to be far more severe.

Estimated breakdown of deepfake usage

| Category | Estimated Share | Notes |
|---|---|---|
| Explicit content | 90–95% | Predominantly targeting women |
| Fraud & scams | 3–5% | Fastest-growing segment |
| Misinformation & politics | 1–2% | High societal impact |
| Entertainment/benign use | <1% | Parody, satire, media |

A false proportion

Deceptive deepfakes are a minuscule proportion of all deepfakes. They are also the most destructive.

Fraud

Proportion of deepfakes: a few percent

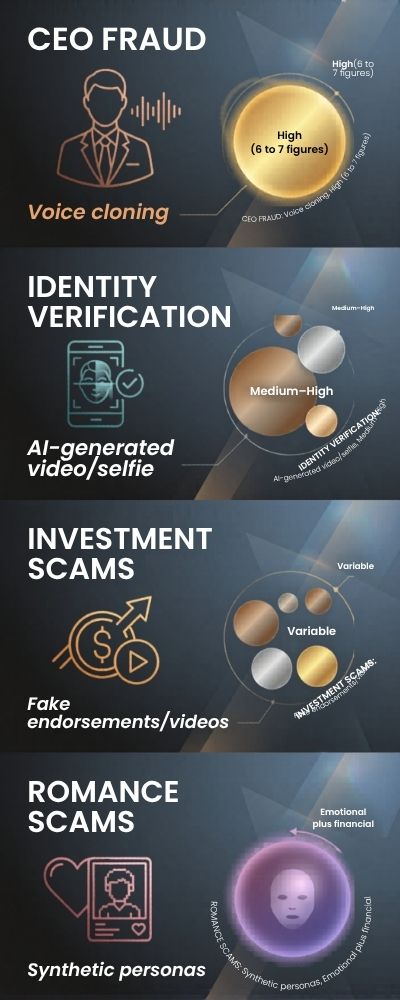

Something doesn’t feel right here. The proportion of deepfakes that are used for fraud is in the low single digits, but their impact is enormous. According to Sumsub, deepfake fraud cases increased by over 700% globally between 2022 and 2023. This disproportionate growth implies that fraud is not a niche application of deepfakes; it’s a core use case.

We’re talking voice phishing, spoofed video calls from “CEOs”, circumvention of identity verification services. You don’t see them as much as the memes, but they’re arguably worse. You may not notice, but someone is falling victim.

Misinformation

Proportion of deepfakes: in the single digits

But what about misinformation? Somewhat surprisingly, deepfakes used for misinformation remain a relatively small proportion of all deepfakes. Europol has reported that the dissemination of deepfakes for political manipulation and disinformation is increasing, particularly during election periods, but from a low base.

However… a single deepfake has the potential to deceive millions of people. So in a way, the proportion is not even that relevant. A lit match may be small, but all it needs is the right fuel.

| Year | Fraud Growth | Misinformation Trend |
|---|---|---|
| 2020 | Low | Emerging |
| 2022 | Moderate | Increasing |
| 2023 | +700% spike | Significant concern |
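One thing that’s easy to misread: a 700% increase is not “7 times as many”. It means 8 times the starting level. Here’s a quick sketch of the arithmetic, where the baseline of 1,000 cases is purely hypothetical and not a figure from Sumsub, and the helper name is made up for illustration.

```python
def multiplier_from_percent_increase(percent_increase):
    """Convert a percent increase into a 'times the baseline' multiplier."""
    return 1 + percent_increase / 100


# Hypothetical baseline, chosen only to make the arithmetic visible.
baseline_cases = 1_000
cases_after = baseline_cases * multiplier_from_percent_increase(700)
print(f"{baseline_cases} cases -> {cases_after:.0f} cases")
```

So under that made-up baseline, 1,000 cases one year would become 8,000 the next.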

What does it all mean?

When measured as a percentage, it’s clear that fraud/misinformation isn’t the biggest problem. But that’s a bit of a cop-out.

The trend is changing. Quickly.

Adult content still makes up the bulk of deepfake content, but fraud/scams are growing. Misinformation is the riskiest. Low frequency, high impact.

Perhaps the more relevant question isn’t “how much of this is bad?” but “which of these is getting worse over time?”

The answer seems fairly obvious.

5. Annual deepfake scam losses: how much money is being swindled?

The cost no one wants to talk about

Nothing brings a trend to the forefront like financial loss, does it? You can dismiss it, argue about it, or scroll past it, but when cash starts vanishing, suddenly people listen.

Deepfake scams have slowly reached that tipping point.

The FBI Internet Crime Complaint Center (IC3) reports that companies worldwide are losing billions of dollars each year to impersonation scams, many of which now use AI-generated audio and video. Technically, not all of that is “deepfake,” but the distinction is increasingly vague.

And frankly, that vagueness is part of the issue.

Estimated financial losses attributed to deepfake-enabled scams

| Year | Estimated Losses | Context |
|---|---|---|
| 2019 | ~$1–2 billion | Early AI-assisted fraud cases |
| 2021 | ~$5+ billion | Growth in business email compromise |
| 2023 | $10+ billion | AI-enhanced impersonation rising |
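To put the table in annual terms, here’s a rough sketch of the implied compound annual growth rate. Big caveats: the table’s figures are loose estimates, I’m assuming $1.5 billion as the midpoint of the 2019 range and treating $10 billion as the 2023 figure even though the table says “10+”, and `annual_growth_rate` is an illustrative helper, not anyone’s official method.

```python
def annual_growth_rate(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


# Assumed inputs (in billions of dollars): midpoint of the 2019 range
# and the lower bound of the 2023 estimate.
rate = annual_growth_rate(1.5, 10.0, 2023 - 2019)
print(f"Implied growth: roughly {rate:.0%} per year")
```

On those assumptions, losses grew by roughly 60% a year, and since the 2023 figure is a lower bound, the real rate may well be higher.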

Real-world examples that make this sting more

Statistics are one thing, but specific examples leave an impression. There was a highly publicized story, written about in The Wall Street Journal, of criminals using AI voice cloning to impersonate a CEO and defraud a company out of $243,000. Not millions. Not billions.

A single phone call, convincing enough to suspend disbelief. And that’s the real frightening aspect here: these attacks don’t have to be about volume, they can just be about plausibility. You don’t need to convince everyone. You just need to convince one person on an off day.

Why the losses are growing so rapidly

It’s not just that the number of attacks is growing, it’s that they’re evolving. According to reports by Sumsub, deepfake fraud cases grew by more than 700% from 2022 to 2023. Whenever we see that kind of growth, it usually means one thing: the bad guys have found something that works.

And deepfakes? They reduce friction. They make the attacks feel personal. Urgent. Real. You can delete an email. A phone call from your “boss” telling you to take immediate action? That’s a different story altogether.

Common deepfake scam types and impact

| Scam Type | Method Used | Financial Impact |
|---|---|---|
| CEO Fraud | Voice cloning | High (6–7 figures) |
| Identity Verification | AI-generated video/selfie | Medium–High |
| Investment Scams | Fake endorsements/videos | Variable |
| Romance Scams | Synthetic personas | Emotional + financial |

So… how much are we really losing?

The honest answer is we don’t really know. At least not yet. There are too many unreported incidents. Too many scams lumped under more general types of fraud.

But, taken as a whole, we can say this much: deepfake scams are likely already adding to the billions of dollars being lost globally each year. And the trend is increasing.

And now here’s the scary part. This is just the beginning.

The tech is getting better. The barriers are falling away. The scams are getting more convincing.

Which may mean the real question here isn’t “how much are we losing now?” but “how much are we going to lose next?”

6. Election interference and politics: the deepfake statistics that are keeping governments up at night

It only takes one convincing fake

There’s always been spin in politics, half-truths, and… “alternative facts.” But deepfakes? That’s a whole different story.

Now you’re not just questioning what you’re told, you’re questioning what you’re seeing and hearing.

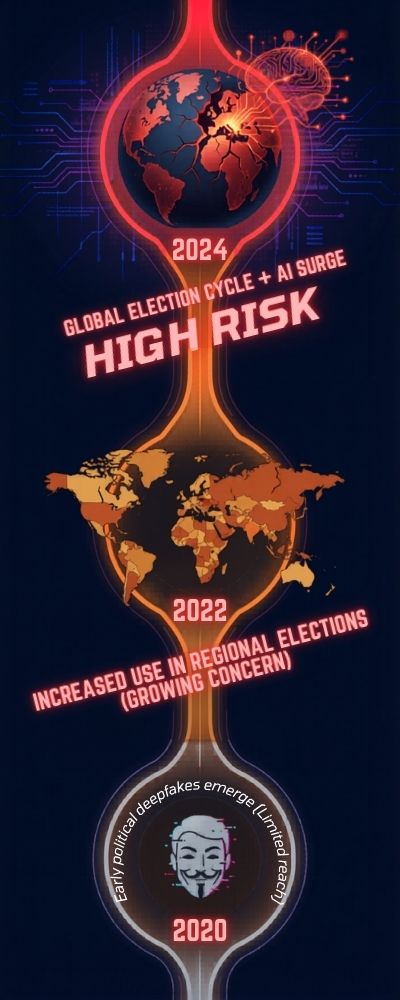

The World Economic Forum considers AI-generated misinformation, including deepfakes, one of the biggest short-term global risks, particularly in relation to elections. That’s saying something. Governments aren’t just a little worried about this, they’re sweating.

And let’s be honest, who can blame them?

How often are we seeing deepfakes in politics?

| Year | Notable Trend | Impact |
|---|---|---|
| 2020 | Early political deepfakes emerge | Limited reach |
| 2022 | Increased use in regional elections | Growing concern |
| 2024 | Global election cycle + AI surge | High risk |

Low volume, high damage

The weird thing is, political deepfakes account for a tiny portion of deepfake content.

But the impact? Entirely out of proportion.

A Europol report states that a single well-timed deepfake can impact public opinion, sway an election, or undermine trust in institutions.

Pause for a moment. One video. One clip, released at the wrong (or right) moment. That’s all it takes to sow a seed of doubt.

And once that seed is planted, it’s hard to reverse.

Why elections are especially vulnerable

Elections are all about timing, trust, and emotion. Deepfakes tick all three boxes.

- Timing: fake content is released days or hours before voting

- Trust: credible imagery makes people question what’s real and what’s not

- Emotion: outrage travels way faster than the truth

A Brookings Institution article says voters can’t always tell what’s real and what’s synthetic, especially if the content confirms their beliefs.

Let’s be real. That’s human nature. We tend to believe what we want to believe, not necessarily what we should believe.

Public perception vs reality

| Factor | Observation |
|---|---|
| Ability to detect deepfakes | Generally low among public |
| Trust in video evidence | Still relatively high |
| Speed of misinformation spread | Faster than corrections |

The deeper issue isn’t disinformation

We don’t talk about this enough: deepfakes don’t just spread disinformation, they can also make accurate information look like disinformation. There’s even a term for it, “the liar’s dividend,” which is what happens when politicians can dismiss authentic footage as AI-manipulated. Truth itself can be discounted. That makes any policymaker nervous.

What does this mean for us? If we step back, the problem here isn’t technological. It’s about trust. And trust is a brittle thing: once it breaks, it doesn’t heal easily. Various governments are scrambling to regulate the technology, build detection tools, and run public education campaigns, but it feels like they’re already behind the curve.

And maybe that’s what sticks with you. Not that deepfakes are here, but that they arrived quicker than our defenses. It’s enough to make you quietly, slightly uncomfortably, wonder if we’re prepared for the next elections.

7. Detection rates for deepfakes: how accurate are AI deepfake detection tools in 2025?

So… can we detect a deepfake?

This is a question I keep coming back to. I think I understand why: if deepfakes are easy to detect, maybe this whole thing feels a little more manageable.

The truth is… it’s complicated. Tools are getting pretty good at detecting deepfakes, until they’re not.

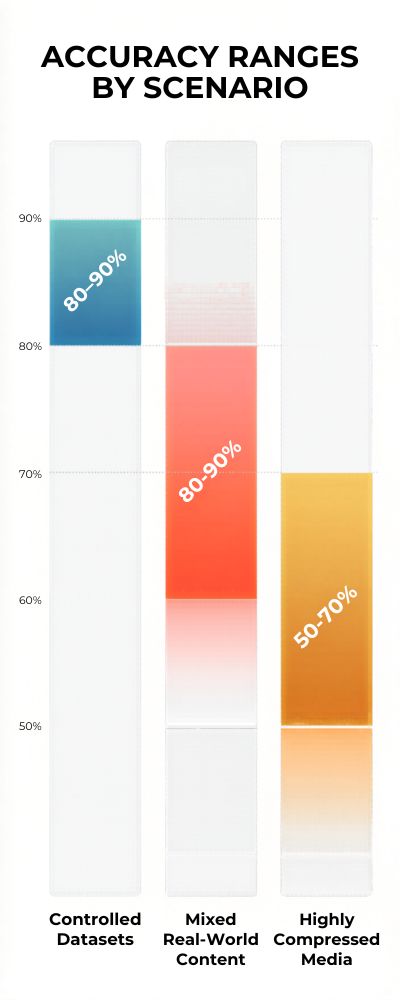

A recent study from Facebook’s Deepfake Detection Challenge (DFDC) found that the winning models had an accuracy of 65 to 90% on the challenge dataset. This seems pretty good, but the thing to note here is that the datasets were clean.

In reality, things get a lot murkier.

Detection accuracy: lab vs real world

| Scenario | Accuracy Range |
|---|---|
| Controlled datasets | 80–90% |
| Mixed real-world content | 60–80% |
| Highly compressed media | 50–70% |

The arms race that cannot be paused

Detection improves, generation improves. The cat-and-mouse game continues, but this time, both the cat and mouse are AI. A study by NIST found that most detection models are not robust against minor perturbations of a deepfake, such as compression, resizing, and filtering.

These are exactly the things that commonly happen to a video when it is shared online. So even a good detector can lose accuracy once the video has gone through a few cycles of reuploads.

False positives and why they are more important than you think

We have been looking at the problem from one angle so far. The other side of it is false positives: incorrectly classifying a genuine video as a deepfake. That would be problematic, wouldn’t it, if a real video of a person were labeled a deepfake?

The person in question would lose credibility, there would be a lot of confusion, and mistrust would spread. The Brookings Institution mentions that false positives remain a problem, particularly with political content. So now we have two issues: we cannot detect every deepfake, and we cannot fully trust the detection models.
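Here’s why false positives bite harder than the headline accuracy numbers suggest. This is a minimal sketch using Bayes’ rule with made-up inputs: I’m assuming 1 in 100 videos checked is actually a deepfake, and that the detector is right 90% of the time on both fakes and genuine videos. Neither number comes from the studies mentioned here, and `flag_precision` is a hypothetical helper.

```python
def flag_precision(prevalence, sensitivity, specificity):
    """Share of flagged videos that really are deepfakes (Bayes' rule)."""
    true_positives = prevalence * sensitivity
    false_positives = (1 - prevalence) * (1 - specificity)
    return true_positives / (true_positives + false_positives)


# Hypothetical inputs: 1% of videos are deepfakes, and the detector is
# 90% accurate on fakes (sensitivity) and on genuine videos (specificity).
precision = flag_precision(prevalence=0.01, sensitivity=0.90, specificity=0.90)
print(f"Flagged videos that are actually fake: {precision:.0%}")
```

Under those assumptions only about 8% of flagged videos are really deepfakes. The rest are genuine videos caught by mistake, simply because real videos vastly outnumber fake ones.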

| Weakness | Why it matters |
|---|---|
| Compression artifacts | Reduces detection accuracy |
| New AI models | Outpace existing detectors |
| Cross-platform sharing | Alters original media quality |
| Adversarial tweaks | Designed to fool detection tools |

So… are we winning or losing? To be frank, I’m not sure that we’re really doing either. The detection tools are improving, no doubt. Some of them are excellent. But they’re not infallible, and that’s the bit I think people are forgetting. This isn’t a solved problem, it’s an arms race.

If you’re holding out for a world where a tool can say this is “real” or “fake” with 100% confidence… we’re not there yet. And we may not be for some time. That leaves us in the mildly uncomfortable place where tech can help… but human discernment has a far more prominent part to play than we would like to admit.

8. Where are all the deepfakes? Social media breakdown

They’re not where you expect them

You might assume that deepfakes are most commonly shared on large and shiny platforms like Instagram or YouTube, but you’d be missing out on a whole chunk of them.

These things also thrive in the darker alleyways of the internet: in forums, smaller platforms, private groups. They might not be front-page content, but they’re abundant nonetheless.

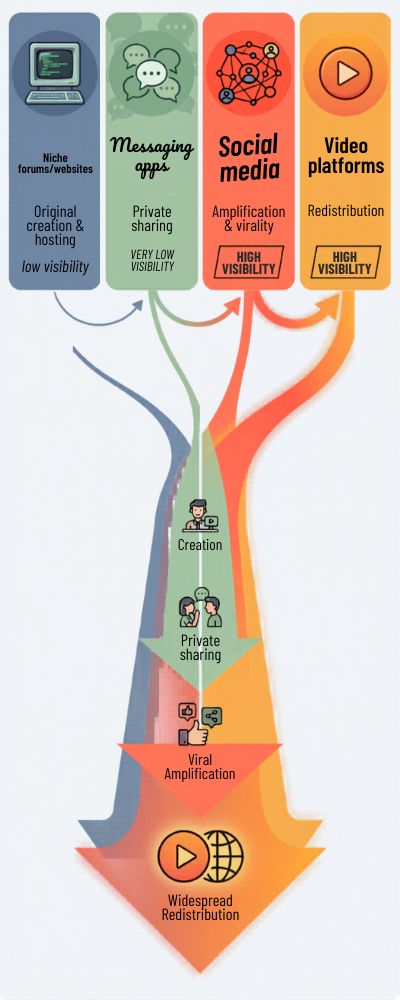

According to research by Sensity AI, a large percentage of deepfake content is first shared on niche websites and forums, before being shared on larger social media platforms. What you see on the front page is often the second or third sharing of the content.

It’s like a rumor that starts in one group chat, before spreading like wildfire.

| Platform Type | Role in Deepfake Spread | Visibility |
|---|---|---|
| Niche forums/websites | Original creation & hosting | Low |
| Messaging apps | Private sharing | Very low |
| Social media | Amplification & virality | High |
| Video platforms | Redistribution | High |

Platforms like TikTok, X (formerly Twitter), and Reddit are important amplifiers. If a deepfake makes it onto those platforms, it can rack up views fast before it is even challenged.

The World Economic Forum says that AI-generated misinformation spreads faster and reaches wider audiences on social platforms than traditional content, thanks to algorithmic amplification. No surprise there. Algorithms reward engagement, and deepfakes are designed to engage.

| Platform | Typical Use Case | Risk Level |
|---|---|---|
| TikTok | Viral short-form content | High |
| X (Twitter) | News & political discourse | High |
| Reddit | Community-driven sharing | Medium–High |
| YouTube | Longer-form distribution | Medium |

The hidden layer: private sharing

This is where we don’t pay enough attention. A recent Europol report says that increasingly, deepfakes are being shared via private messaging apps and encrypted channels.

That means many deepfakes don’t actually go “viral.” They just spread through networks. And that’s perhaps even more difficult to handle. You can’t moderate what you can’t see.

So where are they? Everywhere… just not always out in the open. Some are in public feeds, trying to get as many clicks as possible. Others are in private channels, passed quietly from person to person.

And perhaps that’s the strangest thing about deepfakes. They don’t need a platform. They roam. They move to wherever they find attention… or weakness. So perhaps the real question shouldn’t be “where are they?” It should be “where haven’t they been yet?”

9. How to make a deepfake: tool and cost accessibility stats

It’s easier than you think

I think there’s this idea circulating that you need to be some kind of super hacker to make a deepfake. That’s what you used to need. Not anymore.

Now? The barrier to entry has fallen… significantly.

As Europol writes, “Deepfake creation tools have become more accessible, requiring low technical skills in many cases.” In other words, you don’t need to be an expert. You just need time and curiosity. And maybe a decent internet connection.

Deepfake creation: then vs now

| Factor | 2018–2019 | 2024–2025 |
|---|---|---|
| Skill required | Advanced (coding needed) | Beginner–Intermediate |
| Time to create | Days–weeks | Minutes–hours |
| Cost | High | Low or free |
| Tools availability | Limited | Widely available |

What tools are people actually using?

Well, this is where things get a little uncomfortable. Not because the tools are bad. They’re just really accessible.

There are open-source tools, mobile apps, and browser-based tools that allow users to swap faces or clone voices with a few clicks. Some are meant for entertainment. But they can be adapted.

A report cited by Sensity AI said the emergence of user-friendly deepfake tools has been one of the key factors in synthetic media gaining traction at such a rapid pace.

And that makes sense. Once something becomes easy, more people do it. That’s the internet.

Cost breakdown of creating a deepfake

| Method | Estimated Cost | Notes |
|---|---|---|
| Free apps/tools | $0 | Limited control, fast results |
| Open-source software | $0–$50 | Requires some setup |
| Paid AI platforms | $10–$100/month | Higher quality outputs |
| Professional services | $500+ | Custom, high-end production |

Time commitment: less than you think

This is something I didn’t expect. The idea that you need hours of footage and weeks of processing time is no longer true.

There are tools that will get you a decent result in less than an hour, depending on the length of the video. Voice impersonation? In some cases, you need just a few minutes of audio. That’s… a little creepy when you think about it.

So what’s the main lesson? It’s not that deepfakes are a thing, it’s that they’re democratized. When something is affordable, fast, and readily available, that’s a potent (and sometimes dangerous) mix. It reduces the barrier for everyone, not just those who want to use it for good.

And perhaps that’s what you should pause and reflect on for a minute. Technology didn’t just advance, it also proliferated. Which means the deepfake question isn’t “can people make deepfakes?” anymore. It’s “how many people have access to it?”

10. The future of deepfakes: 2030 and beyond (predictions and expert data)

This isn’t slowing down… it’s just getting started

If the last five years felt fast, the next five? Probably faster. That’s the general mood across most reports I’ve read, and honestly, it doesn’t feel like an overstatement.

The World Economic Forum claims that AI-generated misinformation will be one of the largest global risks in the coming years, as generative models improve and proliferate.

That’s the short-term view. By 2030, things look a lot different.

Projected growth of deepfakes into 2030

| Metric | 2023 Estimate | 2030 Projection |
|---|---|---|
| Total deepfakes | 500,000+ (videos) | Millions (all formats) |
| Fraud incidents | Rapid growth | Mainstream threat |
| Detection accuracy | 60–90% | Improved, but still imperfect |
| Creation time | Minutes–hours | Near real-time |

Deepfakes will get harder to detect

Something that I just can’t stop thinking about is this. Deepfakes are cool…but at the moment, you can kind of tell when they’re not real. Shaky lip syncing, bad lighting, an eerie feeling that something is off.

Those tells probably aren’t going to last much longer. Experts interviewed by the Brookings Institution believe that the next generation of deepfakes may be all but impossible to distinguish from reality, particularly as the technology improves in real-time rendering and customization. What happens when “the camera never lies” stops being true?

Future applications aren’t all bad

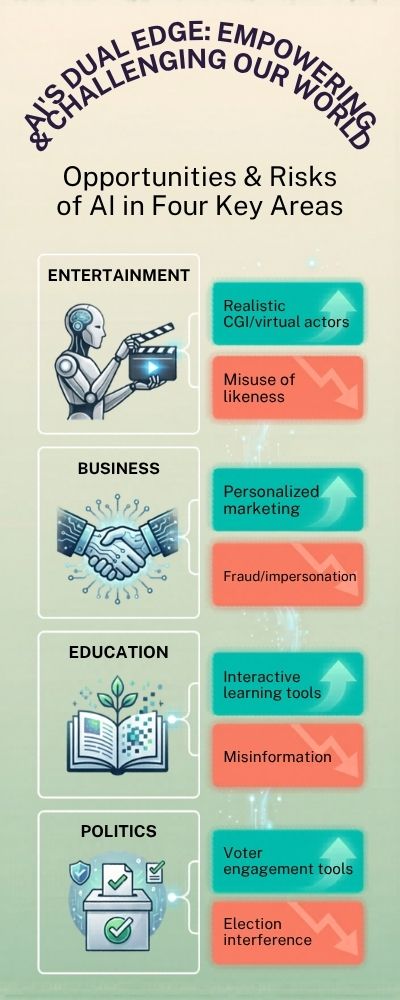

We’ve gone through some of the bad stuff, but it isn’t all bad. In fact, there are plenty of potential uses for deepfake technology (which is itself a subset of synthetic media, but whatever): film production, education, assistive technologies, personalised media.

McKinsey reckons that generative AI, which includes synthetic media, could add trillions of dollars to the economy in the coming years, particularly in industries such as marketing and entertainment. So, there is hope. It’s just, you know. Complicated.

Balancing future applications and risks

| Area | Opportunity | Risk |
|---|---|---|
| Entertainment | Realistic CGI, virtual actors | Misuse of likeness |
| Business | Personalized marketing | Fraud, impersonation |
| Education | Interactive learning tools | Misinformation |
| Politics | Voter engagement tools | Election interference |

So… what do we get by 2030? Well, in summary, I’d say deepfakes just become normal. Not in a sensational, headline-grabbing way. Just a bland, everyday kind of way. Like filters. Like airbrushed photos. Just part of the digital landscape.

The trade-off is that we get more creativity and more productivity, but also more uncertainty. And perhaps that’s what actually changes. Not that fake media exists. But the loss of certainty around what’s real. Weird, huh?

11. Year-over-year growth rate of deepfake content

Deepfake content has been growing exponentially, with some reports showing annual increases of over 500%. This rapid acceleration is driven by easier access to AI tools and computing power. The barrier to entry is now lower than ever. As a result, synthetic media is becoming a mainstream phenomenon rather than a niche experiment.

12. The percentage of deepfakes created using open-source tools

A significant portion of deepfakes are now produced using freely available, open-source software. This democratization has made advanced manipulation accessible to non-experts. Users no longer need deep technical knowledge to generate realistic results. This trend is fueling both creativity and misuse at scale.

13. Average time required to create a convincing deepfake

What once took days can now be done in hours, or even minutes. Improvements in AI models and hardware acceleration have drastically reduced production time. Some tools offer near real-time face-swapping capabilities. This speed makes deepfakes more practical for widespread use.

14. The rise of real-time deepfakes in video calls

Real-time deepfake technology is becoming increasingly viable in live video environments. This allows users to alter their appearance during calls or streams instantly. The implications for fraud and impersonation are significant. It also raises concerns about identity verification in remote settings.

15. Percentage of people unable to distinguish deepfakes from real content

Studies suggest that a large portion of viewers struggle to reliably identify deepfakes. Even when warned, many people fail to detect subtle manipulations. This highlights the growing sophistication of AI-generated media. It also underscores the importance of digital literacy.

16. Deepfake usage in entertainment and media production

Not all deepfakes are malicious. Many are used in film, TV, and advertising. Studios use them for de-aging actors or recreating performances. This can reduce production costs and expand creative possibilities. However, it also blurs the line between real and synthetic performances.

17. Corporate deepfake attacks: percentage of targeted companies

An increasing number of businesses report being targeted by deepfake scams. These often involve impersonating executives to authorize fraudulent transactions. The financial and reputational risks are growing. Companies are now investing more in verification protocols.

18. Average financial loss per deepfake scam incident

Deepfake scams can result in significant financial losses per incident. In some reported cases, losses have reached the hundreds of thousands or even millions. The precision of impersonation makes these scams particularly effective. Victims often realize too late that they’ve been deceived.

19. The role of deepfakes in identity theft cases

Deepfakes are becoming a tool in modern identity theft schemes. Criminals can create synthetic videos or audio to bypass verification systems. This adds a new layer of complexity to cybersecurity. Traditional identity checks are no longer always sufficient.

20. Growth of deepfake detection startups and funding

Investment in deepfake detection technology is rising rapidly. Startups focused on AI verification and authenticity tools are attracting significant funding. This reflects growing demand from governments and enterprises. The arms race between creation and detection is intensifying.

21. The percentage of deepfakes removed by major platforms

Social media platforms are increasingly moderating deepfake content. However, only a fraction is detected and removed proactively. Many deepfakes still circulate widely before being flagged. This lag creates challenges in limiting their impact.

22. Geographic hotspots for deepfake creation

Certain regions are emerging as hubs for deepfake production. This is often linked to access to technology and online communities. However, the global nature of the internet makes attribution difficult. Deepfakes are truly a borderless issue.

23. The impact of deepfakes on public trust in media

The rise of deepfakes is eroding trust in digital content. People are becoming more skeptical of what they see and hear online. This skepticism feeds what is sometimes called the “liar’s dividend”: because convincing fakes exist, even real content can be dismissed as fake.

24. Percentage of deepfakes involving synthetic audio vs video

While video deepfakes get the most attention, synthetic audio is also growing rapidly. Voice cloning technology is now highly accessible. In some cases, audio deepfakes are even harder to detect. This makes them particularly dangerous for scams.

25. Deepfake prevalence in online dating and social platforms

Deepfake images and videos are increasingly appearing in dating apps and social media. Some users create entirely synthetic personas. This raises concerns about authenticity and deception in online relationships. Platforms are beginning to address this issue.

26. The average lifespan of a viral deepfake online

Some deepfakes spread rapidly and gain traction within hours. Their viral nature makes them difficult to contain once released. Even after removal, copies often persist. This highlights the challenge of controlling digital misinformation.

27. Percentage of deepfake content flagged by AI vs humans

Detection efforts rely on both automated systems and human moderation. AI tools can scan vast amounts of content quickly. However, human reviewers are still needed for context and accuracy. The balance between the two is still evolving.

28. Legal actions taken against deepfake creators

Governments are starting to introduce laws targeting malicious deepfake use. However, enforcement remains inconsistent across regions. Legal frameworks are still catching up with the technology. This creates gaps that bad actors can exploit.

29. Public awareness levels of deepfake technology

Awareness of deepfakes is increasing, but understanding varies widely. Many people have heard of the term but don’t fully grasp its implications. Education is key to reducing vulnerability. Awareness campaigns are becoming more common.

30. The projected market size of deepfake technology

The deepfake and synthetic media market is expected to grow significantly by 2030. This includes both creation and detection technologies. Industries from entertainment to cybersecurity are investing heavily. The economic impact of this technology is just beginning to unfold.

Conclusion

So after sifting through all of that, what can we conclude? Well, for starters, deepfakes aren’t going anywhere. Whether you like it or not, this is something we’ll be seeing more of. The numbers aren’t just increasing, they’re increasing at an accelerating rate. And deepfakes themselves are evolving.

They’re being weaponized. But they’re also driving innovation. They can be used for good, if managed properly. The scales, however, are tipping. And it seems as though we haven’t quite found a comfortable equilibrium yet.

Perhaps the most important message isn’t about the tech itself, but rather how we should react to it. The importance of staying informed, staying critical, and not blindly accepting information as fact can’t be stressed enough.

In an age where anything can be made to appear as anything else, a critical eye might be the best tool we have.