It’s difficult to pinpoint exactly when, but AI-generated art shifted from being a novelty to an integral part of modern artistic practice. There wasn’t a bang, and the earth didn’t open up and swallow the old order whole. Instead, the seismic change crept up on us like a tide.

Check Out 11 Statistics on AI in Art

One moment, AI-generated visuals were just weird, glitchy images we saw floating around the web. The next, brands were using them in advertisements, designers were using them to expedite their design processes, and artists were debating whether this is a tool, a threat… or both.

It’s hard to deny the popularity of AI-generated visuals when millions of users are creating billions of AI-generated images. But there’s more to the story than just growth and market size.

The real story of AI-generated visuals is the way they are democratizing access to art, enabling artists to expedite their workflow, and challenging our very definition of art. In this article, we are going to explore that story.

A Brief History of AI Art Generators: From Research to Reality (2018-2026)

Rewind to, say, 2018: AI-generated art was not really mainstream news. It was more of a science-fair novelty that existed only in academia and a few GitHub repositories.

Generative Adversarial Networks (GANs) had been a thing for several years, but they had finally started to produce images that looked vaguely less like acid-induced hallucinations. Scientists were publishing papers that showed promise.

A commonly referenced example is NVIDIA’s StyleGAN (if you have seen synthetic photorealistic faces, that’s probably the algorithm that made them). According to this paper, StyleGAN greatly outperformed other GANs in terms of image quality.

Still not perfect, but enough to make a person say, “What, is this real?” But know what? Nobody really cared. It was still ‘cool tech,’ not ‘revolutionary tool’. In retrospect, it is almost odd how much of a lull this was before the storm.

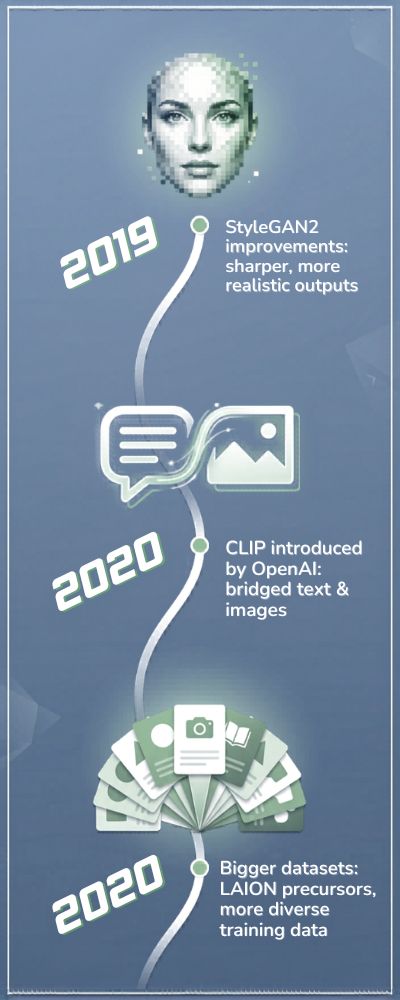

2019 to 2020: AI Gets a Little Too Damn Good

Fast forward to 2019. Interesting things started to happen. OpenAI published GPT-2 (note: this was text, but it is important). People realized that these generative algorithms were not toys anymore. The first multimodal algorithms started to appear.

And the datasets started growing. Image datasets like LAION and others started counting in the billions. And this last point is important. Here is a quick rundown of where things were:

| Year | Key Development | Why It Mattered |
|---|---|---|
| 2019 | StyleGAN2 improvements | Sharper, more realistic outputs |
| 2020 | Bigger datasets (LAION precursors) | More diverse training data |
| 2021 (Jan) | CLIP introduced by OpenAI | Bridged text and images |

Then, in January 2021, OpenAI’s CLIP model came out (see the official research page). It matched text and images in a way that made it possible to build text-to-image generation models. The early ones were not great, but the seed was sown.

And, let’s be honest here, that’s when the outputs started getting a little bit creepy. You’d type something and this model would… kinda try to understand it. Not really, but kinda. Enough to freak you out.

2021: The Year the Internet Started Paying Attention

Fast forward to 2021 and that’s when things started getting wild. Models like VQGAN+CLIP were being shared. Artists, enthusiasts and mad scientists were playing with them and posting the outputs on Twitter and Reddit.

All of a sudden, AI generated art wasn’t something you read about in a research paper, it was something you could actually see. As you can see from the Papers with Code benchmarks, research into image generation went bananas around this time.

And I’m just gonna say it, most of the outputs were still… kinda ugly. Sometimes dreamlike and creative, but mostly just kinda ugly. Extra fingers, melting faces, general surrealist nonsense. But people couldn’t get enough of it. Why? Because it felt like you were seeing the inside of a computer’s brain.

2022: The Explosion (You Probably Felt This One)

This is the year when things went mainstream. Models like Midjourney, DALL·E 2 and Stable Diffusion didn’t just make things better quality, they also made them easier to access. You no longer had to be a researcher to generate AI art. All you needed was an internet connection and a healthy dose of curiosity.

Now, let’s talk some numbers:

| Tool | Launch Year | Key Stat |
|---|---|---|
| DALL·E 2 | 2022 | Over 3 million users within months (source) |
| Midjourney | 2022 | Millions of Discord users by end of year |
| Stable Diffusion | 2022 | 10+ million daily users at peak (source) |

That was when Stable Diffusion was open sourced. It kinda… opened up AI art. You could now run this on your own machine. No intermediaries. And that was when things started to get dodgy. Artists started complaining.

Companies started circling. Twitter… you know what Twitter does. You could almost feel the ambivalence: excitement and discomfort, intertwined.

2023 to 2024: Expansion, Controversy, Monetization

By 2023, AI art was no longer a novelty. It was now a vertical. The global AI art market had gotten big enough to generate some noticeable revenue. Grand View Research projected the broader AI market to grow at a compound annual growth rate (CAGR) of over 35%, with creative tools being a subset of that.
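To get a feel for what a growth rate like that means, here is a minimal sketch of the compounding arithmetic. The starting value of 100 is an arbitrary index chosen for illustration, not a market-size figure from any report, and the function names are made up for this example.

```python
import math


def project(value: float, cagr: float, years: int) -> float:
    """Compound `value` forward at a constant annual rate `cagr` (0.35 = 35%)."""
    return value * (1 + cagr) ** years


def doubling_time(cagr: float) -> float:
    """Years needed for a quantity to double at a constant compound rate."""
    return math.log(2) / math.log(1 + cagr)


# Hypothetical index: 100 in year 0, growing at 35% per year.
for year in range(6):
    print(year, round(project(100, 0.35, year), 1))

# At 35% per year, doubling takes a bit over two years.
print(round(doubling_time(0.35), 2))
```

In other words, anything that sustains 35% a year ends up roughly four and a half times larger after five years, which is why that number gets quoted so often.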

AI art is not a tool anymore. It’s a feature. It’s baked into design tools, marketing automation, video editing, etc.

We’re no longer having the debate about whether AI can do art or not. We’re having the debate about how much of art will not be made with AI. Some of the numbers being bandied about are staggering.

This report from McKinsey on generative AI estimates that generative technologies could add trillions of dollars in annual value to the global economy.

Personally, I believe that it’s not just about productivity. It’s a shift in what we mean by creativity. That’s exciting… and, I’ll admit, a little unsettling. At some point, between those terrible 2018 GAN faces and the photorealistic images of today, we crossed some sort of Rubicon.

Not necessarily a bad one. Just… a Rubicon. And I’m not sure that we fully appreciate what we’ve gotten ourselves into yet.

The Rise of AI Art: Understanding Demographics, Industry Use Cases, and Global Penetration

You’d think it’s just developers and coders, right? I assumed that. But it isn’t. According to a 2023 report from Pew Research, roughly half of adults under 30 in the US have used AI for creative purposes.

Okay, that’s not surprising. But what is surprising is that people like teachers, small business owners, and other non-artistic types are using it too.

I talked to a friend of mine who has a small candle-making business, and she uses AI generated images to promote her products because she can’t afford to hire a photographer. And her candles look amazing. Too amazing.

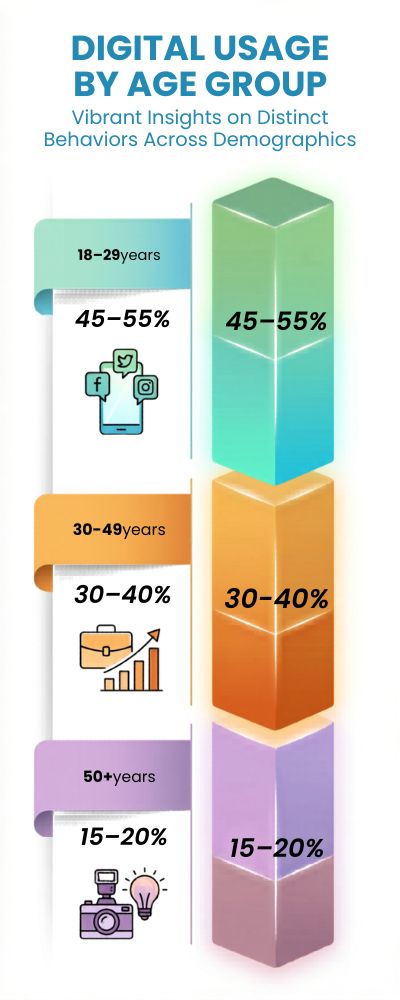

Almost half of adults 18-29 in the US have used AI for creative purposes.

| Demographic Group | Estimated Usage Rate | What They’re Doing |
|---|---|---|
| 18–29 years | ~45–55% | Experimenting, social content, side projects |
| 30–49 years | ~30–40% | Business, marketing, branding |
| 50+ years | ~15–20% | Occasional use, curiosity-driven |

Gen Z just dives right in. Gen X just wants to dip a toe in. Can’t say I blame ’em, imo.

The Creative World Is… Complicated Right Now

OK, this is where things get a little awkward. Ad agencies? They love AI art. Need-coffee-in-the-morning love AI art. More than 60% of marketers were already using AI-generated images by 2024, according to Statista. That’s not a trend. That’s a wave. Why wouldn’t it be?

It’s faster. It’s cheaper. You can do 1000 takes. I need 10 versions of this ad for tomorrow? Easy. But talk to a handful of illustrators and you’ll hear something different. Like, a REALLY different story. The Upwork Freelance Forward report says that some types of creative work are in lower demand. Not dead. Just… limping.

So what’s going on? Real talk? I don’t think it’s about replacement so much as it is about being pushed. Like the entire industry just got sent a “shape up or ship out” message. And some people didn’t sign up for that.

It’s Not Just “Art” Anymore (And That’s the Weird Part)

There was a point in time when AI art went from, “Oh look, pretty pictures!” to… other things. I know this sounds dumb, but hear me out.

Online retailers are using it to create product mockups. Video game designers are using it to design levels. Teachers are using it to make their PowerPoint presentations somewhat less awful. So how is it spreading?

| Industry | How AI Art Is Used | Impact |
|---|---|---|
| Marketing | Ads, social visuals | Huge |
| Gaming | Concept art, early assets | Growing |
| E-commerce | Product images, mockups | Huge |
| Education | Visual explanations | Moderate |
| Media | Illustrations, covers | Mixed |

Generative AI, by itself, could add hundreds of billions of dollars in value to just marketing and sales, according to McKinsey. That was the part that made me pause.

Because once something becomes that valuable, it doesn’t go away. It just becomes normal. And we’re already there. Half the time, you don’t even realize an image is AI-generated anymore. It just blends in.

This Isn’t Just a U.S. Story (Not Even Close)

There’s a tendency to frame all of this as a Silicon Valley thing. But that’s… kind of outdated thinking. Adoption is exploding in places like India, Brazil, and across Southeast Asia. Why? Because AI tools remove barriers. You don’t need expensive software or years of training.

You just need an idea and a prompt. The World Economic Forum noted that AI-related skills are among the fastest-growing globally, especially in emerging economies. Here’s how things roughly stack up:

| Region | Adoption Trend | What’s Driving It |
|---|---|---|
| North America | High | Enterprise use, startups |
| Europe | Medium–High | Creative sector, regulation |
| Asia-Pacific | Rapid | Mobile-first users, scale |
| Latin America | Growing | Freelancers, small businesses |
| Africa | Emerging | Access, education initiatives |

And this aspect of the equation is… hopeful. No longer is creativity gated by the cost of a tool. Previously disenfranchised individuals are now producing what is apparently professional content.

This is important.

So Where Does That Leave Us?

I find myself unable to get past the idea that this is not just a technological advancement, but also a sociological one.

AI art is empowering some and disenfranchising others, accelerating certain processes while completely redefining the concept of work in others.

If you’re here for a binary answer to the question of whether this is good or bad… sorry.

Perhaps the more important question, then, is how we can move forward and allow technology to serve us while still preserving the human element of art.

That’s what’s important.

State of AI Image Generation: Comparing Midjourney, DALL-E, and Stable Diffusion

These three tools tend to get lumped together because they are viewed as similar. They are not. They really aren’t. Working with Midjourney vs DALL-E vs Stable Diffusion is a bit like working with three different characters.

Midjourney is the artist. DALL-E is the assistant. Stable Diffusion? That’s your friend the tinkerer. “I’ll handle this. I’ll make it work. Just give me 5 minutes.”

We see this in their communities as well. By 2024, Midjourney already had over 15M users (via Exploding Topics). Stable Diffusion, being open source, has been downloaded and instantiated millions of times (across various apps). DALL-E (via OpenAI apps) had millions of users within months of launch (via OpenAI website). Same genre…different everything else.

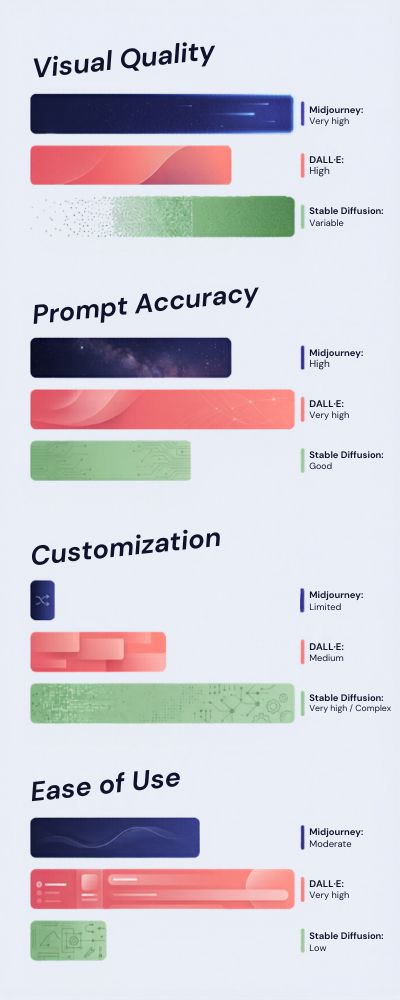

Output Quality: Beauty vs Control vs Consistency

If you’ve tried generating the same prompt across all 3 models, you will have noticed that they don’t quite see the world in the same way. Midjourney tends to produce the most beautiful results. Too beautiful. Like it’s trying to win an art contest or something. Fantastic if you want something beautiful.

Not great if you want something specific. DALL-E seems more…obedient. It follows the prompt more closely. In fact, the OpenAI DALL-E 2 paper describes the objective as finding a balance between realism and following the prompt.

Stable Diffusion is different again. The output isn’t always as polished out of the box, but can be optimized to outperform both of the above.

| Feature | Midjourney | DALL·E | Stable Diffusion |
|---|---|---|---|
| Visual Quality | Very high | High | Variable |
| Prompt Accuracy | Medium | High | Medium–High |
| Customization | Low | Medium | Very high |
| Ease of Use | Easy | Easy | Moderate |

To summarise: Midjourney is awesome, DALL·E is obedient, Stable Diffusion is flexible.

Who Can Use These Models?

Now we are getting somewhere. Midjourney is accessible primarily through Discord. This is an… interesting choice. Some people love it, others are baffled. Regardless, it certainly provided access to a lot of previously non-techy users. DALL·E is only available through more refined applications.

It feels secure. It feels contained. It is intended to be so. OpenAI has been fairly conservative when it comes to the misuse of its models. It even has a content policy about it. Stable Diffusion took the alternative approach. It was made open source. It has no masters.

According to Stability AI, millions of users downloaded the model in the months after it was released. This is the point where I get a little torn. I believe access to tools like this is fundamentally a good thing. But it means less control. You are effectively gifting people a loaded gun and crossing your fingers. Sometimes this works out. Other times, less so.

What You Would Use Each Model For

This is largely dependent on the task you wish to undertake. And what kind of user you are. A hammer is not a screwdriver. Using a hammer to drive a screw is just going to piss you off.

| Use Case | Best Tool | Why |
|---|---|---|
| Concept art | Midjourney | Strong visuals, fast inspiration |
| Marketing visuals | DALL·E | Reliable, consistent outputs |
| Custom workflows | Stable Diffusion | Full control, extensibility |
| Experimentation | Stable Diffusion | Open-source flexibility |
| Quick social content | Midjourney | Eye-catching results |

I think I see an interesting thing happening here: people aren’t just choosing tools based on capabilities, they’re choosing tools based on the way they want to work. Some want simple, some want control, some want something that “looks cool”. And you know what? They’re all right.

Which One Wins?

That’s the wrong question. I know, kind of a cop out, but bear with me. There isn’t really a “best” tool because they’re solving slightly different problems. It’s like asking if Photoshop is better than a camera. Well, it depends on what you’re trying to do.

What is more interesting is the way they’re setting expectations. Folks now expect to see things instantly, generate a million options, and get near perfection on demand. That’s different.

And if I’m being completely honest, a little exhausting at times. Not because the tools are bad, but because they’re so good that it changes the tempo of everything around them. We’re still sorting out what that means.

The Rise of AI-Generated Design: Productivity and Cost Savings Over Human Creators

The first time you witness AI producing a refined design in less than a minute, it plays with your perception of time. You find yourself wondering, but this took us hours… or even days.

And it’s not just your imagination. A report by McKinsey showed that generative AI tools can boost productivity in creative and marketing tasks by up to 40%. That’s not some marginal improvement. That’s the difference between hitting your deadline and getting some sleep.

I’ve spoken with designers who use AI for first drafts. Not the final result (not yet, anyway), but as an initial point of reference. As one described it: “It’s like having an intern who never sleeps, but also never really understands the humor.” That phrase has always stuck with me.

The Economics of It All: Where Things Get Interesting

Alright, let’s get serious for a second.

This is where businesses start to take notice.

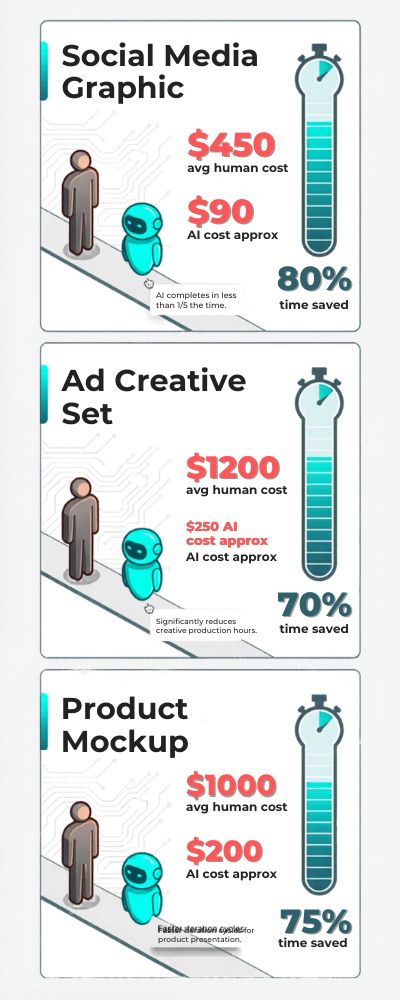

The cost of hiring a freelance designer for a single project ranges anywhere from $50 to $500+ depending on the scope of the project. An agency? Even more. An AI design tool? Somewhere between $10 and $30 a month.

So… you do the math.

A report by Statista found that businesses who implemented generative AI tools reported cost savings of up to 30% in their content production processes.

Here’s a rough example:

| Task | Human Cost (Avg) | AI Cost (Approx) | Time Saved |
|---|---|---|---|
| Social media graphic | $50–$150 | <$1 per image | 80–90% |
| Ad creative set | $200–$1000 | $10–$30/month | 70–85% |
| Product mockup | $100–$300 | <$1 per image | 80%+ |
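If you actually want to do that math, a rough sketch looks like this. It only uses the ballpark figures quoted above (a $30/month subscription at the top of the range, $50 per graphic at the bottom), the function names are made up for illustration, and it deliberately ignores things like the time spent prompting, reviewing, and fixing outputs.

```python
import math


def break_even_images(subscription_per_month: float, human_cost_per_image: float) -> int:
    """Smallest number of images per month at which a flat subscription
    costs no more than paying a person per image."""
    return math.ceil(subscription_per_month / human_cost_per_image)


def monthly_saving(images: int, subscription_per_month: float, human_cost_per_image: float) -> float:
    """Difference between paying per image and paying one flat subscription."""
    return images * human_cost_per_image - subscription_per_month


# Most expensive subscription vs. cheapest human-made graphic from the figures above.
print(break_even_images(30, 50))   # 1
print(monthly_saving(10, 30, 50))  # 470
```

Even with the least favorable pairing, the subscription pays for itself with the first image, which is pretty much the whole sales pitch.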

I can understand why. You’re probably running a business and thinking “why wouldn’t I use this?”

But Is It Actually Better… or Just Faster?

This is where it gets tricky. AI is fast. I’m not going to argue that. But better? Well, that depends.

According to a study by the National Bureau of Economic Research, using generative AI significantly increased worker productivity, particularly for less experienced workers. That makes sense. AI can help you get to “good enough” a lot faster.

But “good enough” isn’t always the goal, is it? There’s still something about human design, the intention, the nuance, the weird little decisions that don’t follow logic but somehow work.

AI can kind of do that… sometimes. But it doesn’t feel the same. And yeah, maybe that’s a bit sentimental, but I don’t think I’m the only one who feels that way.

Who Benefits the Most? (Spoiler: Not Everyone Equally)

This isn’t being felt equally across the board. Small businesses? They’re benefiting. Massively. They can suddenly produce work that would have been cost prohibitive before. Marketing teams? They’re also benefiting. Faster turnarounds, more revisions, less waiting for other people.

But for freelance designers, especially those doing lower-end, high-volume work, yeah, this is getting harder. The Upwork Freelance Forward report touched on this last year, noting that some creative fields actually saw a decline in demand. It’s playing out roughly like this:

| Group | Impact | Why |
|---|---|---|
| Small businesses | Positive | Lower costs, more access |
| Enterprises | Positive | Scale + efficiency |
| Freelancers (entry-level) | Negative | Price pressure |
| Senior designers | Mixed | Shift toward strategy, not execution |

This is where I struggle a bit. These numbers represent creatives and designers who are trying to make a career out of designing.

Are We Replacing Designers or Redefining Them?

I do not think designers will be replaced. That’s too simple. I think what it means to be a designer is going to change. Instead of creating from a blank slate, you’ll be polishing, editing and guiding. Less “craftsman” and more “editor” or “art-director”.

Some designers love this change. Others…do not. Frankly, I think both perspectives are right. If there is a conclusion to be made here, it’s this. AI is not just making design more efficient or affordable.

It is, in some ways, redefining what it means to be creative. And I do not think we know if that’s a fair trade yet.

What Fraction of AI-Generated Art Is Trained on Human Creators?

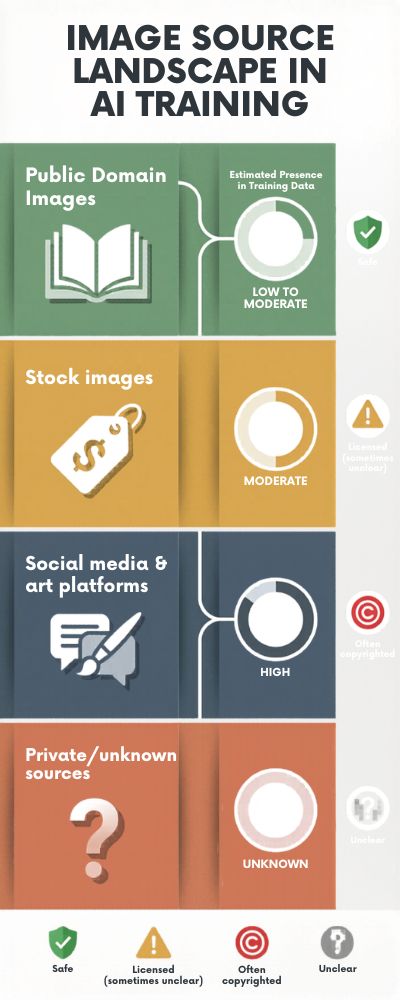

I see this question repeatedly pop up, not in a theoretical, detached way, but more like: “Wait… did this thing learn from ME?” Short answer? Probably. Most state-of-the-art image models are trained on datasets crawled from the internet.

One of the largest, most frequently cited datasets, LAION-5B, has over 5 billion image-text pairs, according to a post on the official LAION blog. That’s not a painstakingly hand-curated museum dataset. That’s the internet. Everything on it.

Messy, brilliant, copyrighted, public, private, all jumbled together. And much of it, quite possibly most of it, is comprised of human-generated artwork. Illustrations, photos, digital paintings. Things made by people. Often without their permission.

So when you see people ask “What percentage of AI art is trained on human artists?”, the true answer is… a very large one. Possibly the majority.

Breaking It Down (As Much As We Can)

There isn’t a hard percentage. Anybody who tells you “exactly 60%” or “75%” is making a guess. But we can attempt to estimate the rough order of magnitude. This analysis of large-scale image datasets cites a research paper suggesting that datasets like LAION largely consist of images crawled from websites like Flickr and similar platforms.

For the sake of simplicity, let’s look at it like this:

| Source Type | Estimated Presence in Training Data | Copyright Status |
|---|---|---|
| Public domain images | Low–Moderate | Safe |
| Stock images | Moderate | Licensed (sometimes unclear) |
| Social media & art platforms | High | Often copyrighted |
| Private/unknown sources | Unknown | Unclear |

So yeah, if you’ve ever been an artist and posted your work online… it’s probably in a dataset somewhere. Maybe, not definitely, but probably.

Why This Feels Personal (Because It Is)

What I’ve found is that this isn’t just a technical conversation, it’s an emotional one. For the companies building AI, it’s just “training data” and “model performance.” For the artists whose work is being used, it’s more like “you used my work to build something that could replace me.”

Those are two very different feelings. Getty Images, for example, has an ongoing lawsuit against Stability AI that you can read about in Getty’s official statement. Their beef? That copyrighted images were used without permission to train models like Stable Diffusion.

And it’s not just big companies. Individual artists have also brought lawsuits alleging that their styles (and in some cases signatures) are being emulated. I dunno man… if I saw something that looked like I made it but I didn’t… that’d be kinda weird.

Is It Illegal… or Just Unclear?

This is where things get hazy. In many places, scraping public data to train AI may be considered “fair use” or fall under similar doctrines, but it’s not entirely clear, and the issue is currently being challenged.

According to the U.S. Copyright Office, AI generated works may not be eligible for copyright if there isn’t a certain amount of human authorship, but that still doesn’t fully clarify the use of public data for training. So we’re kinda stuck in this in-between place:

- Maybe the data was scraped legally

- Maybe the results are ambiguous

- Maybe the ethics are still debatable

Not super comforting.

Where Do We Go From Here?

Some platforms are starting to offer opt-out tools. Some are experimenting with licensed datasets. That’s a move in the right direction, but it still feels… incomplete. In my opinion, and this is just my opinion, we need more clarity on what is and isn’t okay here.

Not to slow the technology down, but to make sure that people aren’t getting run over in the process. Because ultimately, every dataset is millions and millions of individual human choices, styles, late nights, and risks taken.

And maybe the question isn’t just “how much data was used?” Maybe the question is “how can we make the use of that data more fair?”

Understanding the Copyright Issues

This is a question where you could get five different answers from five different people, which in itself should be a sign of what is to come. Because, at its core, we’re looking at a basic assignment of credit and payment. The thing is though, AI doesn’t make art without first learning to do so by way of the art of human artists.

Typically this happens by scraping up as much art as it can find on the internet. For instance, there’s the LAION-5B dataset, which you can read more about at LAION’s website, where billions of image-text pairs have been scraped from publicly available internet sources.

That sounds cool and all (it is), but it also sounds a bit, well, ominous. That’s because “publicly available” isn’t always synonymous with “publicly usable.”

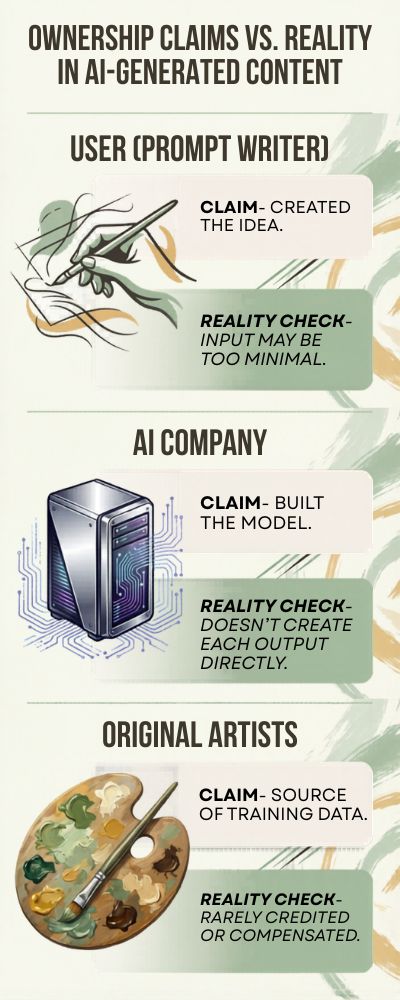

Ownership: Is It the Prompt, the Model, or the Data?

Here’s where this all gets a bit messy. According to the U.S. Copyright Office, if an AI creates a work without a human author, that work cannot be copyright protected. So, if you prompt an AI art generator and it spits out an image, do you own the copyright? Well… maybe.

Kind of. Possibly. The thing is, companies are also building these AI models, training them, and offering them as a service. So maybe the company owns the copyright for the generated art? Or maybe it’s the person who inputted the prompt. It’s… not really clear. Here’s a rough overview:

| Stakeholder | Claim to Ownership | Reality Check |
|---|---|---|
| User (prompt writer) | Created the idea | Input may be too minimal |
| AI company | Built the model | Doesn’t create each output directly |
| Original artists | Source of training data | Rarely credited or compensated |

Alright, so… that’s complicated.

The Lawsuits (Because Of Course There Are Lawsuits)

This isn’t theoretical anymore. This is happening in courts. Getty Images is suing Stability AI for use of its images without a license. You can see their side of it in Getty’s statement. There are class-action suits from artists whose work, and whose styles, were used to train AI models without permission.

And, frankly, you can kind of see both sides here. The tech companies are saying this is basically the same as how a human learns, looking at other people’s stuff. The artists are saying it’s basically the same as copying, but on a massive scale. Neither of those feels entirely wrong. Which is… unhelpful.

Fair Use or Fair Game?

Most of the legal argument is around “fair use”. In some jurisdictions, using publicly available data to train may be fair use, depending on the circumstances. Except… AI happens on a scale that copyright law didn’t really consider. WIPO says global law hasn’t really caught up with AI yet, particularly around training and derivative works.

So we’re left with this odd situation where things are allowed in some cases, debated ethically in many, and unresolved legally in most of the world… which isn’t exactly reassuring if you want to make a living from creative work.

So What Would “Fair” Even Look Like?

Now we’re into more positive territory. We’re seeing proposals for how this could work:

| Approach | What It Does | Challenge |
|---|---|---|
| Opt-out systems | Artists remove work from datasets | Hard to enforce globally |
| Licensing models | Paid access to training data | Complex and costly |
| Attribution systems | Credit original creators | Difficult at scale |
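To make the opt-out idea a bit more concrete, here is a minimal sketch of what filtering a scraped dataset against an opt-out list could look like. Everything in it is an assumption for illustration: the record layout (a dict with a `url` key), the host-based opt-out list, and the `.example` domains are all hypothetical, and this is not how any particular dataset or platform implements opt-outs.

```python
from urllib.parse import urlparse


def host_of(url: str) -> str:
    """Return the lowercase hostname of a URL, or an empty string if there is none."""
    return (urlparse(url).hostname or "").lower()


def filter_opted_out(records, opted_out_hosts):
    """Keep only records whose image URL is not hosted on an opted-out site."""
    blocked = {host.lower() for host in opted_out_hosts}
    return [record for record in records if host_of(record["url"]) not in blocked]


# Hypothetical scraped records and a hypothetical opt-out list.
records = [
    {"url": "https://artist.example/portfolio/dragon.png", "caption": "a dragon"},
    {"url": "https://stock.example/photos/123.jpg", "caption": "a city street"},
]
kept = filter_opted_out(records, {"artist.example"})
print(len(kept))  # 1
```

It also shows why the table calls this hard to enforce: a copy of the same image re-posted on a different site sails straight through a filter like this.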

In my opinion, some middle ground will have to be reached. I don’t feel like the status quo is… tenable.

The Human Layer

Ultimately, when we’re discussing laws and technicalities, there are human beings involved here. Artists that took years to hone their craft, accumulate bodies of work and find their unique voices.

Who are now recognizing elements of those voices in works that they did not author.

This isn’t just a matter of copyright law. This is a matter of personal identity.

Perhaps then, we should not be striving to find what is legal. Perhaps we should strive to find what is equitable. And build from there.

Because if art becomes just another type of data to be scraped… we are going to lose something fundamental.

Surveying the Attitudes of Professional Artists Towards AI-Generated Art

If you ask 10 artists about AI, you’ll get at least 12 responses. Some love it. Some hate it. Some are less than impressed. In 2023, ArtNews published a survey that found most professional artists were concerned about AI-generated art, with particular concerns around copyright and employment. That’s hardly surprising.

What is more interesting is that some of them were also experimenting with AI themselves. So, it’s not really artists vs AI. More like artists on a spectrum that are mostly moving back and forth along that spectrum as they learn more.

And honestly, that makes sense. If something excites and scares you, it’s kinda hard to have a fixed view on it.

Numbers, Please!

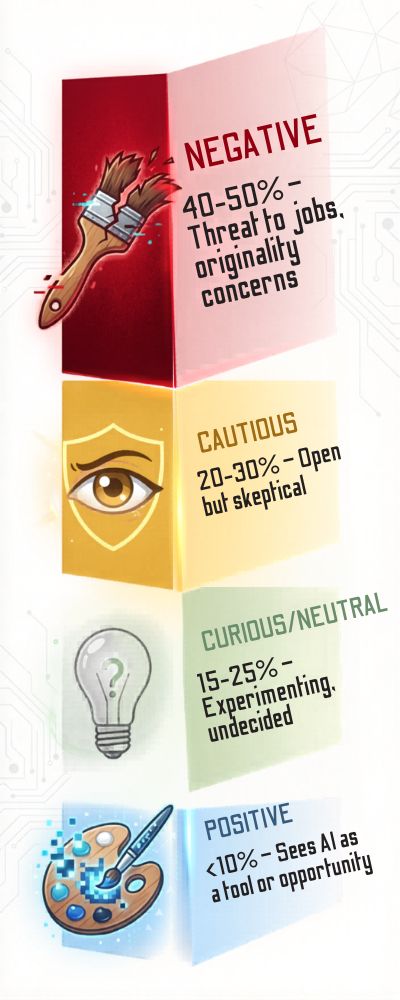

Let’s quantify this a bit because otherwise we’re just into opinions and Twitter fights. According to a survey referenced in this article from Designboom:

- 65 to 75% of artists were negative/conservative about AI art

- 20 to 30% of artists were positive/curious about AI tools

- <10% of artists were fully positive/adopting AI tools

So, bear with me for a sec.

| Attitude Toward AI Art | Estimated Share | Typical Perspective |
|---|---|---|
| Negative | 40–50% | Threat to jobs, originality concerns |
| Cautious | 20–30% | Open, but skeptical |
| Curious/Neutral | 15–25% | Experimenting, undecided |
| Positive | <10% | Sees AI as a tool or opportunity |

And honestly, those seem like fairly accurate numbers after hearing from and speaking with many. There’s a lot of resistance, but not outright refusal.

Fear, Frustration… and a Bit of Curiosity

What’s interesting is that this emotional response keeps coming up. Obviously, there’s fear. The Upwork Freelance Forward report mentioned a decline in demand in a few creative sectors, and it’s clear artists have noticed.

How could they not? But there’s also frustration, not just at the tech itself, but at how fast it came on the scene. Little to no advance notice, no gentle slope. One day you’re perfecting your craft, the next day a prompt can produce a similar result. And yet… there’s also curiosity.

I’ve seen artists playing around in secret with AI tools and even liking it. Though usually not publicly. There’s a bit of a stigma attached to admitting you use it, as if it somehow diminishes your “traditional” abilities. Which is unfortunate, because tools don’t replace skill, they just alter the way it’s channeled.

What Artists Actually Want (It’s Not That Extreme)

Now here’s something you may not realize: most artists aren’t demanding that AI be removed. They’re demanding something simple. In a discussion about generative AI and artists, the World Economic Forum relayed that a significant number of creatives are in favor of:

- Mechanisms to obtain consent for training data

- Royalties or other compensation models

- Attributions that explain how the AI model was trained

All fairly reasonable requests, if you ask me. Not radical in the slightest. In fact, you could say it’s just common decency.

| Key Concern | What Artists Are Asking For |
|---|---|
| Training data use | Consent and transparency |
| Style replication | Attribution or protection |
| Job displacement | Fair compensation models |

And when you take a step back, it’s not about being anti-technology. It’s about being pro-accountability.

So Where Does That Leave Us?

It feels like way too much of a binary to ask whether artists are “for AI” or “against it.” Many are just like everyone else and navigating this in real time. If I had to generalize it, I’d say this: artists aren’t against new tools. They’ve adapted to all of the ones before.

What they’re against is the pace, and the lack of agency. And perhaps that’s the bigger debate we need to have. It’s not about AI, it’s about people’s lack of agency in the future.

Measuring Bias in AI-Generated Art: What the Data Shows

The thing is, you don’t really see the bias in AI-generated images at first. At first, it all seems so cool and neat and, well, magical. Then you do a few searches for, say, “CEO” or “doctor” or “beautiful person,” and you start to notice something. I did this experiment.

I typed “successful entrepreneur” and got mostly middle-aged men in suits. I typed “nurse” and, no surprise, mostly women. It wasn’t necessarily surprising, but it was … interesting. And it isn’t just anecdotal.

In a study mentioned in this arXiv paper on bias in text-to-image models, researchers found that generative models amplify existing social biases in the datasets they are trained on. In other words, the AI didn’t decide to be biased. It learned to be biased from us. And somehow that’s even worse.

What the data really says

Researchers have been digging into the data on this, and it isn’t pretty. From Brookings Institution analysis: “The search terms for high-prestige occupations such as CEOs and scientists produced predominantly male images and in many cases predominantly white male images.

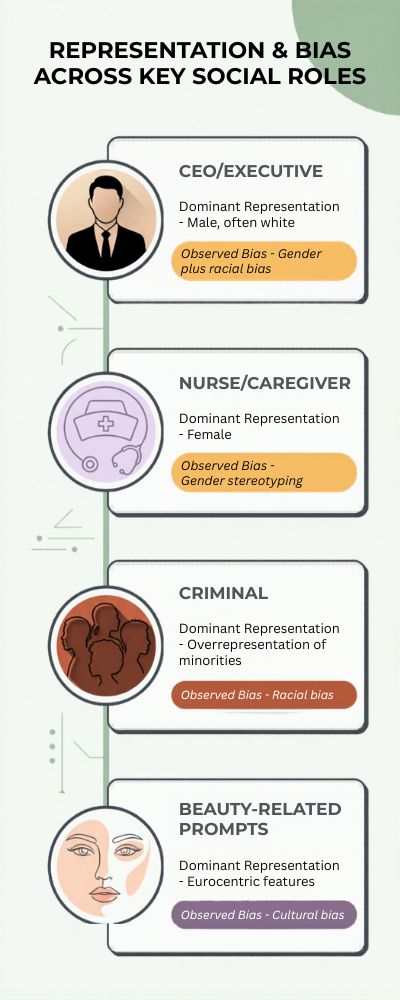

Lower-paying jobs and caregiving roles produced predominantly female images and/or certain ethnic groups.” Here’s a little snapshot of some of the data:

| Prompt Category | Dominant Representation | Observed Bias |
|---|---|---|
| CEO / Executive | Male, often white | Gender + racial bias |
| Nurse / Caregiver | Female | Gender stereotyping |
| Criminal | Overrepresentation of minorities | Racial bias |
| Beauty-related prompts | Eurocentric features | Cultural bias |

To add an extra layer of perspective, we’re not looking at a single model here. The phenomenon was observed across several models. This means that it isn’t a ‘glitch’ in any one particular model. It goes a level deeper.

Why This Is a Chronic Issue (Even When We Attempt to Solve It)

At this point you may be asking yourself, “But wait, can’t we just correct the datasets?” Well, ideally yes. In reality… not so much. Datasets such as LAION-5B (see LAION for full documentation) are crawled from the internet.

As we’re all aware, the internet doesn’t exactly represent a level playing field. It is littered with the historical remnants of inequality, cultural bias and just about every other messy element of humanity.

This means that even if you attempt to filter or re-weight the data, the source material is not pure to begin with. Some platforms have tried measures such as prompt tweaks and output filters to offset the issue; however, Google AI researchers have noted that mitigating bias remains a ‘persistent challenge’. In other words, you can influence the machine, but you cannot fundamentally change it in a day.

The Human Element of This (Because It’s Not Entirely Technical)

This is where things get a little more touchy-feely. The bias we’re witnessing in AI generated art does not exist in a vacuum of statistics; it has an impact on how we perceive ourselves and others.

If certain groups continue to be underrepresented or misrepresented, this will have a profound effect on perception over time. I know that sounds like a bit much, but hear me out. I’ve seen people input prompts about their own identities and feel a little let down by the results. That isn’t just a ‘data problem’. That’s an emotional response.

So What Can We Do About This?

Now, I’m not going to sit here and tell you that there is a definitive solution, but here are a few suggestions that could help push things in the right direction…

- Diverse and curated training datasets

- Model training disclosure

- User output controls

- Ongoing bias testing & audits

| Approach | Potential Impact | Limitation |
|---|---|---|
| Dataset diversification | Reduces bias | Hard to scale |
| Output filtering | Immediate effect | Can feel artificial |
| Transparency | Builds trust | Doesn’t fix bias alone |
| User controls | Empowers users | Requires awareness |
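To make the “ongoing bias testing” idea a bit more concrete, here is a minimal sketch of what a very basic audit could look like. It assumes someone has already generated a batch of images for one prompt and labeled each one by hand; the labels, the reference shares, and the function names are all hypothetical and exist only for illustration. A real audit would need far more images, careful annotation, and a defensible reference point.

```python
from collections import Counter


def representation_shares(labels):
    """Return the share of each label among the annotated images."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: count / total for label, count in counts.items()}


def representation_gap(labels, reference):
    """Observed share minus reference share, for each group in the reference."""
    shares = representation_shares(labels)
    return {
        group: shares.get(group, 0.0) - expected
        for group, expected in reference.items()
    }


# Hypothetical hand-labeled results for 10 images from a single prompt.
annotations = ["man"] * 8 + ["woman"] * 2
# Hypothetical reference shares; choosing them is a judgment call.
reference = {"man": 0.5, "woman": 0.5}

gaps = representation_gap(annotations, reference)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.2f}")
```

A positive gap means a group shows up more often than the reference suggests, a negative gap means less often. Rerunning the same tally after each model or filter update is one simple way to see whether things are actually moving.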

So if you ask me, the goal shouldn’t be “perfect neutrality” (which may not even be achievable). The goal should be awareness and responsibility. Because at the end of the day, these systems are reflecting us. And maybe the real question is… are we ready to confront what they’re showing back?

The Future of AI-Generated Art: Extrapolating Based on Current Trends and Data (2030)

Whenever someone makes a bold prediction about the future of AI, I have to smile. It’s not that they’re wrong… this industry just moves like it’s been drinking way too much coffee.

But we can make some educated predictions. I’m not talking tea leaves and mystic caves here, just… connecting the dots.

Take this report from McKinsey, for example, which estimates that generative AI could add between $2.6 and $4.4 trillion annually to the global economy, with a sizeable portion of that going straight into the creative fields, including design, media, advertising, etc.

In other words, AI art isn’t going anywhere. In fact, it’s probably just getting started.

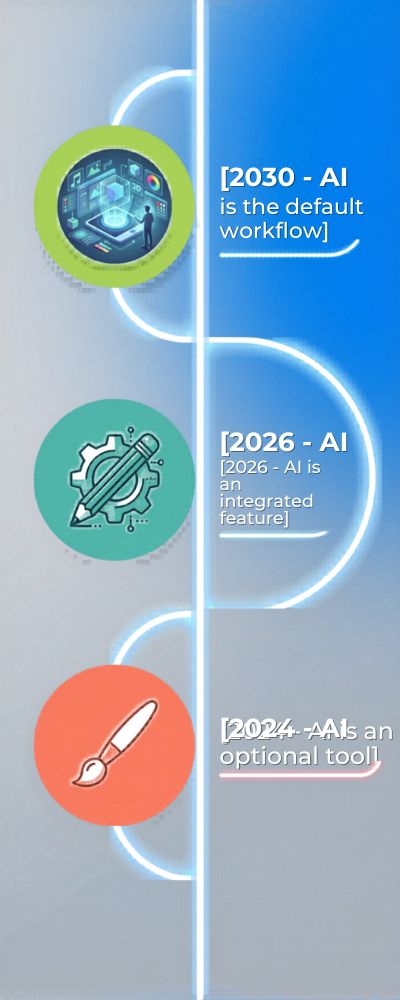

From Tool to Default (You Might Not Even Notice It)

As of 2024, creating AI art still feels like a deliberate decision. By 2030, that probably won’t be the case anymore.

It will simply… be. It will be built into everything.

AI generation will become the default layer for design software, marketing platforms, video editors and so on, not something you have to actively enable or even actively notice. (The direction Adobe is heading with their own AI tools is already visible in Adobe Sensei.)

Here’s how that might look:

| Year | AI Role in Creative Work |
|---|---|
| 2024 | Optional tool |
| 2026 | Integrated feature |
| 2030 | Default workflow |

And that changes behavior. When something becomes frictionless, people stop questioning it. They just use it.

That’s both exciting and… a little unsettling, if I’m honest.

Hyper-Personalized Everything (Yes, Even Your Ads)

One trend that feels almost inevitable is personalization going into overdrive.

Imagine this: instead of one ad campaign, brands generate thousands of variations tailored to individuals, your preferences, your browsing habits, even your mood.

Not sci-fi. Just scale.

A report from Gartner suggests that by the end of the decade, a significant portion of marketing content could be AI-generated and dynamically customized.

Here’s what that could look like:

| Content Type | Today | By 2030 |
|---|---|---|
| Ads | Dozens of versions | Thousands per audience |
| Product images | Static | Dynamically generated |
| Social media | Scheduled posts | Real-time adaptive content |
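The jump from dozens of versions to thousands is mostly just multiplication. As a rough sketch (the template, the option values, and the `build_prompts` helper are all made up for illustration, not taken from any real campaign or tool), here is how a few short lists of options expand into every possible combination:

```python
from itertools import product
from math import prod


def build_prompts(template, options):
    """Expand a prompt template over every combination of option values."""
    keys = list(options)
    return [
        template.format(**dict(zip(keys, combo)))
        for combo in product(*(options[key] for key in keys))
    ]


# Hypothetical creative options for a single ad concept.
options = {
    "product": ["running shoes", "trail shoes"],
    "setting": ["city street", "mountain path", "beach"],
    "mood": ["energetic", "calm"],
}
template = "{product} on a {setting}, {mood} lighting"

prompts = build_prompts(template, options)
print(len(prompts))                             # 12
print(prod(len(v) for v in options.values()))   # 12, same number by multiplication
print(prompts[0])
```

Three small lists already give 12 variants. With ten options on each of four axes, the same loop would produce 10,000, which is where the “thousands per audience” scale comes from.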

Cool? Yes. A little creepy? Yes, that too.

New Creative Roles (Not What You’d Expect)

People worry about AI “stealing jobs” from creatives. I don’t think it’ll happen.

What it will do is change jobs.

We’re already starting to see the emergence of prompt engineers, AI art directors, creative strategists who don’t create things, but instead help systems create things.

According to the World Economic Forum’s Future of Jobs Report 2023, AI skills are among the fastest growing in demand, and creative AI skills are particularly valued.

Here’s a rough look at where we might be heading:

| Role | Then | Now | 2030 Projection |
|---|---|---|---|
| Designer | Creates assets | Uses AI tools | Directs AI systems |
| Illustrator | Draws manually | Hybrid workflow | Style specialist |
| Marketer | Plans campaigns | Uses AI content | Oversees AI ecosystems |

It’s not so much about being a one-man band as it is about learning how to pilot the plane.

The Part People Don’t Like to Talk About

Not everything scales well. There’s still the originality thing. If everyone has access to the same tools, does everything end up looking… the same? I’ve already seen a bit of an “AI style” emerging. A certain polished, ever so slightly surreal quality to things.

It’s beautiful, but once you’ve seen it, you can recognise it. And then there’s the human thing. That nagging feeling that something is missing, even if you can’t quite put your finger on what it is.

Where Are We Actually Going?

If I had to define it, I would say this: AI will not destroy creativity, but it will change its tempo, its delivery, and possibly its purpose. By 2030, graphic design could be less about technical skill, and more about knowing what you want, asking for it, and then editing it.

But there is one thing that I keep coming back to, and that is, just because we will be able to produce anything we want, on demand, that doesn’t necessarily mean we will stop valuing the human touch. At least, I hope not.

Will AI Art Become the World’s Largest Creative Industry? Extrapolating Based on Current Trends

There comes a point when you stop wondering if AI art is going to be big and you start wondering just… how big can it possibly get?

Because make no mistake, AI art is already seeping into almost every aspect of life, from advertising to gaming to movies to social media, and a thousand things you don’t even realize like interface design. It’s not an “art tool” anymore. It’s infrastructure.

McKinsey estimates that generative AI (images, text, code, video, everything) could contribute up to $4.4 trillion to the global economy on an annual basis.

So the question isn’t will AI art be big. The question is will AI art become the dominant creative stack for all industries.

And to be frank, it kind of looks like it might.

From Images to… Well, Everything

Most people still think of AI art as being all about images. Midjourney images, Instagram images, that sort of thing.

Let’s zoom out a bit.

We’re already moving toward video, 3D models, even entire immersive worlds. These tools are moving from creating images to entire creative stacks.

According to Gartner, by the end of the decade a significant percentage of digital media, across all media types, will be AI generated.

Here’s what that trajectory might look like:

| Medium | 2024 State | 2030 Projection |
|---|---|---|
| Images | Mature | Fully commoditized |
| Video | Emerging | Mainstream AI-generated |
| 3D / Gaming assets | Growing | Heavily AI-assisted |
| UI / Design systems | Partial AI use | Fully AI-driven |

So yeah… “AI art” is probably selling it short, to be honest.

Could It Overtake Traditional Creative Industries?

Let’s just look at the scale for a moment. The global entertainment and media market is expected to top $3 trillion, according to PwC’s Global Entertainment Outlook.

Advertising alone accounts for hundreds of billions of dollars a year. Now imagine AI underpinning all of that, driving the content creation, cutting the costs, increasing the speed of production.

Here’s a rough comparison:

| Sector | Current Size | AI Influence by 2030 |
|---|---|---|
| Advertising | $700B+ | High |
| Gaming | $200B+ | Very high (assets, environments) |
| Film & Media | $2T+ | Increasing (VFX, content generation) |
| AI Creative Tools | Rapid growth | Potential cross-industry dominance |

If AI becomes the default production layer for all these industries… it doesn’t just enter the industry. It transforms it.

The Weird Trade-Off Nobody Talks About Enough

This is where my feelings get a little mixed. On the one hand, this is amazingly powerful. One person can now do what once required a whole team. That’s huge. Genuinely exciting. On the other hand… when anything can be generated, does it not lose a little value?

I find myself scrolling through AI-generated things, not even responding to them. Not because they’re terrible, but just because there’s so much of it. It’s like when everyone has a megaphone. Eventually, it’s all just noise.

So Will It Become the Largest Creative Industry?

If you define industry traditionally, maybe not. But if you define it as the enabler across industries? Then yes, it likely will. And maybe that’s a better way to think about it. AI art isn’t going to replace film, gaming, or advertising; it’s going to sit under them, quietly driving them.

Like electricity. You don’t notice it, but nothing works without it. That’s where it’s heading. And whether that fills you with excitement or anxiety probably depends on how you see the future of creativity.

Everything Else: Multimodal AI-Generated Content (3D, video, design)

There’s a phenomenon that happens when people get introduced to AI-generated art, typically images. Their reaction goes something like, “Alright, cool.” Then a few months later they get shown AI video, or a 3D model, or a fully-designed UI mockup, and it starts to dawn on them that… oh, this isn’t going to stop. Images were the appetizer.

In its report on generative AI, Sequoia Capital says that the next generation of tools will focus on multimodal AI that generates everything from text to image to video to who-knows-what-else, and I think we’re already feeling that in the industry. It’s like the tools are learning how to build worlds.

AI Video: From the Awkward Early Stuff to Cinematic Experiments

AI video is getting good. I remember when I first saw AI video. It was short. Really short. Almost awkward. Like someone took a few frames of footage and looped them together. And the transitions between frames were…a little off. As I said, awkward.

That’s not the case anymore. Longer sequences are now possible. Runway Research says recent breakthroughs in diffusion-based video generation have enabled much better temporal coherence (the fancy way of saying things don’t melt in between frames). Here’s how that’s progressed:

| Stage | Capabilities | Limitations |
|---|---|---|
| Early AI video | Short clips, low coherence | Flickering, distortion |
| Current (2025–2026) | Longer scenes, better motion | Still imperfect realism |
| Near future | Narrative video generation | Ethical + creative challenges |

And I have to say, seeing AI creating what is essentially a movie is both exhilarating and somewhat terrifying. It’s like seeing the first draft of a future we’re not quite prepared for.

3D and Gaming: Sneaking Up Behind the Scenes

This isn’t one of the more widely publicized ones but it’s massive. Game developers and 3D artists are already using AI to generate assets. Textures, environments, even rough models.

A post on Unreal Engine insights mentions that AI driven workflows can greatly reduce production time, particularly in the design phase. So how does AI play a role here?

| 3D Workflow Stage | Traditional Time | AI-Assisted Time |
|---|---|---|
| Concept modeling | Days | Hours |
| Texturing | Hours–Days | Minutes |
| Environment prototyping | Weeks | Days |

And I believe this is where people are missing out on the importance of this technology. It may not be as glamorous as AI-generated images that you see on social media, but it’s transforming whole workflows.

Design Systems: The Silent Transformation

Let’s talk about design. Not the artistic kind, but the more mundane one. UI/UX, layout, product design, etc. AI is being integrated into applications such as Figma and Adobe to automate layout generation, component suggestions, and even real-time design adjustment.

You can read about Adobe’s vision for generative AI here, but it’s clear where this is all heading. Design will shift from designing something from scratch to… well, I’m not sure what. It’s either really cool or really sad depending on who you are.

What Does This Mean For The Future?

This is what I keep coming back to. When images, videos, 3D environments, and designs can be automated and generated, what does it mean to create something? Well, the good news is that it becomes easier for people to create. Anyone can do anything, right?

The bad news is that I can see a world where this becomes too much of a good thing. Information overload and a lack of focus. I don’t believe the solution is to reduce the amount of information being generated (that’s definitely not going to happen).

I think the next skill that needs to be developed is… deciding what is worth generating in the first place.

Daily AI Art Generation Volume: How Many Images Are Created Per Day?

Millions of AI-generated images are created every single day. Platforms process massive volumes of prompts from users worldwide. This scale highlights how quickly AI art has become mainstream. It also raises questions about storage, moderation, and originality.

Average Cost of AI Art vs Human Commissioned Art

AI-generated art is significantly cheaper than hiring human artists. Many tools offer free tiers or low subscription costs. This cost gap is driving adoption across industries. However, it also impacts the perceived value of creative work.

Time-to-Create: AI Art vs Traditional Digital Art

AI can generate complex images in seconds, while traditional art can take hours or days. This speed advantage is one of the biggest drivers of adoption. It allows rapid iteration and experimentation. Creative workflows are becoming faster than ever.

Prompt Engineering: How Much Skill Is Required to Create High-Quality AI Art?

Creating high-quality AI art often depends on prompt quality. Users are developing new skills to guide AI effectively. This has led to the rise of “prompt engineering” as a creative discipline. It changes the role of the artist from creator to director.

Percentage of Businesses Using AI Art for Marketing and Branding

Many businesses now use AI-generated visuals in marketing campaigns. It reduces costs and speeds up content production. Small businesses especially benefit from this accessibility. AI art is becoming a standard tool in digital marketing.

AI Art in Social Media: Share Rates and Engagement Statistics

AI-generated visuals tend to perform well on social media. Their novelty and uniqueness drive higher engagement rates. Users are more likely to share visually striking AI content. This amplifies its reach across platforms.

The Rise of AI Art Marketplaces and Monetization

New platforms are emerging where users can sell AI-generated art. This creates new revenue streams for creators. However, it also raises questions about ownership and originality. The monetization landscape is still evolving.

Percentage of Users Who Can’t Distinguish AI Art from Human Art

Many viewers struggle to tell the difference between AI and human-created art. As models improve, the gap continues to narrow. This challenges traditional definitions of authenticity. It also affects how art is perceived and valued.

AI Art Style Replication: How Accurately Can Models Mimic Famous Artists?

AI models can replicate artistic styles with impressive accuracy. This capability is both powerful and controversial. It raises concerns about intellectual property and artistic identity. Some artists see it as inspiration, others as infringement.

The Role of AI Art in Game Development and Virtual Worlds

Game developers are increasingly using AI art for assets and concept design. This speeds up production cycles and reduces costs. It also enables smaller studios to compete with larger ones. AI is becoming a key tool in game design.

AI Art and NFTs: Are They Still Relevant Together?

AI-generated art has played a role in the NFT space. While the NFT market has fluctuated, AI art remains part of it. Some creators combine both technologies for digital ownership. The long-term relationship between the two is still uncertain.

Hardware Requirements: Can Anyone Create AI Art?

Advances in cloud computing have made AI art accessible to most users. High-end hardware is no longer required. This lowers the barrier to entry significantly. Anyone with internet access can participate.

The Environmental Cost of AI Art Generation

Generating AI art requires computational resources and energy. While individual images are relatively low-cost, large-scale usage adds up. This raises concerns about sustainability. Efficient models and infrastructure are becoming more important.

AI Art in Education: Teaching Creativity with Machines

AI art tools are being introduced in educational settings. Students use them to explore creativity and design. This changes how art is taught and learned. It blends technical and creative skills in new ways.

The Future of AI Art Tools: From Static Images to Interactive Experiences

AI art is evolving beyond static images into interactive and dynamic formats. This includes animations, 3D environments, and real-time generation. The creative possibilities are expanding rapidly. AI may redefine what we consider “art” in the future.

Conclusion

If there is one takeaway after all the facts, figures and testimonials, it is this: AI-generated art is not a fad. It’s being woven into the fabric of art itself. Often quietly, occasionally controversially, but practically irreversibly. Despite the lightning pace and seismic scope, though, there is an undeniably human undercurrent to it all.

Enthusiasm vs. cynicism. Promise vs. insecurity. While some are reaping the benefits, others are struggling to adjust and most are in the middle, trying to find their place. Perhaps that’s the true narrative to be found here, not whether AI will overpower art, but how we’re going to share it.

Technology will continue to advance. Results will continue to improve. The worth we assign to the human eye, the human mind, and human ideas, however… that remains our decision.