This piece is not about data; it’s about the way data is interpreted, manipulated, misused, and often misrepresented. Correlation vs. causality, information obesity, algorithmic bias, and the role of the media are all topics we’ve covered, in the context of where statistical analysis has gone wrong and how it could be improved going forward.

If we’re entering a decade in which AI-based predictions will be used to make hiring, policy, and even geopolitical decisions, then it’s imperative that we learn how to use, apply, and make sense of data.


The Hidden Story in the Data: What Most Analyses Get Wrong

Facts, when they’re presented well, are soothing. Numbers add up. Percentages total 100. The ending is a nice one. The problem is, of course, that the real world isn’t that neat. And when data looks too clean, it probably is. You’ve probably seen a number of these kinds of headlines over the years.

“Unemployment rate falls to 5%!” It’s a great number, for sure… except that the unemployment rate doesn’t include people who’ve given up on finding a job. The World Bank has a dataset on unemployment rates.

A slight variation in the definition for unemployment means a very different picture for a country. So are we looking at reality here, or just a certain version of it?
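To make the definitional point concrete, here is a minimal sketch with made-up labour-market counts (the figures and variable names are illustrative assumptions, not World Bank data). It computes the rate twice: once counting only active job seekers, and once also counting people who have stopped looking.

```python
# Hypothetical labour-market counts, in thousands of people.
employed = 9_500
actively_searching = 500   # jobless and recently looked for work
discouraged = 400          # jobless, want work, but have stopped looking

# Headline definition: only active job seekers count as unemployed.
headline_rate = actively_searching / (employed + actively_searching)

# Broader definition: discouraged workers are counted too.
broader_rate = (actively_searching + discouraged) / (
    employed + actively_searching + discouraged
)

print(f"headline: {headline_rate:.1%}, broader: {broader_rate:.1%}")
```

Same people, same economy, and the rate moves by several points depending on who gets counted.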

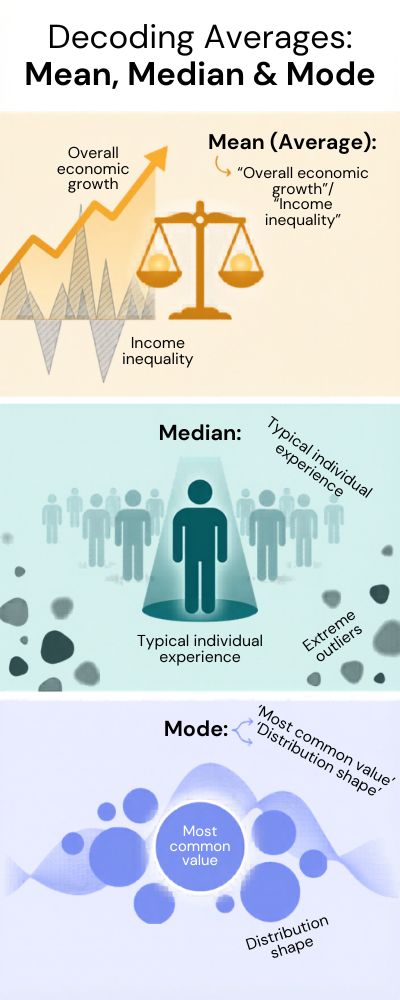

Averages. Averages are the ultimate sugarcoaters. They tell us the truth, but in a way that sounds less bad. For example, if you look at income, the average income in many countries has been steadily rising over the past few decades. That’s a good thing, right?

The OECD has a dataset on income distribution, and we can see that average incomes have been rising steadily over the years. Except that when you look at median incomes, you get a slightly different picture. Wealth is concentrated at the top end, and gains at the top pull the average up much faster than the median.

Here’s what each measure of “typical” tells you, and what it hides:

| Metric Type | What It Tells You | What It Hides |
|---|---|---|
| Mean (Average) | Overall economic growth | Income inequality |
| Median | Typical individual experience | Extreme outliers |
| Mode | Most common value | Distribution shape |

Do you see what I did there? Based on the two, you can make two completely different arguments. And we do, whether we mean to or not.
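If you want to watch the mean and median tell different stories, here is a small sketch using ten invented household incomes (the numbers are assumptions chosen for illustration, not OECD figures). Most households barely move between the two snapshots; the top earner triples.

```python
from statistics import mean, median

# Hypothetical annual incomes (in thousands) for ten households.
incomes_before = [22, 25, 27, 30, 31, 33, 35, 38, 40, 120]
# A decade later: most households barely move, the top earner triples.
incomes_after = [23, 25, 28, 30, 32, 33, 36, 38, 41, 360]

for label, incomes in [("before", incomes_before), ("after", incomes_after)]:
    print(f"{label}: mean = {mean(incomes):.1f}, median = {median(incomes):.1f}")
```

The mean jumps from about 40 to about 65 while the median creeps from 32 to 32.5. Quote the first and you have a growth story; quote the second and you have a stagnation story.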

Correlation: The Misleading Matchmaker

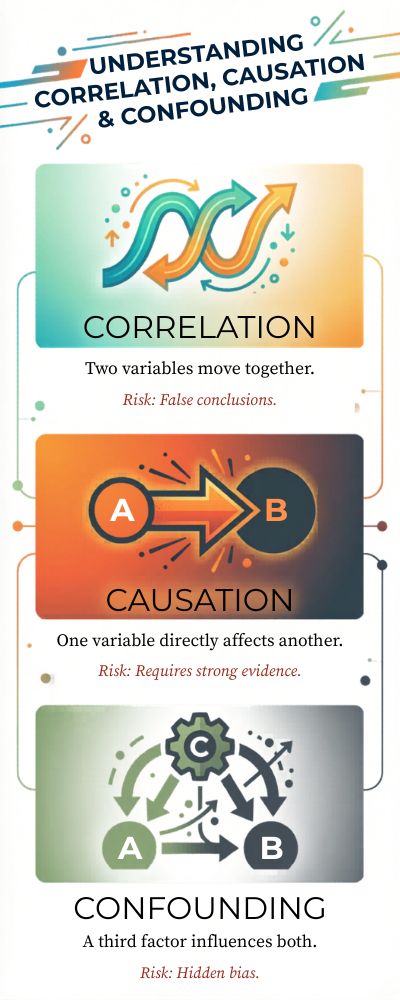

You’ve heard it before: correlation does not equal causation. But damn if we don’t keep falling for it. The classic example from Spurious Correlations is the positive relationship between cheese consumption and death by becoming tangled in your bedsheets. Amusing, right? Except when you realize how often this kind of reasoning slips into real reporting.

The ugly fact is this: humans want explanations. We like cause-and-effect. We like a good narrative. And we’ll invent one if we have to, even if the data is just kinda…shrugging at us.

Missing Data, Missing Truth

Some mistakes are subtle. Sometimes the problem is what’s missing: a few values here, a few cases there, a few data points that it would be really inconvenient to admit we don’t have. At the beginning of the COVID-19 pandemic, numbers were all over the map.

The Our World in Data COVID dataset showed how different results looked depending on how much each country was testing and what their reporting protocols were.

Let me make this clear: if your data is incomplete, your picture is incomplete. Full stop.

So… What Are We Getting Wrong?

Maybe the problem isn’t in the data at all. Maybe it’s in us. We want simple answers. We want them fast. We want headlines that give us a clear picture of what’s going on in a world where nothing is ever clear-cut. And I get it. Nobody wants to see a headline that says, “It depends.”

Except maybe we should.

Because every neat statistic has a story behind it that’s a little messier, a little more nuanced, and a lot more interesting.

From Raw Numbers to Real Narratives: How Statistics Shape the World

You can’t report data. Numbers do not speak. They can’t tell a story. A spreadsheet just is. It doesn’t do anything. It just silently judges you with its rows and columns. When someone comes along and decides what data is important, that’s when the story starts. That’s the human part. It’s subjective. It’s biased. Sometimes it’s inspiring.

If you look at the World Bank data on poverty, you will probably come to the conclusion that extreme poverty rates have dropped over the past few decades. That’s a pretty good story. You can almost hear the soundtrack.

But if you look at that same data and take note of the variance across different regions of the world, and the fact that extreme poverty is measured at $2.15 per day, you might tell a different story. They’re the same numbers, but the narrative is different.

Which one is right? Well, probably both. And that’s where it gets uncomfortable.

The Art of Framing (Or, How to Nudge a Story Without Lying)

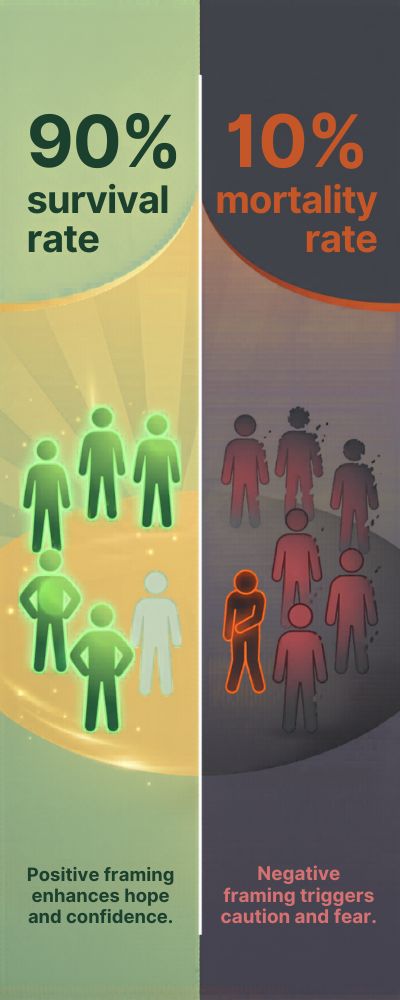

The framing of data is everything. You spin it one way and it means one thing. You spin it another way and it means another. Sometimes this isn’t done with the intent to mislead or deceive; it’s just a different perspective. But let’s not assume it’s always innocent, either.

Let me give you a super simple example:

| Statistic Framing | Interpretation |
|---|---|
| “90% survival rate” | Positive, reassuring |
| “10% mortality rate” | Negative, alarming |

Two stories, same data. The one you choose to believe depends on what you want to hear. I saw this play out during the pandemic.

The Our World in Data COVID statistics demonstrate how the case fatality rate changed based on the amount of testing, the age structure of the population, and the point in time when you look at the data.

But news outlets would pick one and roll with it. I don’t blame them. It’s easier to present a more straightforward story. But just because it’s easier doesn’t mean it’s better.
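The testing effect on the case fatality rate is just arithmetic, and a rough sketch makes it visible. Everything below is hypothetical (the infection count, the deaths, and the detection shares are assumptions, not Our World in Data values): two countries have the identical outbreak, but one detects half of its infections and the other detects a tenth.

```python
# Hypothetical outbreak: the same true infections and deaths in two countries.
true_infections = 100_000
deaths = 500

# Assumed share of infections that each country's testing actually detects.
detection_share = {"tests widely": 0.50, "tests rarely": 0.10}

cfr = {}
for country, share in detection_share.items():
    confirmed_cases = true_infections * share
    # Case fatality rate = deaths divided by *confirmed* cases.
    cfr[country] = deaths / confirmed_cases
    print(f"{country}: CFR = {cfr[country]:.1%}")
```

The disease is equally deadly in both places, yet one headline reads 1% and the other reads 5%.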

When Data Influences Policy (and Impacts People’s Lives)

A lot of the time, data analysis isn’t just about a fun fact or an interesting trend. It’s used to inform policy decisions that affect our lives. Minimum wage, healthcare, and education all rely on data-driven analysis. For example, the OECD education spending data shows how much countries spend per student.

And some spend a lot per student. The implication might be that if a country spends more money, then the education outcomes will be better. But it’s not so simple. There are a lot of factors at play, such as culture, the way a program is implemented, and teacher quality.

So when I hear someone say we need to spend more on education, I wonder: more on what? Throwing more money at something isn’t a solution. It’s a guess with a budget attached to it.

The Humanity of Data (Yes, It Exists)

Data seems so impersonal, but it’s full of humanity. There are stories behind every percentage. Someone lost a job. Someone found a new one. Someone is quietly struggling but no one is graphing it.

The monthly U.S. Bureau of Labor Statistics employment report shows how employment rates steadily recovered, but it doesn’t convey the feeling of a person who can only find gig economy work or someone who is underemployed.

Perhaps that’s what we forget. Data is a summary. Data compresses. Data simplifies. But people don’t fit into spreadsheet cells.

Now What?

Perhaps the best approach is to seek a story that isn’t so clean or straightforward. Be skeptical. Ask for the data behind the narrative.

See if there’s more to the story. Ask questions when a story seems too good (or bad) to be true. Raw data is just the start. The important part, the one that actually matters, is how much you’re willing to dig beyond the data.

The Rise of Data-Driven Decisions: Are We Trusting Statistics Too Much?

You’ve likely heard the phrase tossed around in meetings, in news articles, even in heated discussions: “But the data proves that…” Only, there’s no follow-up debate. No further discussion. No critical questions.

Just an implicit assumption that we should all just go along with whatever the data has dictated. And yet, I have to wonder… when did data get the keys to our thrones?

According to a recent report from McKinsey on data-driven companies, companies that rely heavily on data are significantly more likely to acquire customers and improve profitability.

That’s great. But somewhere along the way, “data-informed” quietly turned into “data-obsessed.” And those two things are not the same.

The Illusion of Objectivity

We tend to think of data as objective. Impartial. Unbiased. But honestly? Someone decided what to track. Someone decided how to track it. And someone decided what to leave out. That’s three subjective decisions before we even opened Excel.

For example, a study cited in the Nature research on algorithmic bias found that AI-powered hiring tools inadvertently perpetuated existing biases. Why? Because they were developed using historical data. And the past… isn’t exactly equitable. So sure, the data looks “clean.” But the inputs? Not so much.

Convenience vs. Common Sense

There’s also a creeping degree of laziness here. And I say that lovingly, because I’ve been guilty of it too. It’s convenient to trust a graph. It’s convenient to follow a metric. It’s convenient to not have to overthink things.

Let me try to illustrate the difference:

| Decision Type | Data-Driven Approach | Human Judgment Approach |
|---|---|---|
| Hiring | Algorithm screening resumes | Interview intuition + context |
| Marketing | A/B testing metrics | Brand storytelling |
| Healthcare | Risk prediction models | Doctor experience |

You’d like to think that they go hand in hand. But let’s be real… they don’t. And right now, it’s data leading the charge.

When Data Gets It… Wrong

You’d like to think that data is infallible. But, we all know it isn’t. In the run up to the financial crisis, risk models based on historical data didn’t come close to predicting the kind of contagion that was possible. Just read the Financial Crisis Inquiry Report.

They weren’t short of data. They were over-confident in it. And that’s the bit that people never want to talk about. Data doesn’t scream when it fails. It just fails. Until it all falls over.

Should We Trust It Less?

No, not really. That would be like driving blindfolded because your GPS once sent you the wrong way. The problem isn’t trust. It’s blind trust. Maybe the question we should be asking is…

Are we asking enough questions before we trust our data? Data is incredibly powerful, don’t get me wrong. But, at the end of the day it is just a tool. And tools, as far as I know, are meant to enable your thinking, not replace it.

Correlation vs. Causation: The Mistake That Still Misleads Millions

You look at two trending lines and you assume that one causes the other. You can’t help it. I’ve done it too often to count. It feels good, like you solved a mystery in two seconds flat. But just because two things trend together does not mean they’re actually related in any way.

Check out the Spurious Correlations dataset: there’s a correlation between US spending on science and suicides by hanging. That’s not insight; that’s coincidence wearing a lab coat. Yet we do it all the time.
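Here is a minimal simulation of why two trending lines so often “match.” The two series below are invented and generated independently; the only thing they share is an upward drift. The sketch compares the correlation of the raw levels with the correlation of the year-over-year changes, which removes the shared trend. The helper names (`pearson`, `yearly_changes`) are mine, not from any dataset or library.

```python
import random
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def yearly_changes(series):
    """Year-over-year differences, which strip out a steady trend."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


rng = random.Random(0)  # fixed seed so the run is repeatable
years = range(30)

# Two made-up series that share nothing except an upward drift.
series_a = [10 + 1.0 * t + rng.gauss(0, 2) for t in years]
series_b = [50 + 3.0 * t + rng.gauss(0, 6) for t in years]

r_levels = pearson(series_a, series_b)
r_changes = pearson(yearly_changes(series_a), yearly_changes(series_b))

print(f"correlation of raw levels:     {r_levels:.2f}")
print(f"correlation of yearly changes: {r_changes:.2f}")
```

The raw levels should come out strongly correlated simply because both go up over time, while the yearly changes should show little or no relationship. Anything that grows for a few decades will correlate with anything else that does.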

Why Our Brains Love False Narratives

Humans are wired to look for patterns. Apparently it’s a survival thing. Look for a pattern, assume causation, take action. Great for prehistoric survival, not so great when looking at data in 2026.

The American Psychological Association published a report on cognitive bias that describes how humans are wired to look for causal explanations even when there are none.

Humans do not like randomness. It feels… unsettling, almost unjust. So we create a narrative to explain it. Unfortunately, it’s not always a good narrative.

Real-World Consequences (It’s Not Just Academic)

This isn’t just an academic exercise in data analysis. It has real-world consequences. Let’s use health studies as an example. We’ve all seen articles like “People who drink coffee live longer.” Great, that means I can drink 3 cups, right?

Unfortunately, research by the Harvard T.H. Chan School of Public Health suggests that most of these studies are just correlations. People who drink coffee may also lead healthier lives in other ways. Was it the coffee or something else? That’s a key difference.

Quick Recap (Because This Can Get Complicated Quickly)

| Concept | What It Means | Risk if Misused |
|---|---|---|
| Correlation | Two variables move together | False conclusions |
| Causation | One variable directly affects another | Requires strong evidence |
| Confounding | A third factor influences both | Hidden bias |

It’s not exactly rocket science, but it’s not quite as simple as people believe it to be either.

The Insidious Effect of Confounders

But then there are times when yes, there is a correlation, but not the one you think there is. A confounding variable has entered the mix and ruined the party. Consider that ice cream sales and drowning deaths both increase during the summer months.

Does eating ice cream cause people to drown? Of course not. The confounding variable here is temperature. You can see in the CDC’s drowning facts that there’s clearly a seasonal component to this, but that doesn’t mean ice cream is to blame (…right?)

Yet somehow, this same kind of reasoning manages to creep into more serious attempts at analysis than we’d care to admit.
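The ice cream example can be simulated in a few lines. In this sketch (all numbers are invented, and the linear relationships are assumptions for illustration), temperature drives both ice cream sales and drownings, and the two never influence each other. It then removes the straight-line effect of temperature from each series and checks what correlation is left.

```python
import random
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def residuals(ys, xs):
    """What is left of ys after removing its straight-line fit on xs."""
    mx, my = mean(xs), mean(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum(
        (x - mx) ** 2 for x in xs
    )
    return [y - (my + slope * (x - mx)) for x, y in zip(xs, ys)]


rng = random.Random(1)  # fixed seed so the run is repeatable

# One simulated year: temperature is the hidden driver of both series.
temperature = [rng.uniform(0, 35) for _ in range(365)]
ice_cream = [20 + 3.0 * t + rng.gauss(0, 10) for t in temperature]
drownings = [1 + 0.2 * t + rng.gauss(0, 1.5) for t in temperature]

raw_r = pearson(ice_cream, drownings)
adjusted_r = pearson(
    residuals(ice_cream, temperature), residuals(drownings, temperature)
)

print(f"ice cream vs drownings, raw:                  {raw_r:.2f}")
print(f"ice cream vs drownings, temperature removed:  {adjusted_r:.2f}")
```

The raw correlation should look impressive; once temperature is accounted for, it should collapse toward zero. The catch in real analyses is that you can only adjust for confounders you thought to measure.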

So Why Do We Keep Making This Mistake?

I think the first reason is pretty simple: because it’s easier. Determining causality takes time, effort, experiments, studies, and critical thought. Determining correlation takes an hour and a fancy title.

And let’s be real: people often don’t want to have to say, “Well, maybe these two things are related, but we can’t be sure.” That’s not going to get your article retweeted.

The Solution (If There Even Is One)

Perhaps the solution is simply a matter of taking a moment to breathe and ask one more question: Could there be another explanation for this? I know, I know, not very sexy. But necessary. Because if we ever stop challenging correlation, that’s the exact moment when we’ll start believing fairy tales.

Big Data, Bigger Problems: When More Information Leads to Worse Conclusions

We tend to assume that more data leads to better decisions. That a larger dataset will provide more precise conclusions, which will lead to a smarter world. It makes sense. It feels right. Unfortunately, it isn’t always true. Sometimes it just means more data noise. Sometimes it just means more ways to be wrong.

According to the IBM data analytics overview, while companies and institutions are generating vast amounts of data, actually making sense of that data is one of the main obstacles to be overcome. Or, to put it more simply, we have plenty of data, but we are still in search of understanding. Ah, the irony.

The Noise Problem (Or, When Everything Looks Important)

The thing about big data is that the more data you have, the more likely you are to find patterns in it. But these may not be meaningful patterns. In fact, they may be completely random. But they could still be convincing enough to get you to believe them.

The more variables you test, the more likely you are to find something that appears statistically significant. But that may only be an accident. For instance, the Nature article on false positives in big data discusses how large datasets increase the risk of spurious findings if not handled carefully.
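You can reproduce this effect with nothing but random numbers. The sketch below (my own illustration, not taken from the Nature article) generates one random “outcome” and 200 random “predictors” that have no relationship to it, then counts how many clear a conventional significance bar. The cutoff of 0.279 is an assumption: it is roughly the correlation needed for p < 0.05 (two-sided) with 50 observations.

```python
import random
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


rng = random.Random(42)  # fixed seed so the run is repeatable
N_OBSERVATIONS = 50
N_VARIABLES = 200
R_CUTOFF = 0.279  # approx. |r| for p < 0.05, two-sided, n = 50 (assumed)

# The thing we are trying to "explain": pure noise.
outcome = [rng.gauss(0, 1) for _ in range(N_OBSERVATIONS)]

false_hits = 0
for _ in range(N_VARIABLES):
    # Each candidate predictor is also pure noise, unrelated to the outcome.
    noise_variable = [rng.gauss(0, 1) for _ in range(N_OBSERVATIONS)]
    if abs(pearson(noise_variable, outcome)) > R_CUTOFF:
        false_hits += 1

print(f"{false_hits} of {N_VARIABLES} random variables look 'significant'")
```

With a 5% threshold you would expect roughly 10 of the 200 to pass by luck alone. Test enough variables and something will always look like a discovery.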

When Scale Hides the Human Story

Big data likes to aggregate. Millions of bits of data condensed into trends and averages and dashboards. Which is all very efficient and convenient. But it can also mask important information. For instance, analyzing social media data is the holy grail of big data. There are billions of social media users, after all.

According to the Pew Research social media statistics, billions of users generate data daily. This is what makes social media data so valuable. But can you really learn about people just by analyzing their likes and clicks and scroll time? I’m not so sure.

It seems a bit like trying to understand a conversation by counting the number of words being spoken. You miss the nuance. The context. The moments of hesitation. You miss the things that matter.

The Overfitting Trap (It Sounds Technical, But Stay With Me)

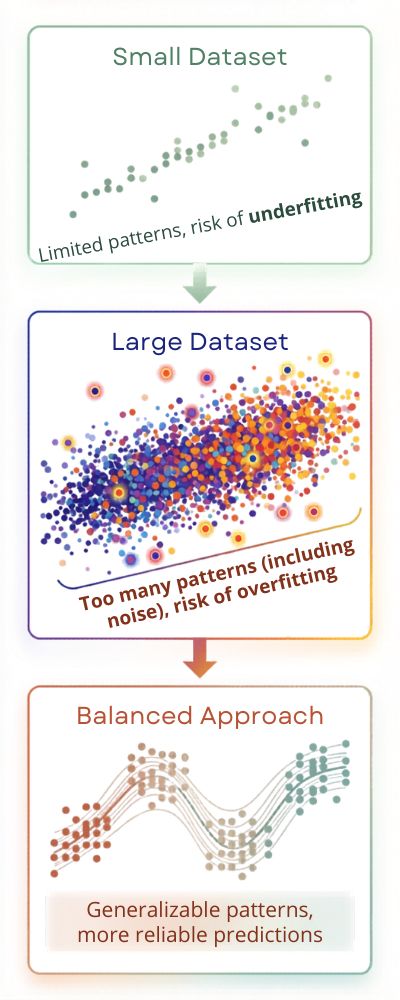

One of the other terms you may hear when people talk about the limitations of big data is overfitting. This is a technical term, but it isn’t as complicated as it sounds. Essentially, overfitting occurs when your model has learned your data too well, including all of the noise. And the usual culprit isn’t the number of rows. It’s how much freedom the model has: the more variables and knobs you give it, the more noise it can memorize. Here’s how that plays out:

| Scenario | What Happens | Result |
|---|---|---|
| Model too simple for the data | Misses real patterns | Risk of underfitting |
| Model too flexible for the data | Fits patterns and noise alike | Risk of overfitting |
| Balanced approach | Generalizable patterns | More reliable predictions |

More variables and more flexible models can make your predictions worse if you are not careful. It’s similar to overthinking a simple problem until you no longer understand it.
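To see a model memorizing noise, here is a small sketch. I’m using a k-nearest-neighbours predictor purely as a convenient stand-in (it is my choice of illustration, not a method from any source cited here), and the data is simulated from a straight line plus noise. With k = 1 the model simply repeats the closest training point, noise and all; with k = 15 it averages over neighbours.

```python
import random

rng = random.Random(7)  # fixed seed so the run is repeatable


def make_data(n):
    """Noisy samples from a simple straight-line relationship."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    return [(x, 0.5 * x + rng.gauss(0, 1)) for x in xs]


def knn_predict(train, x, k):
    """Average the y-values of the k training points closest to x."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    return sum(y for _, y in nearest) / k


def mean_squared_error(train, data, k):
    """Average squared prediction error of the k-NN model on `data`."""
    return sum((y - knn_predict(train, x, k)) ** 2 for x, y in data) / len(data)


train, test = make_data(200), make_data(500)

errors = {}
for k in (1, 15):
    errors[k] = (
        mean_squared_error(train, train, k),  # error on data it has seen
        mean_squared_error(train, test, k),   # error on fresh data
    )
    print(f"k={k:>2}: train MSE = {errors[k][0]:.2f}, test MSE = {errors[k][1]:.2f}")
```

The k = 1 model scores a perfect zero on the data it was trained on and then does noticeably worse than the smoother model on fresh data. A flawless fit to what you’ve already seen is the warning sign, not the goal.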

When Confidence Turns Into Overconfidence

This is the part that I worry about the most. Big data not only answers a question, it answers it confidently. Dashboards, predictions, percentages. Everything looks so precise, so polished, so convincing.

However, precision is not the same as accuracy. Despite big data, models have gotten outcomes wrong. Take, for instance, the 2016 US presidential election.

As Nate Silver mentions in his article, even FiveThirtyEight struggled with uncertainty and incorrect assumptions. Yet people took the predictions as fact. What can we do about this?

Perhaps instead of using less data, we should learn to be more cautious. Perhaps we should just slow down a bit. Perhaps we should ask ourselves more often “What am I not considering here?”

Because more data doesn’t always translate to more insight. Sometimes it just gives you more opportunities to be wrong and that is a bitter pill to swallow, but I think we have to swallow it.

The Psychology of Numbers: Why Humans Misinterpret Statistics

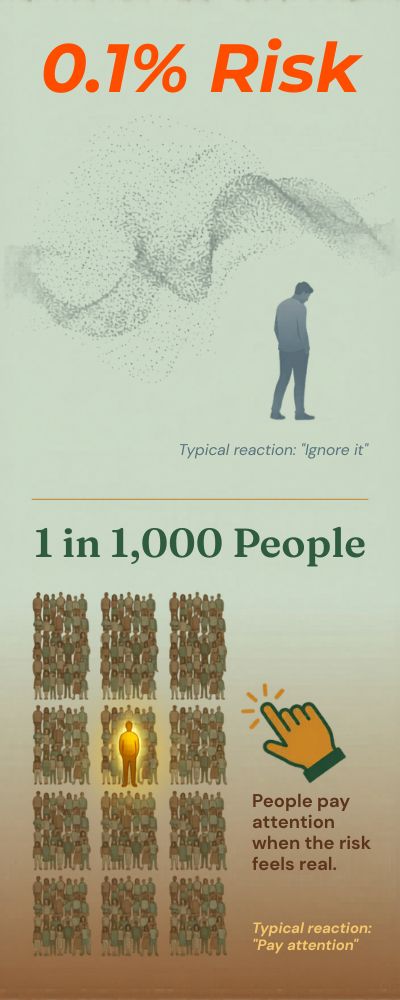

Figures seem chilly. Pristine. Neutral. Even… impersonal. However, once we process them, we start to feel them. A 2% chance? That seems negligible. “One in fifty chance.” Now it’s a little too close for comfort. Same calculation, different emotional response.

A study about risk perception by the National Library of Medicine demonstrates that we are likely to perceive probabilities differently based on the way they are presented. Okay, so we’re clearly not walking around as pure logic machines. More like intuitive interpreters of abstract concepts.

The Brain Loves Shortcuts (Even When They Backfire)

Our brains are workaholics. Constantly sorting through, sorting out and making decisions. So we need to take shortcuts. Mental workarounds. In psychology, they’re called heuristics. I call them “mental shortcuts that screw you over.”

An article by the American Psychological Association on cognitive biases illustrates these mental shortcuts that help us navigate, and the systematic mistakes they lead to. Take the availability heuristic, for instance.

If we commonly hear about plane crashes, we are likely to think that flying is quite dangerous, even if the IATA safety report, year after year, points to aviation as one of the safest modes of transportation.

What’s going on here? Our brain is essentially saying: “If I can easily recall it, then it must be common.” Yeah, that’s not exactly a failsafe strategy.

Percentages vs. People (And Why We Get Confused)

Percentages are funny. They sound concrete, yet they don’t feel concrete.

Here’s a quick example:

| Format | How It Feels | Typical Reaction |
|---|---|---|
| “0.1% risk” | Abstract, distant | Ignore it |
| “1 in 1,000 people” | Personal, tangible | Pay attention |

These are the same fact. But they give a very different impression.
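Converting between the two formats is a one-liner, which is a good reminder that nothing about the risk itself has changed. A minimal sketch (the helper name `as_one_in_n` is my own):

```python
def as_one_in_n(probability: float) -> str:
    """Express a probability as an approximate '1 in N' frequency."""
    if not 0 < probability <= 1:
        raise ValueError("probability must be greater than 0 and at most 1")
    n = round(1 / probability)
    return f"1 in {n:,}"


for p in (0.02, 0.001):
    print(f"{p:.1%} -> {as_one_in_n(p)}")
```

When a percentage leaves you cold, or a “1 in N” makes you anxious, translating it into the other format is a cheap sanity check on your own reaction.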

In health communication, I’ve come across this phenomenon a fair amount. For example, the World Health Organization cancer fact sheet frequently reports risks as a percentage. But when that percentage is converted into absolute numbers, the response is often one of alarm, or dismissal.

The Overconfidence Trap

OK, this one’s a bit awkward: We think we understand statistics more than we really do.

According to a survey cited by the Pew Research Center, most people say they are able to interpret science data, but when quizzed, they struggle with basic statistical concepts.

Which isn’t surprising, really. No-one likes to say, “Sorry, I have no idea what’s going on here.” So we read, nod, share and move on.

But actually? There’s frequently a disconnect between confidence and understanding.

When Emotions Override Logic

The last one isn’t about getting it wrong. It’s about deciding what to believe.

We see a stat that confirms something we already believe and we lap it up. We see one that undermines something we think and we question it, or we ignore it. A classic case of confirmation bias. The kind that subtly moulds our worldview without us even realizing.

And OK, this one isn’t comfortable for me either. Because we all do this. Me too.

So… Can We Get Better at This?

I guess so. But we have to try. We have to slow down. We have to ask, “What does this actually mean?” We might even have to fact-check something before we retweet it.

I know, I know. It isn’t very sexy advice.

But if data is what we base decisions on, and it is, then making sense of it isn’t optional. It’s a requirement. Even when our brains sometimes make it more difficult than it needs to be.

How Media and Governments Use (and Misuse) Statistics

A data point never really makes the news alone. It always has a t-shirt on, one that screams a headline in 40-point font: “20% more crime” or “3% growth in the economy.” You read it, you respond, and boom, the narrative is born. What worries me is what lies beyond that figure.

The Australian Bureau of Statistics (ABS) releases rich datasets, but journalists usually pick just one value from it and report it. They might not consider seasonal adjustments, long-term trends, or regional variation. Not because it’s not important. Because it’s messy. And messy isn’t what goes viral.

Selective Reporting (The Fancy Name for Cherry-Picking)

Everyone does this. Reporters. Politicians. Data analysts. You pick the metric that enhances your narrative and ‘conveniently ignore’ those that don’t. An OECD report on trust in government shows the effect of presenting data in this manner.

Highlight a metric where the data shows improvement and people are optimistic. Highlight a metric where the data shows deterioration, and it’s a crisis. Same data. Different interpretation.

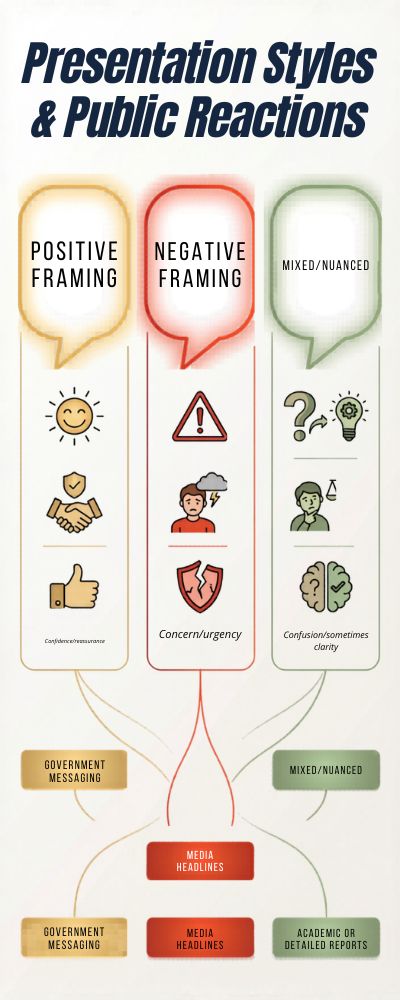

| Presentation Style | Public Reaction | Typical Use Case |
|---|---|---|
| Positive framing | Confidence, reassurance | Government messaging |
| Negative framing | Concern, urgency | Media headlines |
| Mixed/nuanced | Confusion (sometimes clarity) | Academic or detailed reports |

Yeah, nuance never wins that battle.

The Politicization of Statistics

The reason people tend to believe numbers is simple: They’re authoritative. And that’s why it’s so easy to use them to influence public opinion.

When it comes to economic growth, for instance, politicians often tout GDP growth rates as evidence of their effective governance. Except GDP growth doesn’t tell you how that growth is distributed, or whether people feel like their lives have improved.

That’s always fascinated me. Technically, you can have “growth,” but still have people suffering. And the truth can encompass both things. It’s complicated, and therefore, politically inconvenient.

The Problem with Simplification

Now, don’t get me wrong. It’s not like simplification is inherently bad. In many cases, it’s a necessary evil. The problem arises when we oversimplify.

In the case of public health campaigns, for example, data is often condensed into easily digestible messages. While you can find all sorts of detailed health data on the World Health Organization data portal, it’s often presented in the public sphere as a single percentage or risk factor.

That’s helpful. But it can also foster a false sense of certainty.

And once you’ve grabbed onto a simple metric, it’s hard to move away from it. Just try telling someone, “Well, actually, it’s a bit more complicated than that.” You can almost hear their attention trailing off.

So, Misuse or Not?

I’d say a little bit of both. The media needs clicks. Politicians need votes. Statistics are the point of leverage between fact and persuasion.

Except maybe the problem isn’t that statistics are used at all. It’s just that we almost never think about how they’re used.

If we did, it might help to create a little bit of space. Just for a moment. Long enough to ask, “Wait, what’s going on here?” Not in a paranoid way. Just a curious one.

Because the stats aren’t lying. But they’re not telling the whole truth, either. And somewhere in between, that’s where you find the truth.

Statistical Illusions: Famous Cases Where Data Fooled Everyone

Numbers are funny things. They make you feel secure. You look at graphs and percentages and trends, and you think, Well, this is obviously right. How could this be wrong? But the thing is, there are plenty of times when data completely led people astray. Even experts.

The Literary Digest Poll of 1936

In 1936, The Literary Digest conducted a huge poll before the U.S. presidential election. More than 2 million people responded, and the results showed that Alf Landon was going to beat Franklin D. Roosevelt in a landslide. Needless to say, that’s not what happened. Instead, Roosevelt won in a landslide.

So what went wrong? The problem was sampling bias. When The Literary Digest conducted their poll, they mostly sampled richer people (those who owned cars and phones, for example). The Roper Center has a good analysis of what went wrong. Big sample, bad sample, wrong result.
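“Big sample, bad sample” is easy to simulate. The numbers below are invented for illustration and are not the historical 1936 figures: I assume 40% of voters are affluent, that affluent voters favour candidate L at 60%, and that everyone else favours L at 30%. One poll is huge but draws 90% of its respondents from the affluent group; the other is small but random.

```python
import random

rng = random.Random(1936)  # fixed seed so the run is repeatable

# Assumed, illustrative numbers (not the historical figures).
AFFLUENT_SHARE = 0.40                        # share of all voters
SUPPORT = {"affluent": 0.60, "other": 0.30}  # support for candidate L


def poll(sample_size, affluent_share_in_sample):
    """Simulate a poll and return the share of respondents backing L."""
    votes_for_l = 0
    for _ in range(sample_size):
        is_affluent = rng.random() < affluent_share_in_sample
        group = "affluent" if is_affluent else "other"
        if rng.random() < SUPPORT[group]:
            votes_for_l += 1
    return votes_for_l / sample_size


true_support = (
    AFFLUENT_SHARE * SUPPORT["affluent"] + (1 - AFFLUENT_SHARE) * SUPPORT["other"]
)
big_biased = poll(200_000, affluent_share_in_sample=0.90)
small_random = poll(2_000, affluent_share_in_sample=AFFLUENT_SHARE)

print(f"true support for L:       {true_support:.1%}")
print(f"200,000 biased responses: {big_biased:.1%}")
print(f"2,000 random responses:   {small_random:.1%}")
```

The giant poll should confidently call the race for L, while the small random one should land close to the truth. Adding more respondents only makes a biased poll more precisely wrong.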

The Case of the “Perfect” Prediction

Decades later, people were still making the same mistakes. Before the 2008 financial crisis, risk models indicated that mortgage-backed securities were safe. These models were data-driven and mathematically sound. They were trusted by almost everyone in the financial sector.

And yet, when the crisis hit, all of those securities became nearly worthless. As the Financial Crisis Inquiry Report details, pre-crisis models didn’t account for rare, extreme risks. The data wasn’t necessarily wrong; it was just incomplete. (And a bit too rosy.) Do you see a theme developing here?

Simpson’s Paradox (This One’s Sneaky)

This one is a bit of a brain-twister. Simpson’s paradox is a statistical phenomenon in which a trend that’s apparent in lots of different data sets somehow disappears (or even reverses) when all of those data sets are combined.

My favorite example of Simpson’s paradox comes from a medical study discussed by the Stanford Encyclopedia of Philosophy. It showed that a particular treatment had a higher success rate in general but a lower success rate when the data was separated out into different subgroups.

Here’s a simplified illustration of what I’m talking about:

| Group | Treatment A Success | Treatment B Success |
|---|---|---|
| Group 1 | 90% | 85% |
| Group 2 | 60% | 55% |
| Combined | 65% | 70% |

Wait… what?

Yeah. It sounds fishy because it is. But it’s true. And if you miss it, it completely inverts your results.
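If the table still feels like a magic trick, it helps to put patient counts behind the percentages. The counts below are hypothetical; I picked them so that they reproduce the table exactly. The trick is in the group sizes: Treatment A was given mostly to the harder-to-treat Group 2.

```python
# (successes, patients) per treatment and group. Counts are hypothetical,
# chosen so the percentages match the table above.
results = {
    "A": {"Group 1": (90, 100), "Group 2": (300, 500)},
    "B": {"Group 1": (255, 300), "Group 2": (165, 300)},
}

group_rates = {}
combined = {}
for treatment, groups in results.items():
    group_rates[treatment] = {}
    for group, (successes, patients) in groups.items():
        group_rates[treatment][group] = successes / patients
        print(f"Treatment {treatment}, {group}: {successes / patients:.0%}")
    # Pool both groups: add up successes and patients before dividing.
    total_successes = sum(s for s, _ in groups.values())
    total_patients = sum(n for _, n in groups.values())
    combined[treatment] = total_successes / total_patients
    print(f"Treatment {treatment}, combined: {combined[treatment]:.0%}")
```

Treatment A wins inside each group, but because five of every six A patients came from the tougher group, its pooled rate gets dragged down below B’s. The combined row is really comparing two different mixes of patients.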

The Google Flu Trends Misfire

This one is a bit more recent. Google Flu Trends attempted to predict the onset of flu epidemics based on search data. And at first the results were encouraging, even a bit spooky. Big data in real time trumping traditional knowledge.

Then it started predicting way too many cases of the flu.

According to a study published in Science, the algorithm interpreted the increase in searches (more people searching for symptoms) as the disease spreading.

In other words, it was measuring hypochondria, not actual illness.

Which, if you think about it, is pretty human… just not very reliable.

Why Do We Keep Falling for This?

Part of it is trust. Numbers seem dependable. They give us a feeling of control over an unpredictable world.

But maybe we trust them a little too easily.

Because data is always the result of a series of choices: what to measure, what to leave out, how to measure it, what it signifies. And those choices? They’re human. Imperfect. Sometimes biased, sometimes rushed.

So What’s the Moral of the Story?

Well, it’s not “Don’t trust data.” That would be throwing out the baby with the bathwater.

It’s more like… just be a little skeptical. Not mistrustful, just… vigilant.

Because if these examples teach us anything, it’s that even the most alluring statistics can mislead us. And they almost always do it without making a sound.

The Future of Statistics in the Age of AI and Automation

It’s almost weird to contemplate. There was a time when we did math by hand, then in spreadsheets. Now data analysis isn’t just automated; the interpretation is automated too. Insights are drawn by machines. In some cases, those insights are even used to make decisions.

The PwC AI impact report states that AI could add up to $15.7 trillion to the global economy by 2030. That’s not just a number; it’s a change in the way business is done at a high level.

And yet, the thing that keeps nagging at me is… what happens to human judgment if the analysis is being automated?

Automation: Better, But a Little… Cold?

AI loves patterns. Give it enough data, and it will identify trends faster than any human can. In theory, that means no human error and no bias.

But in practice, it’s a little more complicated. The NIST AI risk management framework notes that automated systems can mirror and even amplify the biases present in their data sources. So instead of removing our imperfections, we’re just magnifying them.

Kind of scary when you think about it, to be honest.

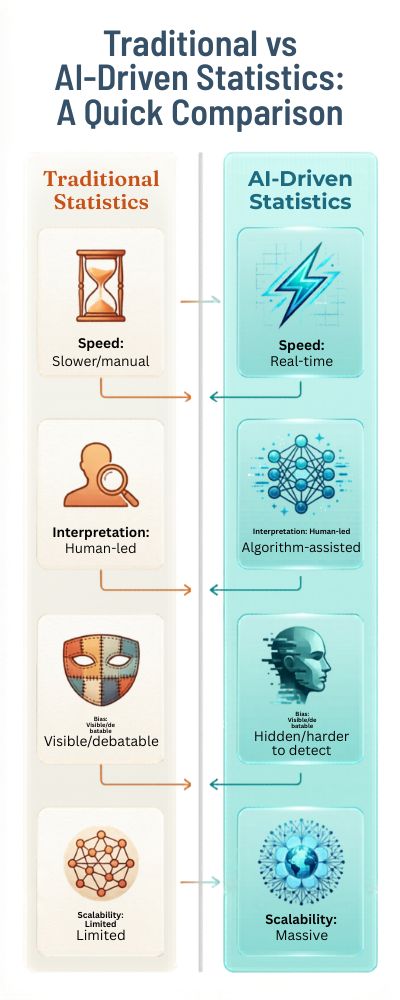

Here’s a quick overview of what I mean:

| Aspect | Traditional Statistics | AI-Driven Statistics |
|---|---|---|
| Speed | Slower, manual processes | Real-time analysis |
| Interpretation | Human-led | Algorithm-assisted |
| Bias | Visible, debatable | Hidden, harder to detect |
| Scalability | Limited | Massive |

It’s not that one is superior. They are just completely different beasts.

Prediction vs Understanding

AI can do prediction exceptionally well. Whether it’s about purchasing behavior, disease spread or route optimization, it can sense patterns to an uncanny level of accuracy.

McKinsey’s State of AI in 2023 reports that more companies are applying AI to predictive analytics. And it works. In some cases, it works better.

But prediction and understanding aren’t the same thing.

You can predict what will happen without knowing why, and there is a difference. A difference that matters more than we think. Because if you don’t know why, you can’t adjust when the why changes.

The Human Element Isn’t Going Anywhere (Hopefully)

There is a silent fear that statisticians (or analysts, in general) will be redundant. I don’t think so. Not completely, at least.

Because someone still has to ask the right questions. Someone still has to formulate the problem. Someone still has to interpret the results. AI doesn’t care about the why; it only cares about the what.

The why? That’s still someone’s job. For now, at least.

So… What Does the Future Actually Look Like?

Not a replacement. More like a partnership, awkward at first, perhaps a tad one-sided, but necessary nonetheless.

We will rely on AI for its speed and scale, of course. But we will also need humans to check it, to question it, to sometimes tell it, “Hang on a minute, something doesn’t feel right.”

Because if we hand the keys of statistics over to automation entirely, we risk losing something harder to quantify but no less important: the ability to be skeptical.

And let’s be clear, a world without skepticism? It isn’t as efficient as it sounds. It’s just… dangerous.

Data Ethics and Bias: Can We Ever Achieve Truly Objective Analysis?

Objectivity has a nice ring to it. It sounds clean and fair and uncontaminated by the vagaries of humans. But I have to confess, I never bought into the idea of data that’s completely “neutral.” Not because data is the problem, but because humans are involved at every step.

Someone has to choose what to measure. Someone has to define the taxonomy. Someone has to clean the data (and make a few silent data quality decisions along the way). That’s a trio of subjective decisions even before you’ve started analyzing anything.

UNESCO’s Recommendation on the Ethics of Artificial Intelligence calls out this same concern: bias can enter at various points, from data collection to the design of the algorithm. So when we say “objective,” we really mean “we did our best.” Which, let’s be honest, isn’t quite the same thing.

Bias Isn’t Always Loud

Here’s the thing: sometimes bias isn’t even that noticeable. Sometimes it doesn’t arrive with a giant red flag. Sometimes it’s hidden, so embedded in the system you hardly even notice it’s there. For example, facial recognition.

NIST’s Face Recognition Vendor Test found that facial recognition systems tended to have higher error rates for certain demographic groups.

Not because the algorithms were actively biased or “intentionally unfair,” but simply because the training data wasn’t equally representative. And that’s the part that makes us uncomfortable. Bias doesn’t require malice. All it requires is imbalance.

When Fairness Gets Complicated

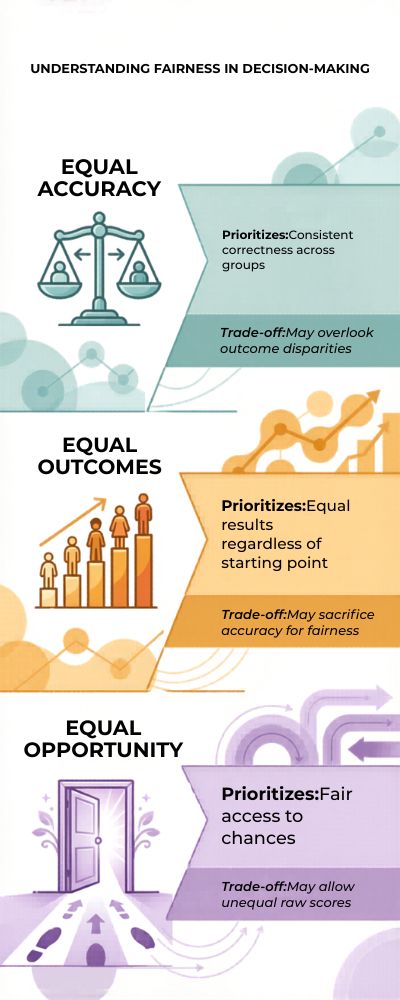

Okay, so maybe you try to address bias. Awesome. But then you hit another issue: what exactly does “fair” mean? Equal outcomes? Equal opportunity? Equal error rates?

Here’s a simplified example:

| Fairness Definition | What It Prioritizes | Potential Trade-Off |
|---|---|---|
| Equal Accuracy | Same performance for all groups | May ignore structural inequality |
| Equal Outcomes | Same results across groups | May require heavy intervention |
| Equal Opportunity | Same chances of success | Hard to measure consistently |

There is no silver bullet. Every solution comes with a trade-off. When you fix one thing, you unbalance another. It’s like trying to adjust the balance on a seesaw. You get one end evened out and the other end tips.
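
To see how these definitions can pull apart, here is a rough Python sketch on invented data. It assumes one common way of turning each definition into a number: accuracy per group for equal accuracy, the share of positive predictions (selection rate) for equal outcomes, and the true positive rate for equal opportunity. Those mappings, the toy records, and the `fairness_report` helper are assumptions for illustration, not a standard taken from any of the sources cited here.

```python
from collections import defaultdict

# Hypothetical toy records: (group, true_label, predicted_label).
# 1 = positive outcome (say, "approved"), 0 = negative.
RECORDS = [
    ("A", 1, 1), ("A", 1, 1), ("A", 0, 0), ("A", 0, 1),
    ("B", 1, 1), ("B", 1, 0), ("B", 0, 0), ("B", 0, 0),
]


def fairness_report(records):
    """Return per-group accuracy, selection rate, and true positive rate."""
    by_group = defaultdict(list)
    for group, y_true, y_pred in records:
        by_group[group].append((y_true, y_pred))

    report = {}
    for group, pairs in by_group.items():
        n = len(pairs)
        correct = sum(1 for t, p in pairs if t == p)
        selected = sum(p for _, p in pairs)
        # Predictions for the cases that truly deserved a positive outcome.
        preds_for_positives = [p for t, p in pairs if t == 1]
        report[group] = {
            "accuracy": correct / n,
            "selection_rate": selected / n,
            "true_positive_rate": (
                sum(preds_for_positives) / len(preds_for_positives)
                if preds_for_positives else None
            ),
        }
    return report


report = fairness_report(RECORDS)
for group, metrics in sorted(report.items()):
    print(group, metrics)
```

On this toy data both groups get the same accuracy (0.75), yet group A is selected three times as often as group B (0.75 vs 0.25), and qualified members of group B are approved only half the time (a true positive rate of 0.5 vs 1.0). Passing one fairness check says very little about the other two.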

The Human Factor (Again… It always comes back to this)

Somewhere near the end of most projects, someone will pop up and ask, “Wait a minute… Does this even make sense?” That question is more important than we like to think.

Ethics is not just technical. It’s human. It’s about empathy. It’s about thinking of the impact of a decision on individuals, not just statistics.

The OECD AI principles call for accountability and transparency, but those concepts are worthless unless individuals embrace them. Otherwise, they are nothing more than words in a document.

So… Can We Ever Be Truly Objective?

No. And perhaps that is fine.

The pursuit of absolute objectivity may be a fool’s errand. Perhaps the objective (see what I did there?) should be awareness. We need to understand that there is bias, to challenge assumptions and to be willing to say, “This doesn’t feel right.”

Because if we claim absolute objectivity, that is when we are most likely to get it wrong.

If we accept that we are flawed, perhaps we can do a little better. Not great. Just… better.

From Spreadsheets to Predictions: The Next Evolution of Statistical Thinking

Data analytics used to be about looking backward: Last quarter’s sales, last year’s unemployment rate, the previous round of test scores. It was helpful, but also somewhat like trying to drive by looking in the rearview mirror.

Today, data analytics is about looking forward: Who is likely to buy my product next quarter? Who is likely to default on a loan? Which patients are in danger of complications?

It’s not hard to see why this is the case. According to the McKinsey State of AI report, predictive analytics is one of the fastest-growing areas of data analytics across a wide range of industries. We’re no longer just interested in what happened yesterday, or last quarter, or last year. We want to know what is going to happen tomorrow.

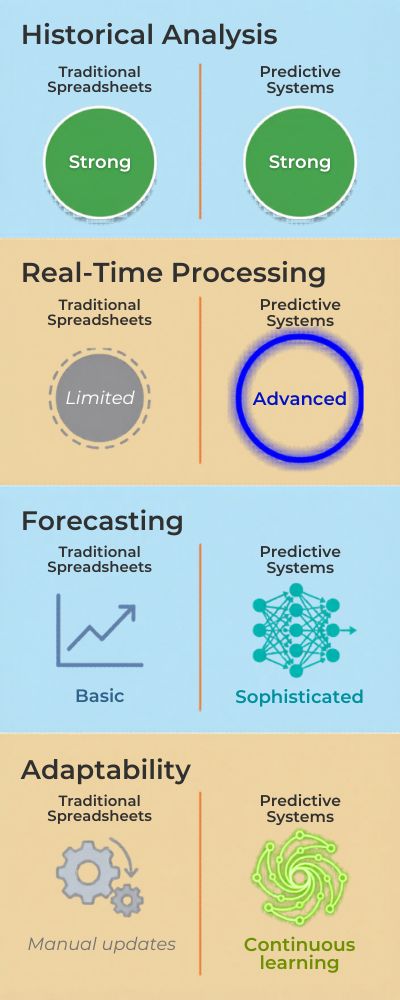

The Age of Spreadsheets (So Comfy, but so Basic)

Don’t get me wrong. Spreadsheets are fine. They are incredibly useful, and I have personally saved many a project from ruin by reaching for one at just the right moment. But they are meant for data manipulation and summarization, not prediction.

You can average values, plot data, and even do a little bit of regression analysis if you’re feeling fancy. But predict what will happen in the future in real time? Not so much.

| Capability | Traditional Spreadsheets | Predictive Systems |
|---|---|---|
| Historical Analysis | Strong | Strong |
| Real-Time Processing | Limited | Advanced |
| Forecasting | Basic | Sophisticated |
| Adaptability | Manual updates | Continuous learning |

Spreadsheets aren’t going away. They’re just… sluggish by comparison.

Prediction Feels Powerful (And a Bit Addictive)

There’s something almost addictive about being able to predict the future. You input some data, fiddle with some settings, and voila! Instead of just analysis, you can anticipate. It’s kind of like a superpower.

IBM’s overview of predictive analytics describes how businesses use these models to predict future trends and make better decisions. And yeah, it’s pretty cool.

Prediction Can Be a False Sense of Security

Here’s the part that worries me. Predictive analytics can create the illusion of certainty. Like we understand more than we really do.
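
One small habit that pushes back on that illusion is to report a range alongside every point forecast. The Python sketch below is deliberately crude: the `history` figures are invented, the `naive_forecast` helper is hypothetical, and a band of two sample standard deviations around the mean is a rough rule of thumb rather than a formal prediction interval.

```python
import statistics

# Hypothetical monthly sales figures, invented for illustration.
history = [100, 120, 90, 110, 80]


def naive_forecast(values, width=2.0):
    """Return (point, low, high): the historical mean and a rough band
    of `width` sample standard deviations on either side of it."""
    point = statistics.mean(values)
    spread = statistics.stdev(values)
    return point, point - width * spread, point + width * spread


point, low, high = naive_forecast(history)
print(f"Point forecast: {point}")
print(f"Rough plausible range: {low:.1f} to {high:.1f}")
```

"Next month will be 100" sounds like knowledge. "Somewhere between roughly 68 and 132" sounds like what it actually is: an informed guess.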

The Problem of “Why”

This is the part that doesn’t get talked about as much. We’re getting better and better at predicting the “what” … but not always the “why.” A predictive model might tell you that a customer is likely to churn. Great. But why? Is it because of price? Experience? Something you never measured?

If you don’t know the “why,” then you’re still just guessing … even if your guess is powered by fancy analytics.

A Harvard Business Review article on why analytics projects fail talks about the disconnect between prediction and insight. And honestly … it’s kind of annoying. You have the answers … but not the explanations.

The Future of Statistical Thinking

Statistical thinking is shifting from a mostly retrospective practice to a more prospective one. From reports to models. But perhaps the biggest shift is in our understanding of what it means to “know” something.

Does a predictive model mean you know something? Or does it just mean you have a really good guess? I’m not sure there is a straightforward answer.

What I do know is this: predictive analytics tools are getting better and better. But if we don’t keep asking questions, seeking explanations, and every once in a while challenging the tools … then we risk becoming the passengers rather than the drivers.

Which would be a bit ironic … since we’re the ones building the car in the first place.

Global AI Market Size by 2030: How Fast Is It Really Growing?

The global AI market is projected to reach trillions in value by the early 2030s. Growth is being driven by enterprise adoption, automation, and consumer applications. However, forecasts vary widely depending on assumptions about regulation and infrastructure. Understanding these projections requires looking beyond headline numbers to the underlying drivers.

AI Contribution to Global GDP: A $15 Trillion Boost?

Some estimates, such as PwC’s, suggest AI could add over $15 trillion to the global economy by 2030. This growth is expected to come from productivity gains and new business models. However, the distribution of these benefits may be uneven across regions. Developed economies are likely to capture a larger share early on.

Job Displacement vs Job Creation: The Net Impact of AI by 2035

AI is expected to both eliminate and create jobs across industries. While automation may replace repetitive roles, new positions will emerge in tech, data, and AI oversight. The key question is whether job creation will outpace displacement. Reskilling and education will play a critical role in this balance.

The Automation Index: Which Industries Are Most at Risk?

Certain industries, such as manufacturing, customer service, and logistics, are more exposed to automation. AI is rapidly improving efficiency in these sectors. However, not all roles within these industries are equally vulnerable. Hybrid jobs that combine human judgment with AI tools may prove more resilient.

AI Adoption Rates by Country: Who’s Leading the Race?

AI adoption is not uniform across the globe. Countries like the U.S., China, and parts of Europe are leading in investment and deployment. Meanwhile, emerging markets are catching up but face infrastructure challenges. These differences will shape the global balance of power in AI.

Regulation vs Innovation: How Laws May Slow (or Accelerate) AI Growth

Governments are introducing regulations to address risks like bias, privacy, and misuse. While these rules can protect users, they may also slow innovation. Some regions may adopt stricter frameworks than others. This could create fragmented AI ecosystems worldwide.

The Cost of AI Implementation: Enterprise Spending Trends

Businesses are investing heavily in AI infrastructure, talent, and tools. Initial costs can be high, but long-term savings often justify the investment. Cloud-based AI is lowering barriers for smaller companies. This is accelerating adoption across industries of all sizes.

AI Talent Shortage: How Big Is the Skills Gap?

There is a growing gap between demand for AI talent and available skilled workers. Companies are competing for engineers, data scientists, and AI specialists. This shortage is driving up salaries and influencing education trends. It may also slow adoption in some sectors.

The Rise of AI-Augmented Workforces

Instead of fully replacing workers, many companies are adopting AI to augment human roles. Employees use AI tools to increase productivity and efficiency. This hybrid model is becoming the dominant approach. It shifts the focus from replacement to collaboration.

AI in Healthcare: Forecasting Adoption and Impact

AI is expected to transform healthcare through diagnostics, treatment planning, and automation. Adoption is growing but faces regulatory and ethical hurdles. The potential benefits include improved outcomes and reduced costs. However, trust and data privacy remain key concerns.

AI and Education: Personalized Learning at Scale

AI is reshaping education by enabling personalized learning experiences. Students can receive tailored content and feedback. This could improve outcomes and accessibility worldwide. However, it also raises questions about data use and the role of teachers.

Energy Consumption of AI: The Hidden Cost of Growth

As AI models grow more complex, their energy demands are increasing. Training large models requires significant computational resources. This has environmental implications that are often overlooked. Sustainable AI development is becoming a critical issue.

AI in Cybersecurity: Defense vs Offense Statistics

AI is being used both to defend against and carry out cyberattacks. Organizations are deploying AI for threat detection and response. At the same time, attackers are using AI to create more sophisticated threats. This creates a constantly evolving arms race.

Consumer AI Adoption: How Many People Will Use AI Daily by 2030?

AI is expected to become part of everyday life for billions of users. From virtual assistants to recommendation systems, usage is expanding rapidly. Daily interaction with AI may become the norm rather than the exception. This will reshape digital behavior globally.

The Rise of Autonomous Systems: From Cars to Factories

Autonomous technologies powered by AI are advancing quickly. Self-driving vehicles and automated factories are key examples. Adoption will depend on safety, regulation, and public trust. These systems could significantly change transportation and manufacturing.

AI Startups and Investment Trends

Investment in AI startups continues to grow year over year. Venture capital is flowing into areas like generative AI, robotics, and automation. This fuels innovation but also creates market competition. Not all startups will survive, leading to consolidation over time.

The Role of Open vs Closed AI Models in Future Growth

There is an ongoing debate between open-source and proprietary AI models. Open models encourage innovation and accessibility. Closed models offer control and monetization opportunities. The balance between the two will shape the future AI landscape.

AI Bias and Fairness: Measuring Progress Over Time

Efforts to reduce bias in AI systems are increasing. However, measuring fairness remains complex. Progress is being made, but challenges persist. Transparency and accountability will be key to building trust.

Global AI Infrastructure: Data Centers and Compute Power Growth

AI growth depends heavily on infrastructure like data centers and GPUs. Investment in compute power is accelerating worldwide. This infrastructure race is becoming a strategic priority for governments. It will determine who can scale AI capabilities effectively.

Conclusion

If there is one thing that keeps coming up, it’s this: data is powerful, but it’s not smart. It requires analysis, criticism, and perspective… and yeah, sometimes a gut check.

In the next ten years we can expect more accurate predictions, quicker analysis, and more precise models. But precision without perspective? That’s a very bad bet.

We are not moving to a world where people are overtaken by statistics; we are moving to a world where we will have to work alongside them more closely.

That will require even better analysis, earlier recognition of bias, and an even more critical eye. Because ultimately, statistics do not predict our future. We do. The question is, will we use statistics as a guide… or slowly cede control?

Sources:

- Spurious Correlations

- World Bank data on poverty

- Our World in Data COVID

- Bureau of Labor Statistics

- Harvard T.H. Chan School of Public Health

- IBM data analytics overview

- Nature article on false positives

- Pew Research social media statistics

- National Library of Medicine

- Australian Bureau of Statistics (ABS)

- GDP growth

- World Health Organization

- Stanford Encyclopedia of Philosophy

- Google Flu Trends

- PwC AI impact report

- NIST AI risk management framework

- NIST Face Recognition

- OECD AI principles